The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

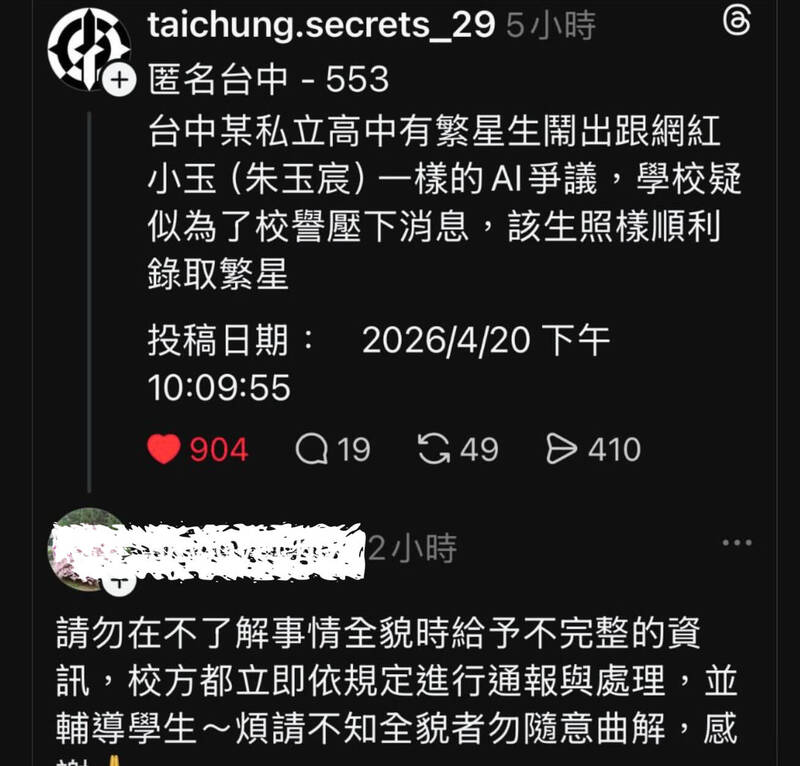

Two male high school students in Taichung, Taiwan, used AI deepfake technology to create and distribute non-consensual sexual images of nearly 20 female classmates. The incident caused significant psychological harm and privacy violations. Authorities and schools have launched investigations, and university admission for one perpetrator is under review. [AI generated]