The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

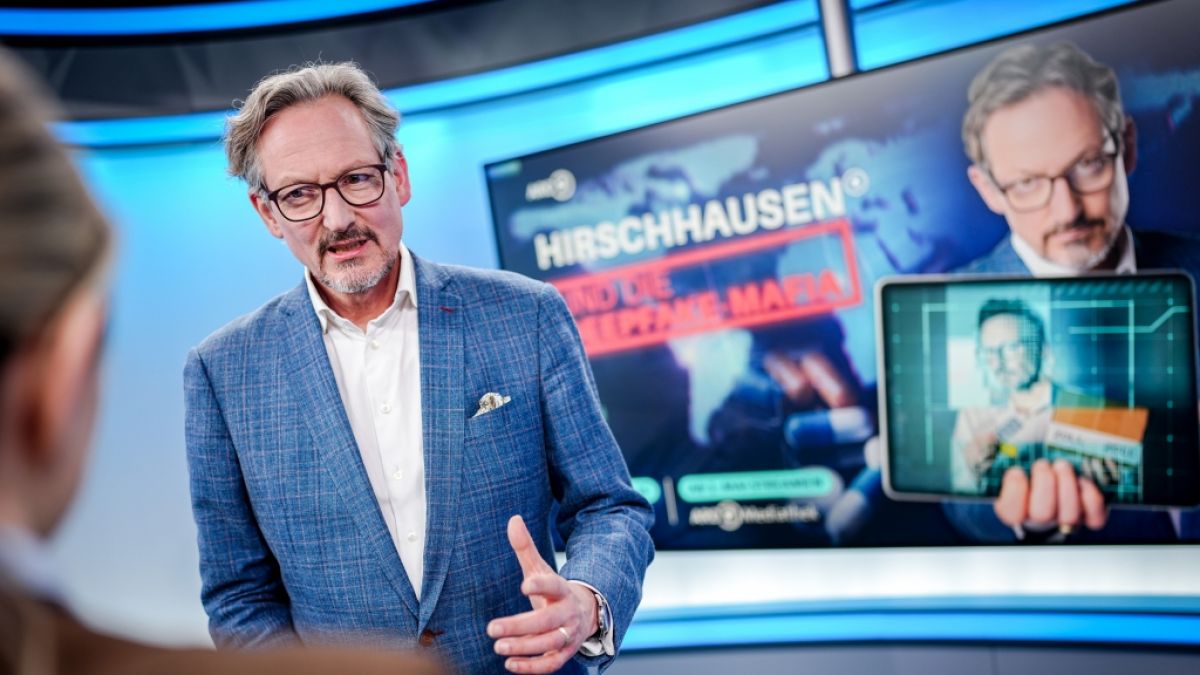

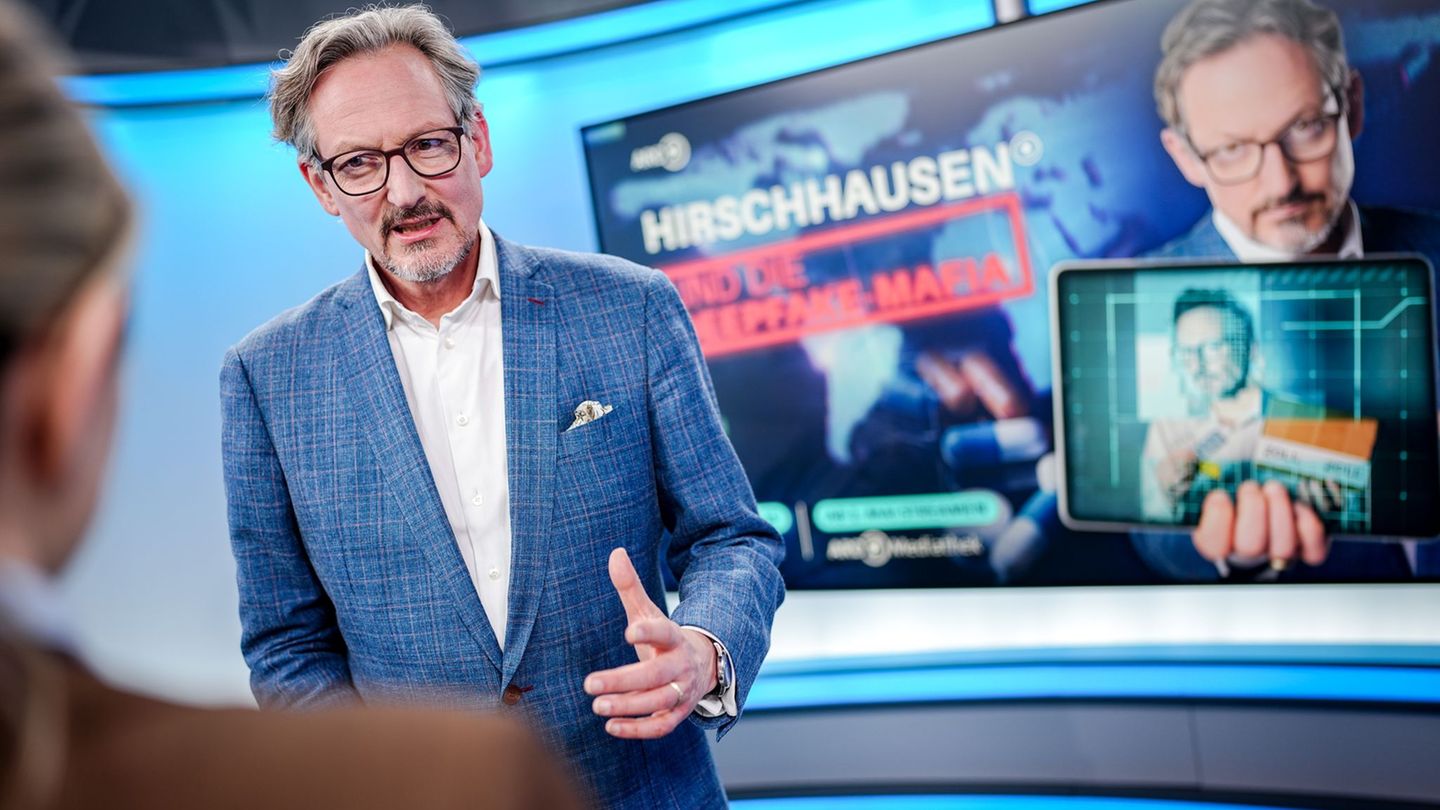

Criminal networks have used AI-generated deepfake videos and audio to impersonate German doctor Eckart von Hirschhausen, promoting fake medical products online. This has led to widespread deception, financial loss, and potential health risks for victims. Despite legal action, many deepfake ads remain online, highlighting ongoing harm and regulatory challenges. [AI generated]