The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

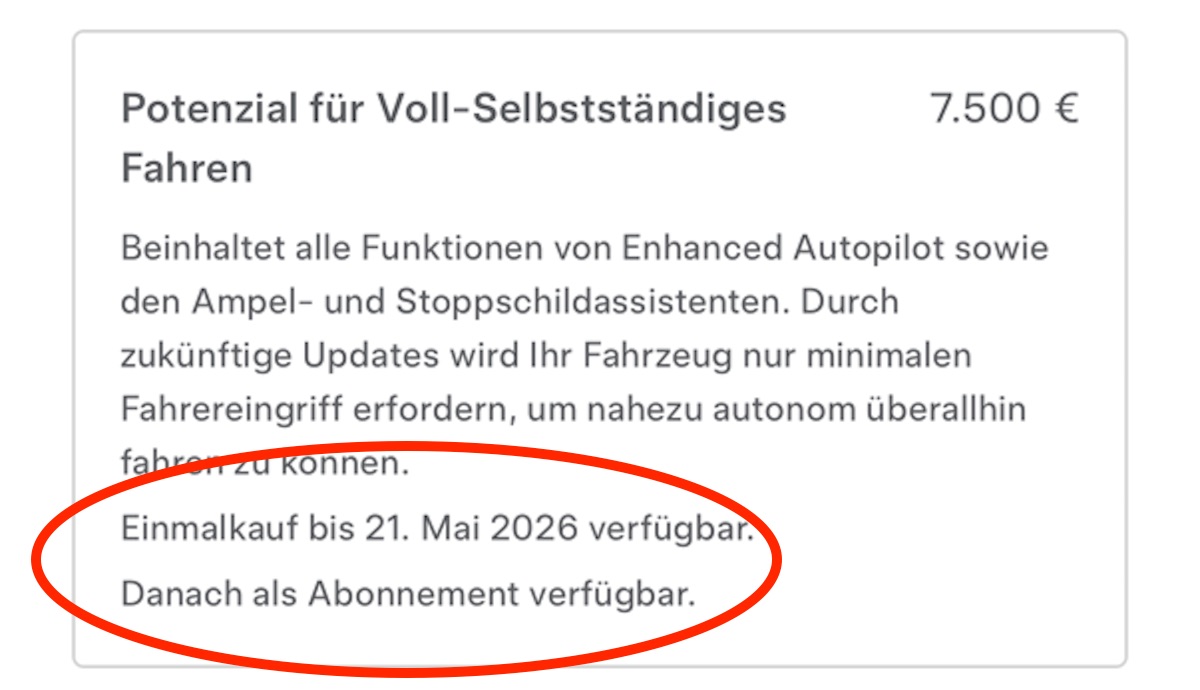

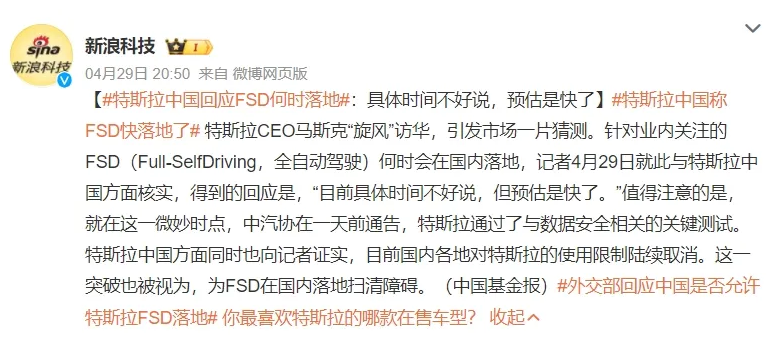

Tesla's Full Self-Driving (FSD) system faces significant regulatory scrutiny in the EU, with authorities from several countries raising concerns about safety issues such as speeding, performance on icy roads, and potentially misleading naming. Approval is delayed as regulators question the system's readiness and public safety implications. [AI generated]
