The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

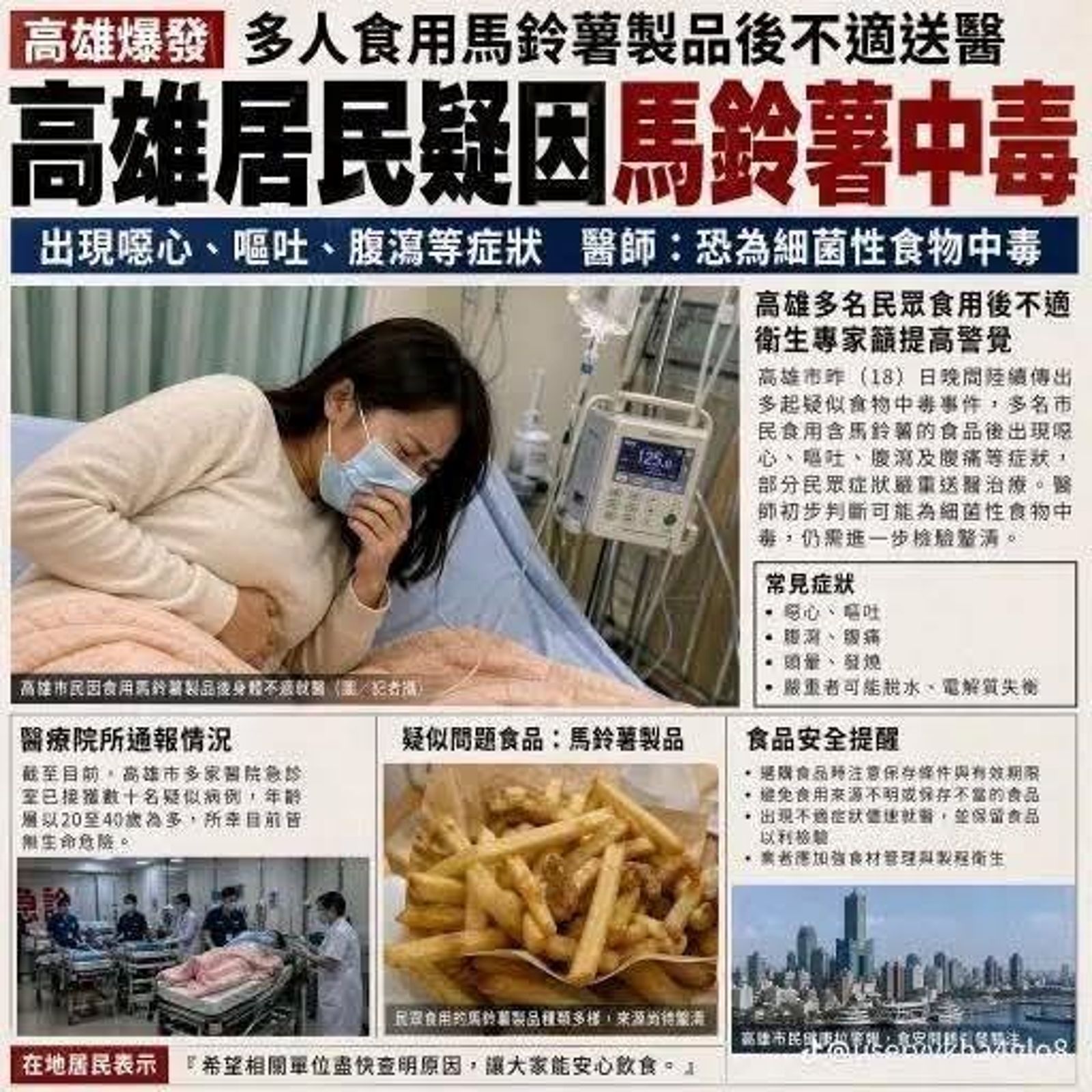

A man in Taiwan used AI to fabricate and spread false news and images on Facebook, claiming multiple people in Kaohsiung were poisoned by potatoes. The misinformation caused public fear, disrupted business operations, and required significant government resources to clarify. Authorities quickly investigated and prosecuted the individual under food safety laws. [AI generated]