The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

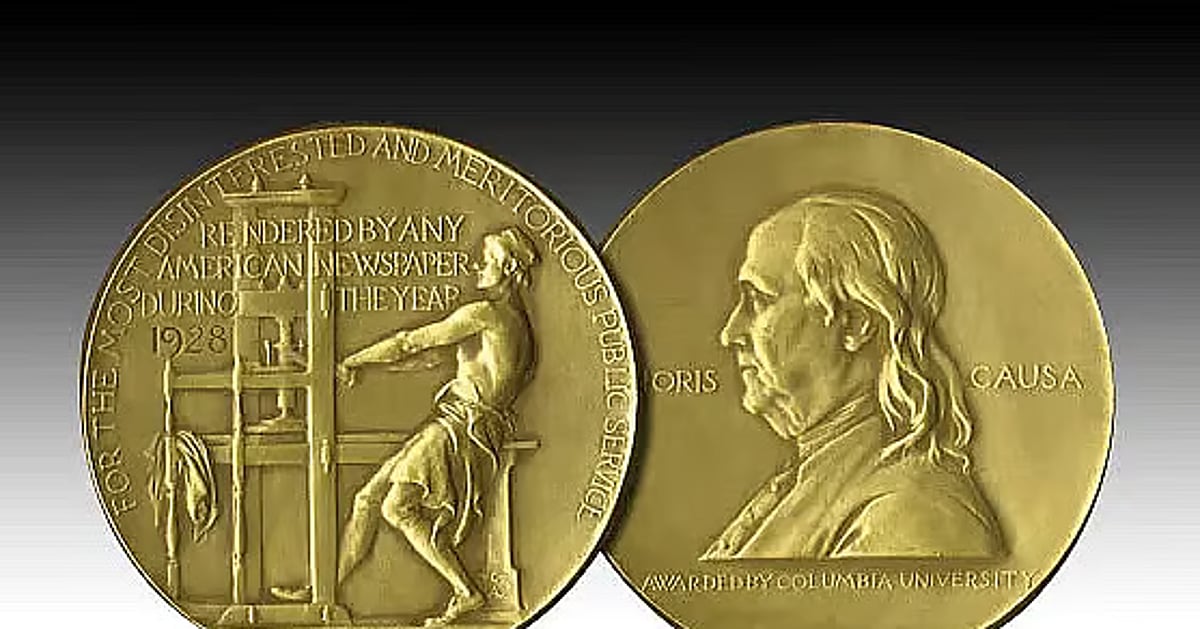

Reuters won a Pulitzer Prize for revealing how Meta knowingly exposed users, including children, to harmful AI chatbots and fraudulent ads. The investigation documented direct harms, including a fatality and widespread scams, and prompted regulatory and corporate responses. The incident highlights significant risks from the misuse of AI systems.[AI generated]