The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

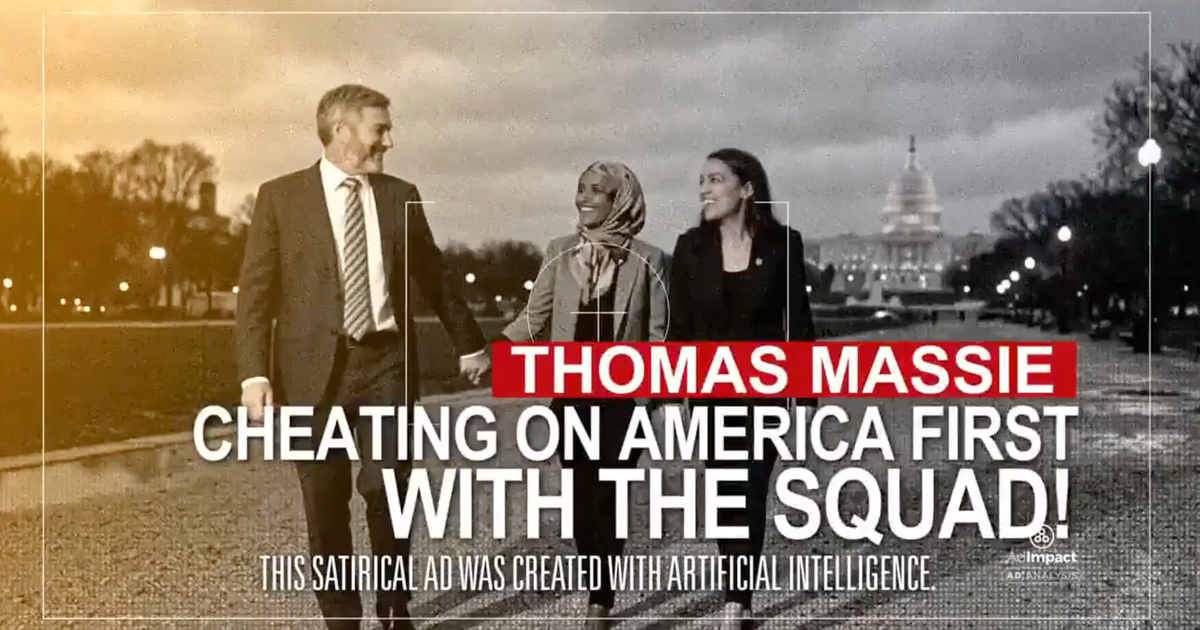

Super PACs in Kentucky's Republican primary used AI-generated deepfake videos to falsely depict Rep. Thomas Massie and Ed Gallrein in compromising situations, causing reputational harm and spreading misinformation. The ads, which have been criticized as defamatory and may violate state laws, highlight the malicious use of AI in political campaigns.[AI generated]