The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

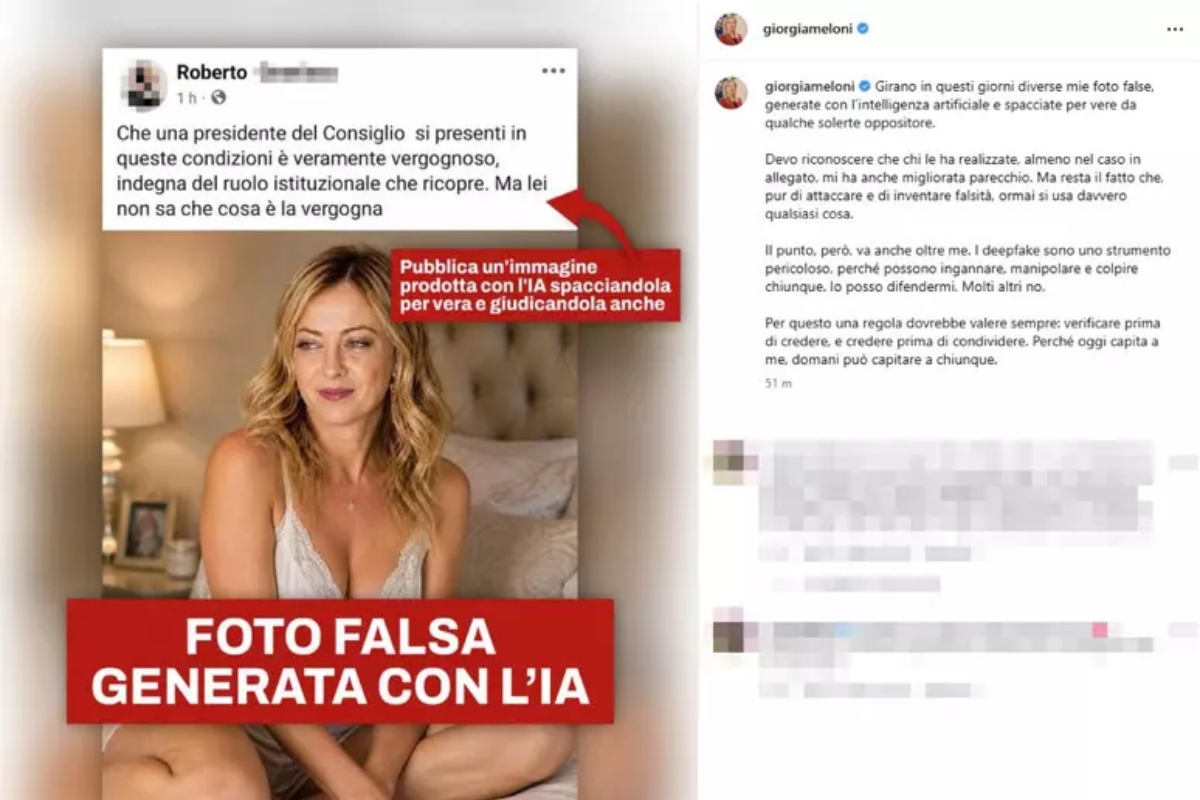

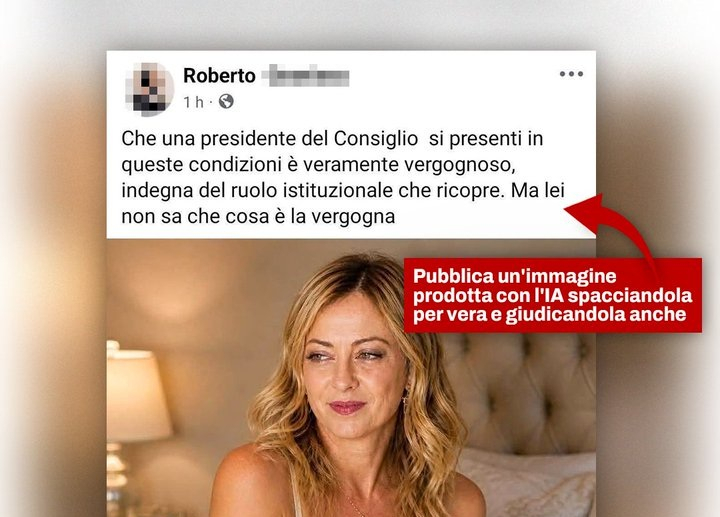

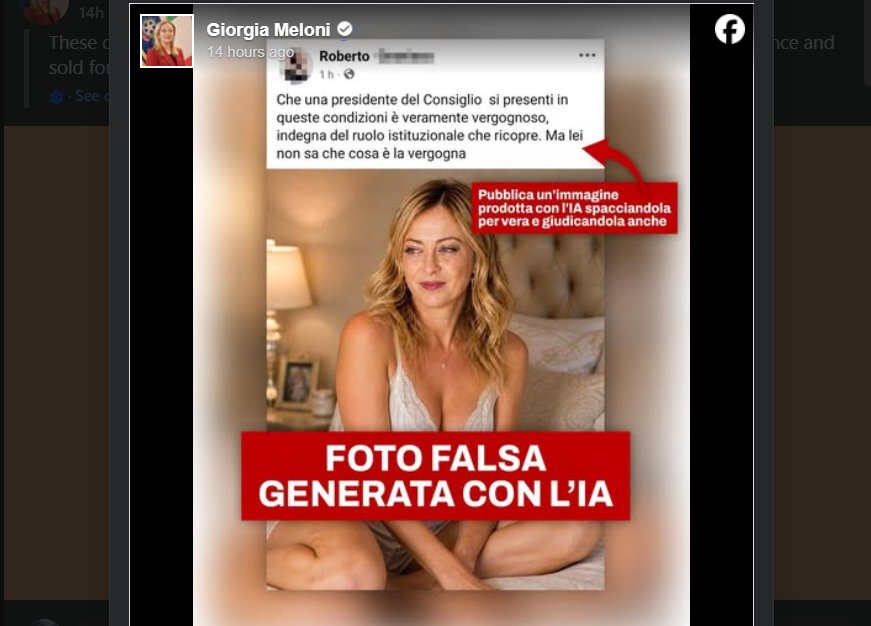

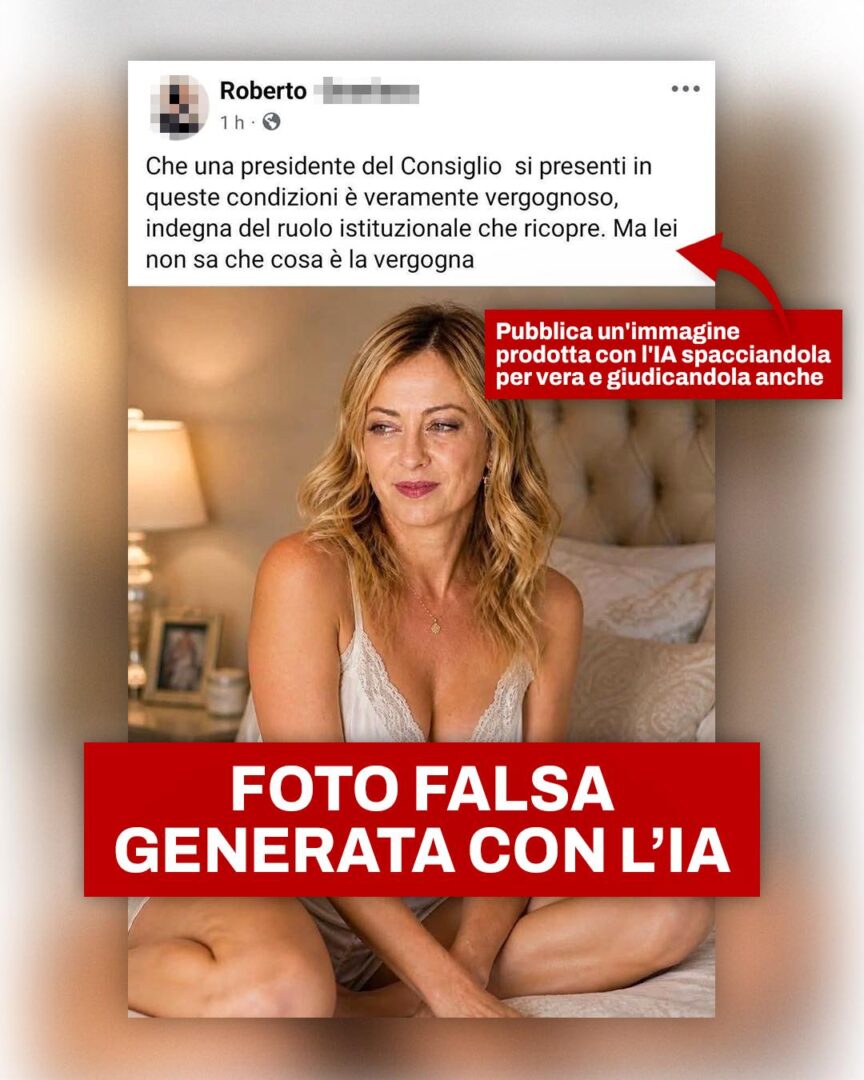

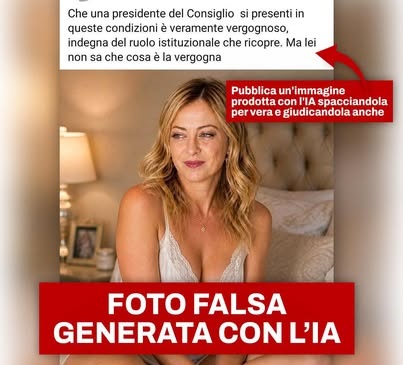

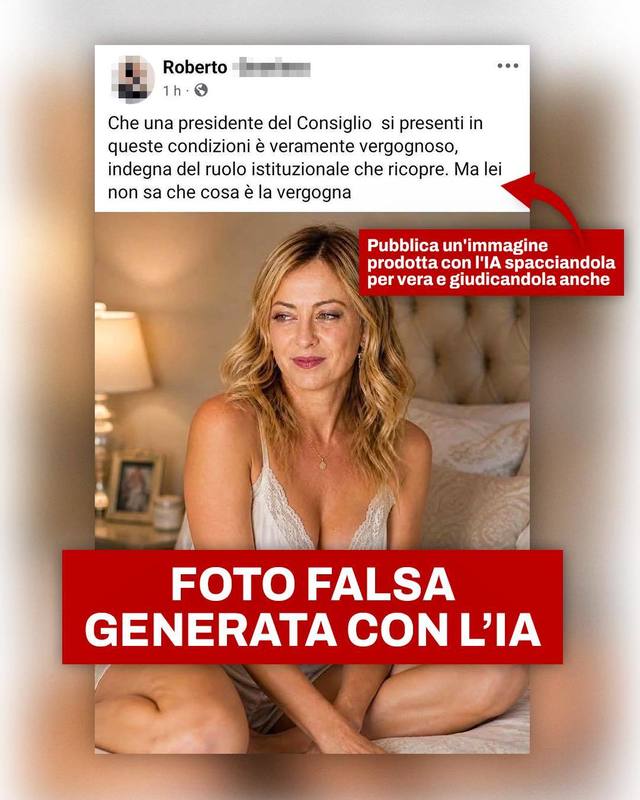

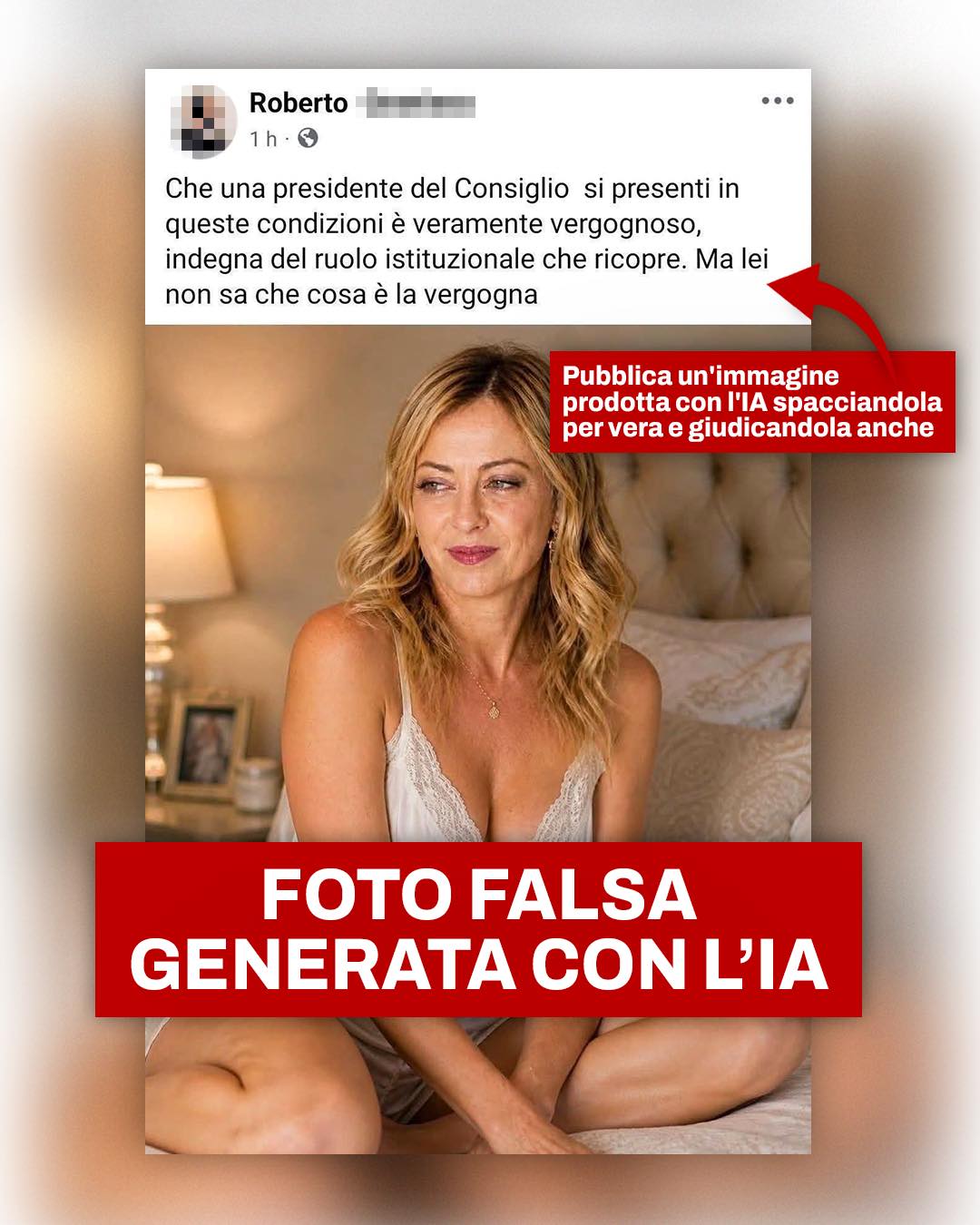

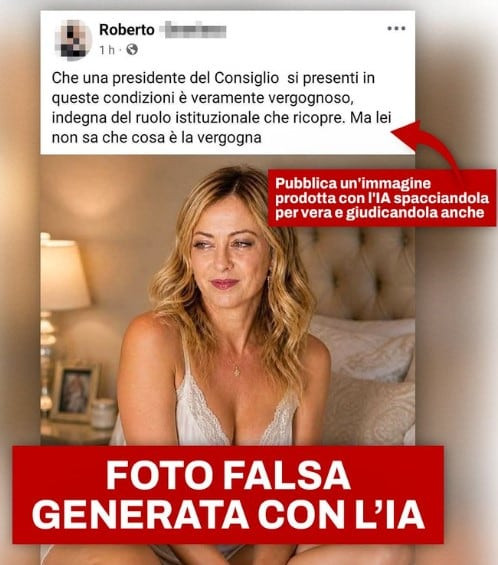

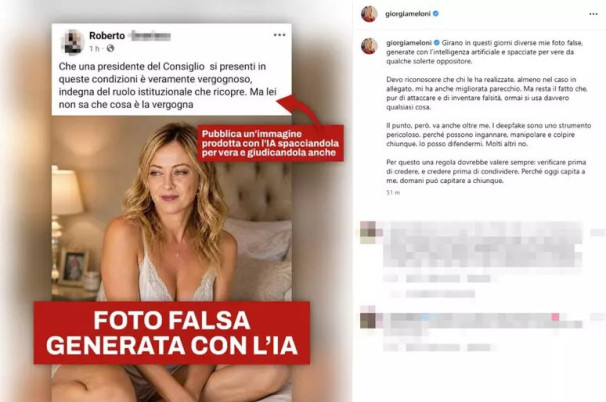

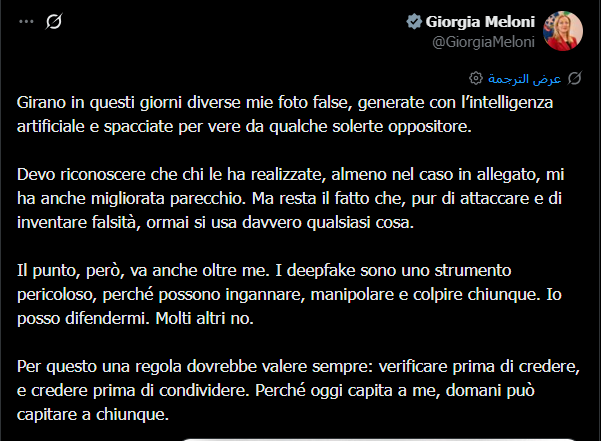

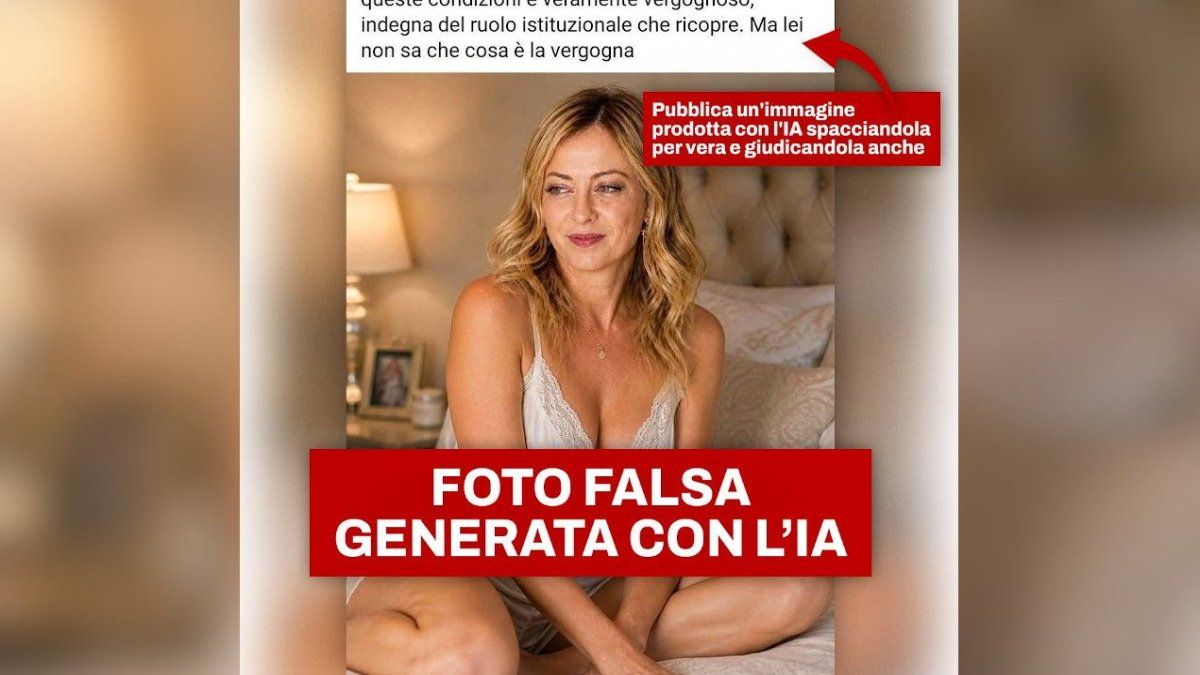

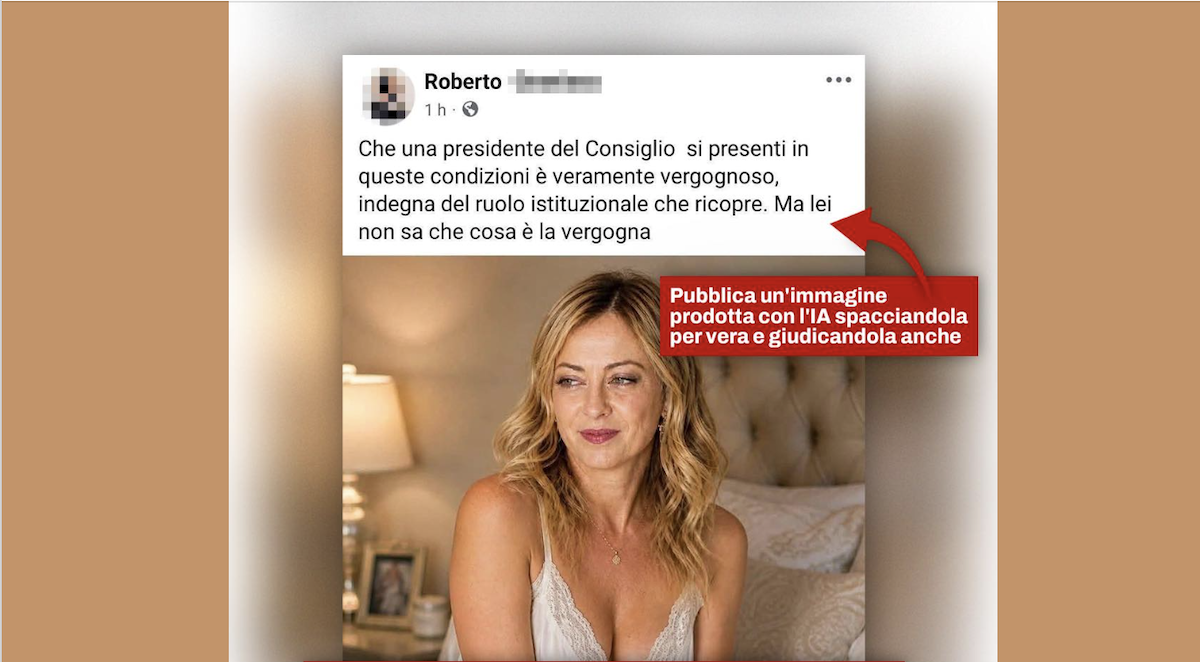

Italian Prime Minister Giorgia Meloni has been targeted by AI-generated deepfake images circulated online by political opponents. Meloni publicly warned about the dangers of such manipulated content, highlighting its potential to deceive, defame, and harm individuals, and urged the public to verify online information before sharing.[AI generated]