The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

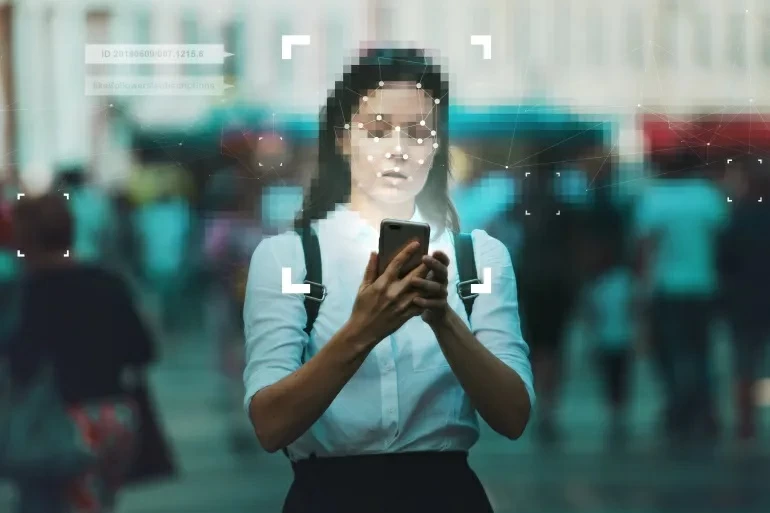

Disney has implemented AI-powered facial recognition at its California resorts, converting visitors' biometric features into unique digital values for identity verification. Disney says the data is deleted within 30 days, but critics warn of privacy risks, the normalization of surveillance, and potential misuse of biometric data, and the rollout has sparked debate over human rights and data security. [AI generated]