The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

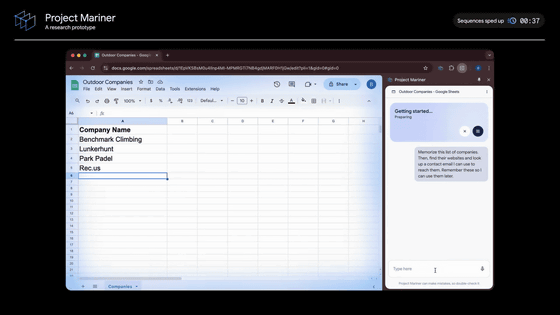

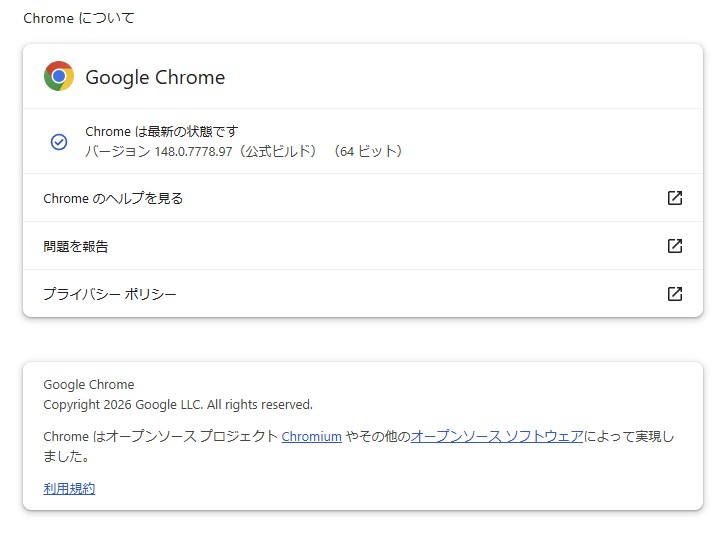

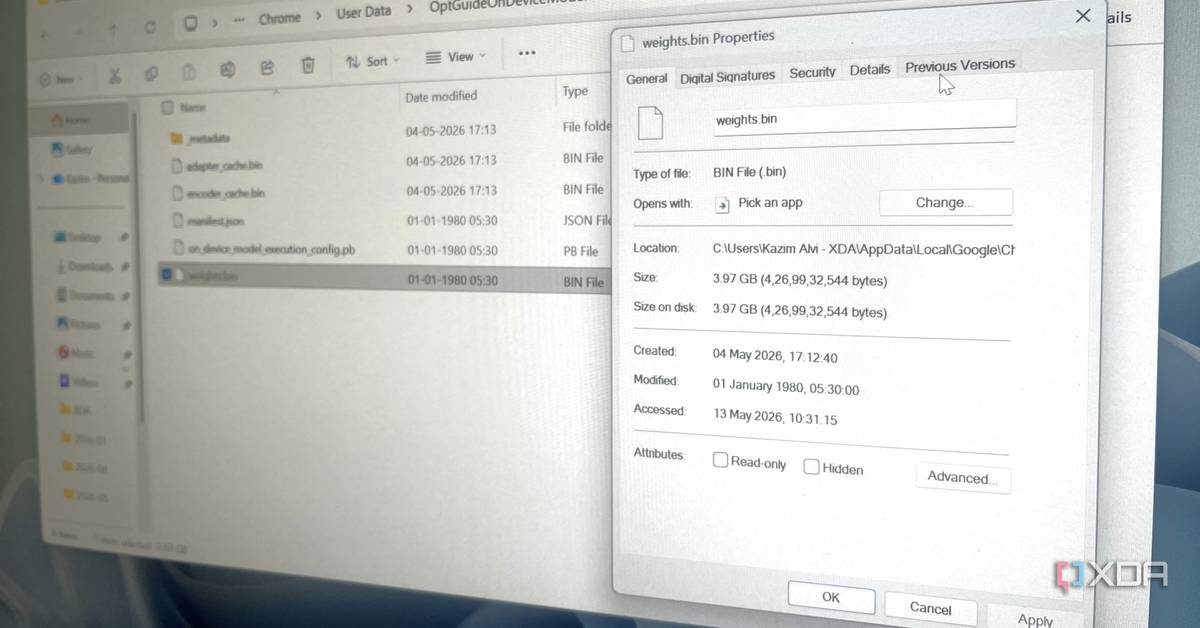

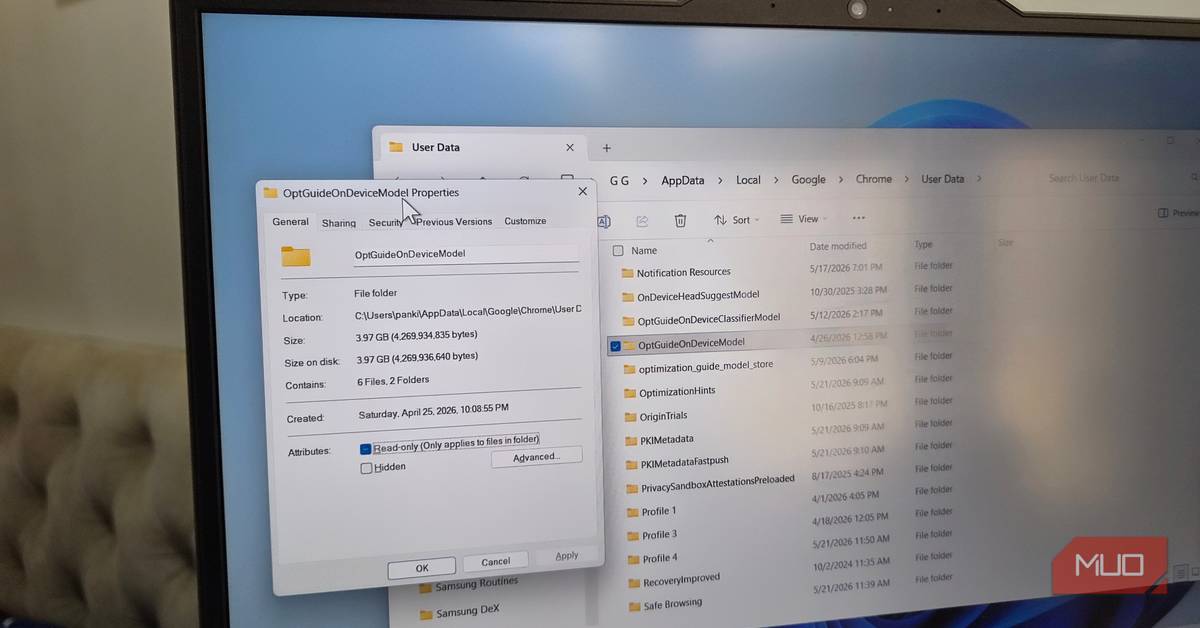

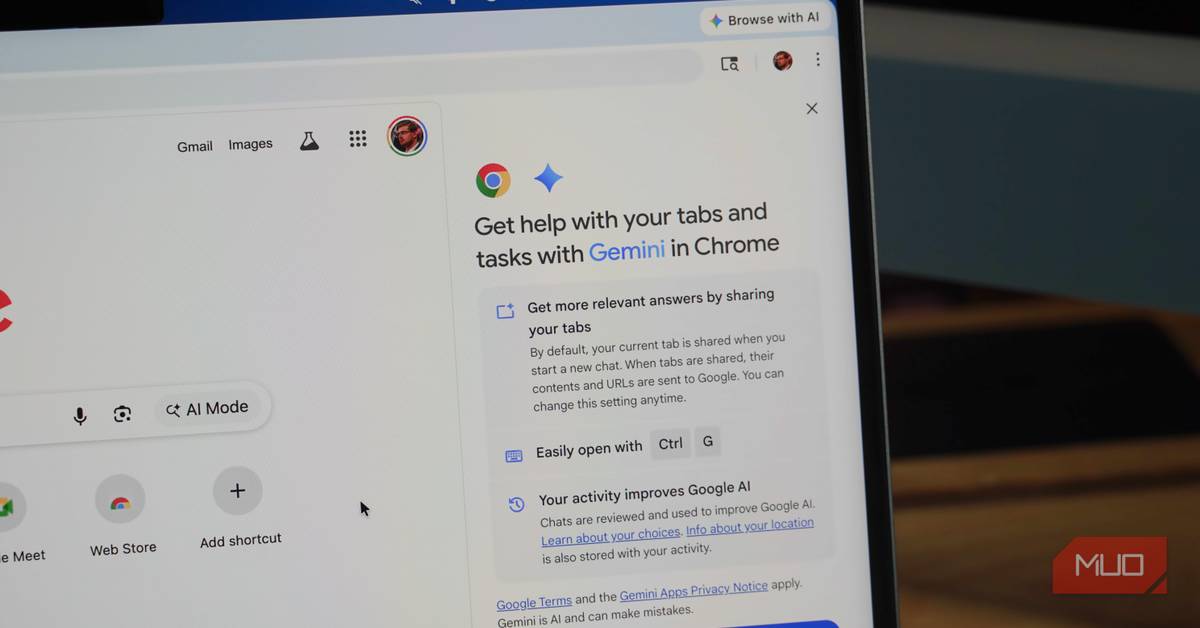

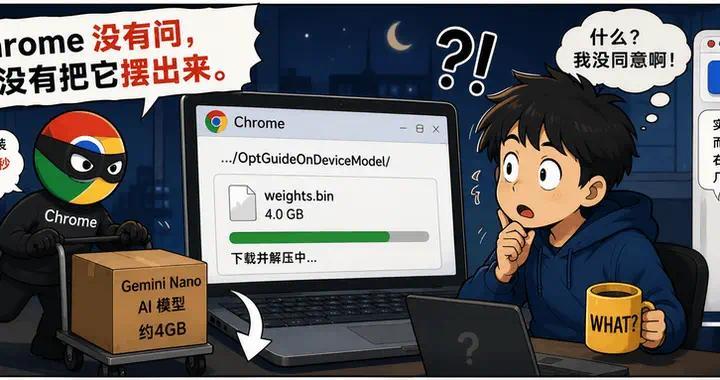

Google Chrome has been automatically downloading a 4 GB Gemini Nano AI model onto users' devices without explicit consent. The practice has raised concerns worldwide about privacy violations, user rights, and the environmental cost of such large-scale data transfers. Discovered by security researcher Alexander Hanff, it may breach privacy laws and has prompted widespread criticism.[AI generated]