The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

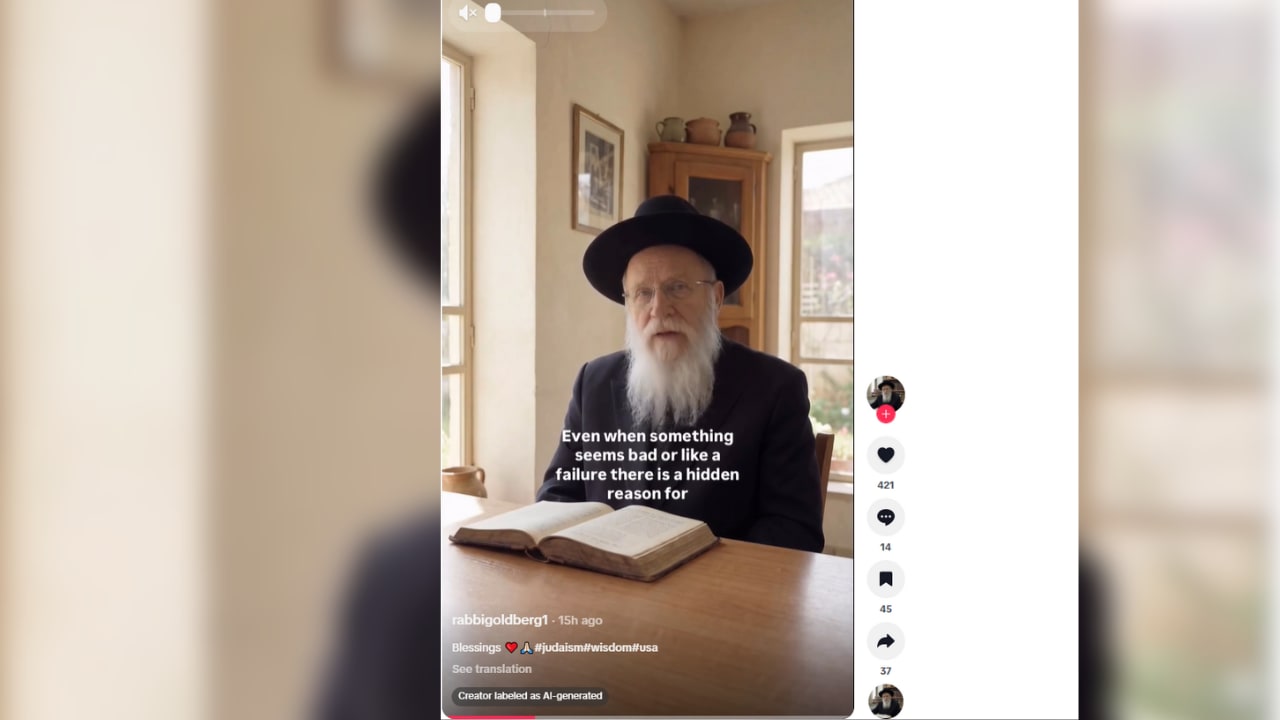

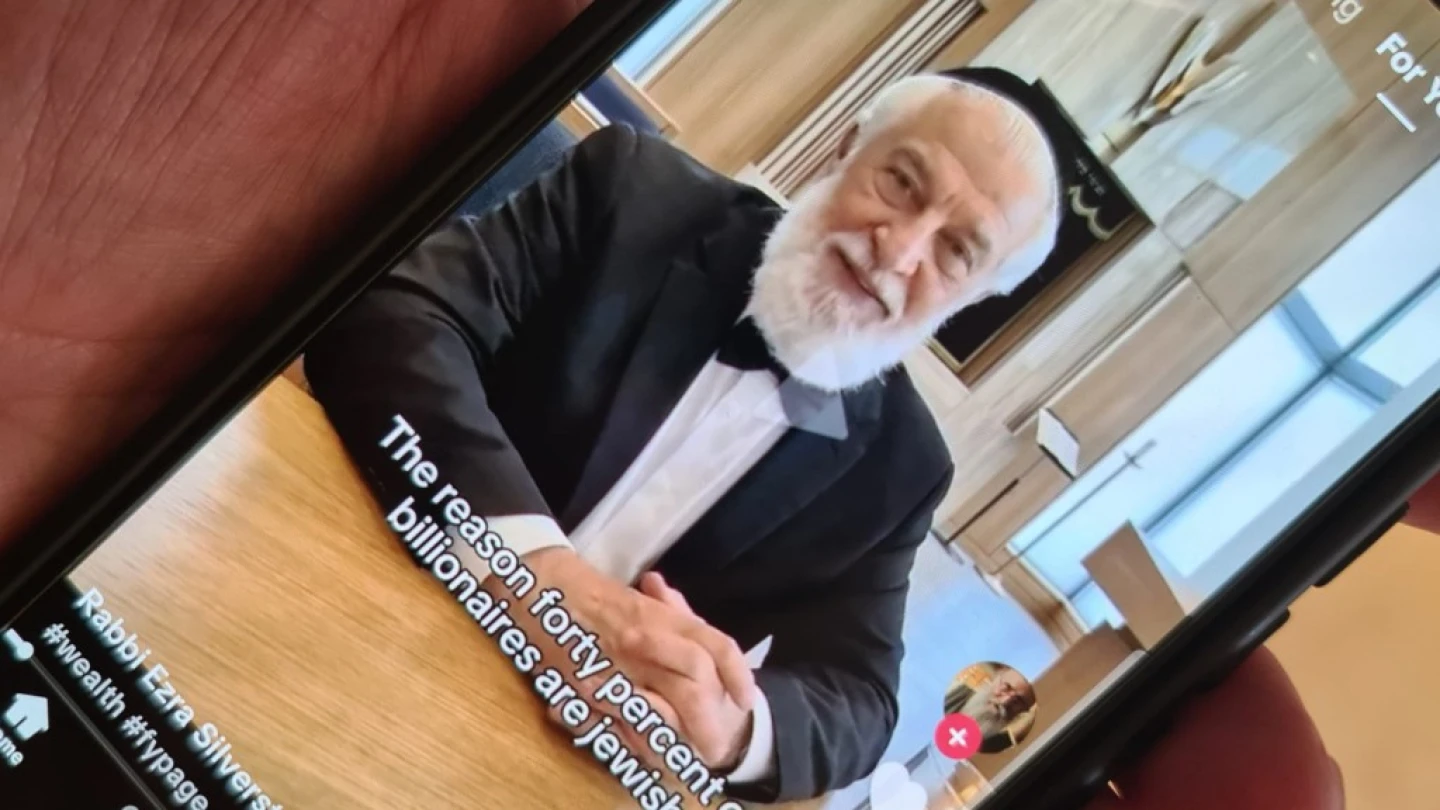

A coordinated network of at least 49 TikTok accounts used generative AI to create fake rabbis who spread antisemitic stereotypes and conspiracy theories. These AI-generated avatars amassed over 950,000 followers and 10 million likes, amplifying hate and misinformation by impersonating credible Jewish voices and deceiving audiences. [AI generated]