The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

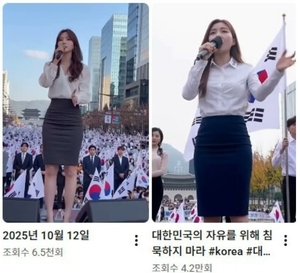

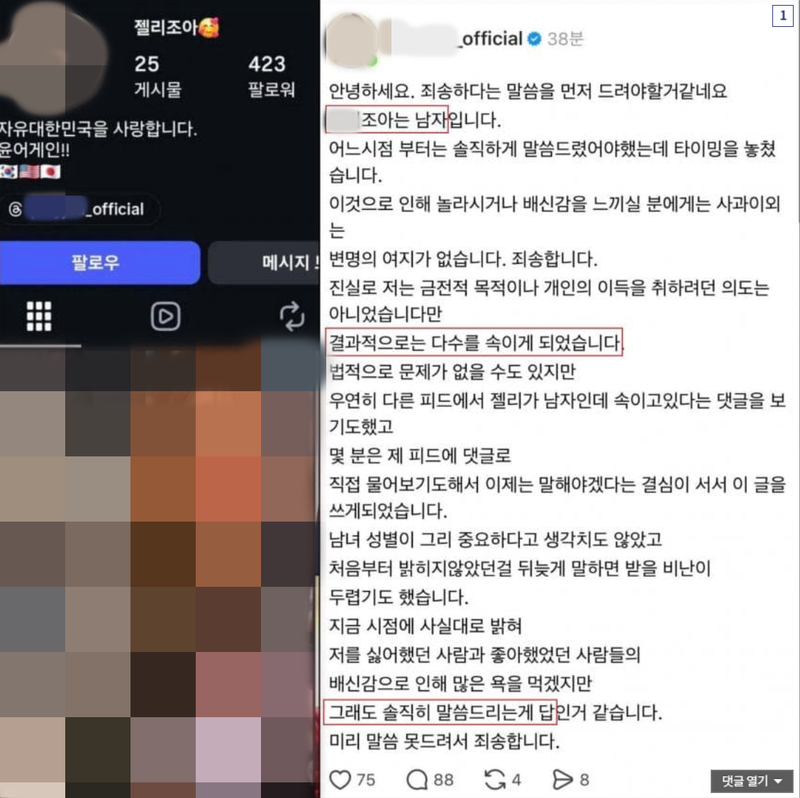

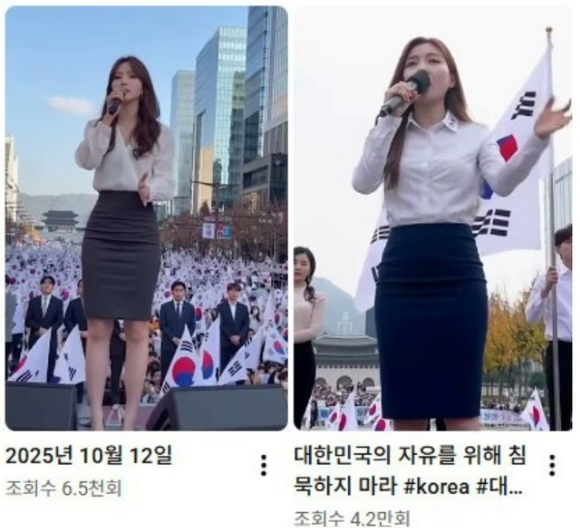

AI-generated images of young women were used to create fake social media accounts in South Korea that spread political messages and deceived users. The accounts, operated by men, used deepfake technology to manipulate public perception, leading to misinformation, violations of individual rights, and an erosion of social trust. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly states that the videos were created with AI technology to fabricate a person's appearance and speech, and were then widely shared on social media, deceiving many people. By spreading misinformation and undermining democratic discourse, this manipulation of public perception through AI-generated fake content directly harms communities. The event therefore qualifies as an AI Incident, because the harm caused by misuse of AI has already occurred. [AI generated]