The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

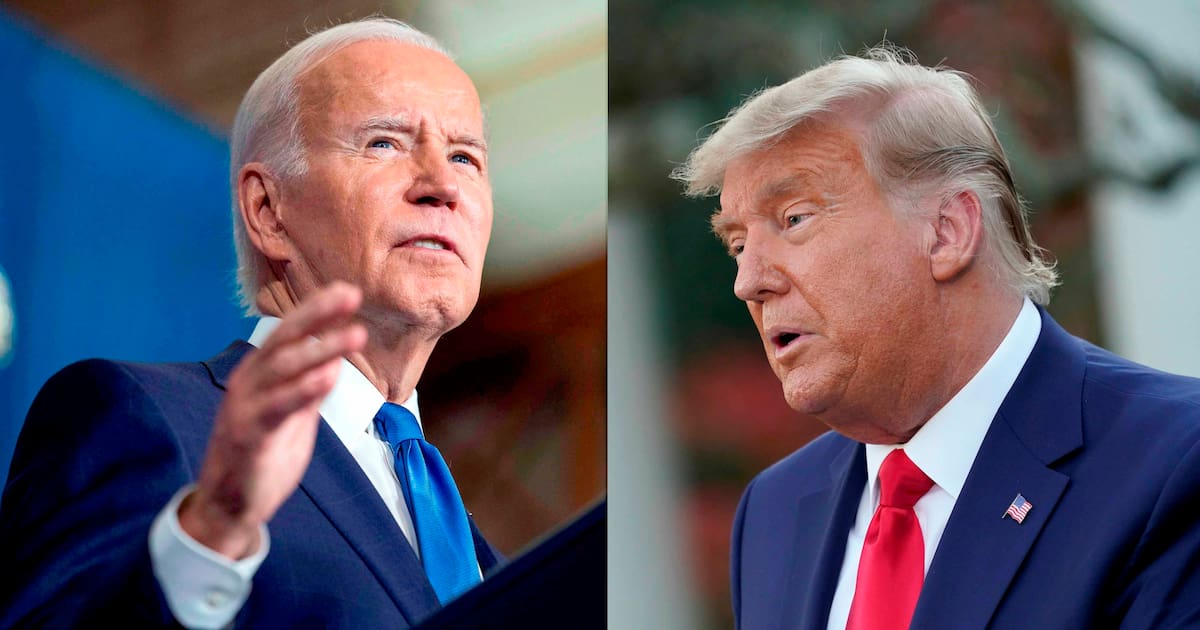

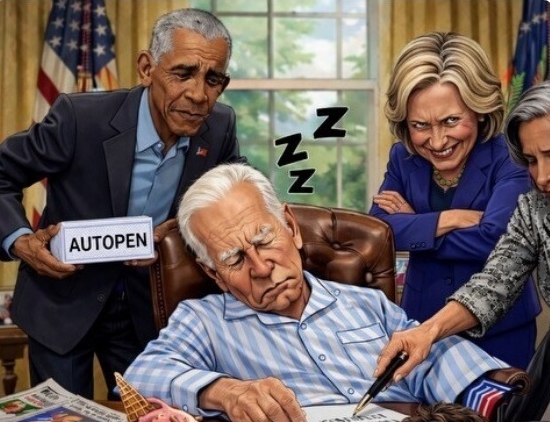

Donald Trump posted an AI-generated image on Truth Social depicting Joe Biden asleep in the Oval Office and his son Hunter using drugs, alongside other political figures. The manipulated image, widely shared online, raises concerns about AI-driven misinformation and reputational harm in U.S. political discourse. [AI generated]