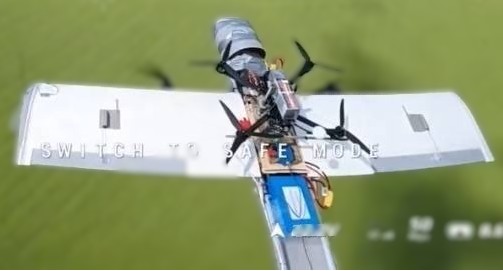

The article explicitly describes a system that autonomously detects and tracks drones and assists in targeting, which qualifies it as an AI system. Because the system is actively used in combat, the AI is involved in the use phase. The article reports no harm arising from malfunction or misuse of the system; it is used instead to prevent harm from enemy drones, and there is no indication of injury, rights violations, or other harm caused by the system itself. The event is therefore not an AI Incident. The article also does not focus on governance, policy, or research updates, so it is not Complementary Information. Although the system is already deployed and operational, its use in a military context with autonomous targeting capabilities could plausibly lead to harms such as injury or escalation. The event is therefore best classified as an AI Hazard.
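The order of checks in this reasoning can be sketched as a small decision function. This is only an illustrative sketch: the `EventFacts` fields, the `classify` function, and the fallback label `"Unrelated"` are hypothetical names introduced here, not part of any official classification tool, and the order of checks simply mirrors the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class EventFacts:
    """Hypothetical summary of what an article reports about an event."""
    involves_ai_system: bool
    harm_reported: bool                  # harm attributed to the AI system itself
    harm_plausible: bool                 # harm could plausibly follow from its use
    focuses_on_governance_or_research: bool


def classify(facts: EventFacts) -> str:
    """Apply the checks in the same order as the written rationale."""
    if not facts.involves_ai_system:
        return "Unrelated"               # assumed fallback label
    if facts.harm_reported:
        return "AI Incident"
    if facts.focuses_on_governance_or_research:
        return "Complementary Information"
    if facts.harm_plausible:
        return "AI Hazard"
    return "Unrelated"                   # assumed fallback label


# The drone-tracking article: an AI system in use, no reported harm,
# no governance or research focus, but plausible future harm.
drone_article = EventFacts(
    involves_ai_system=True,
    harm_reported=False,
    harm_plausible=True,
    focuses_on_governance_or_research=False,
)
print(classify(drone_article))  # prints "AI Hazard"
```

Under these assumptions, deployment status does not change the outcome: what separates an AI Incident from an AI Hazard is whether harm attributed to the system has been reported.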