The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

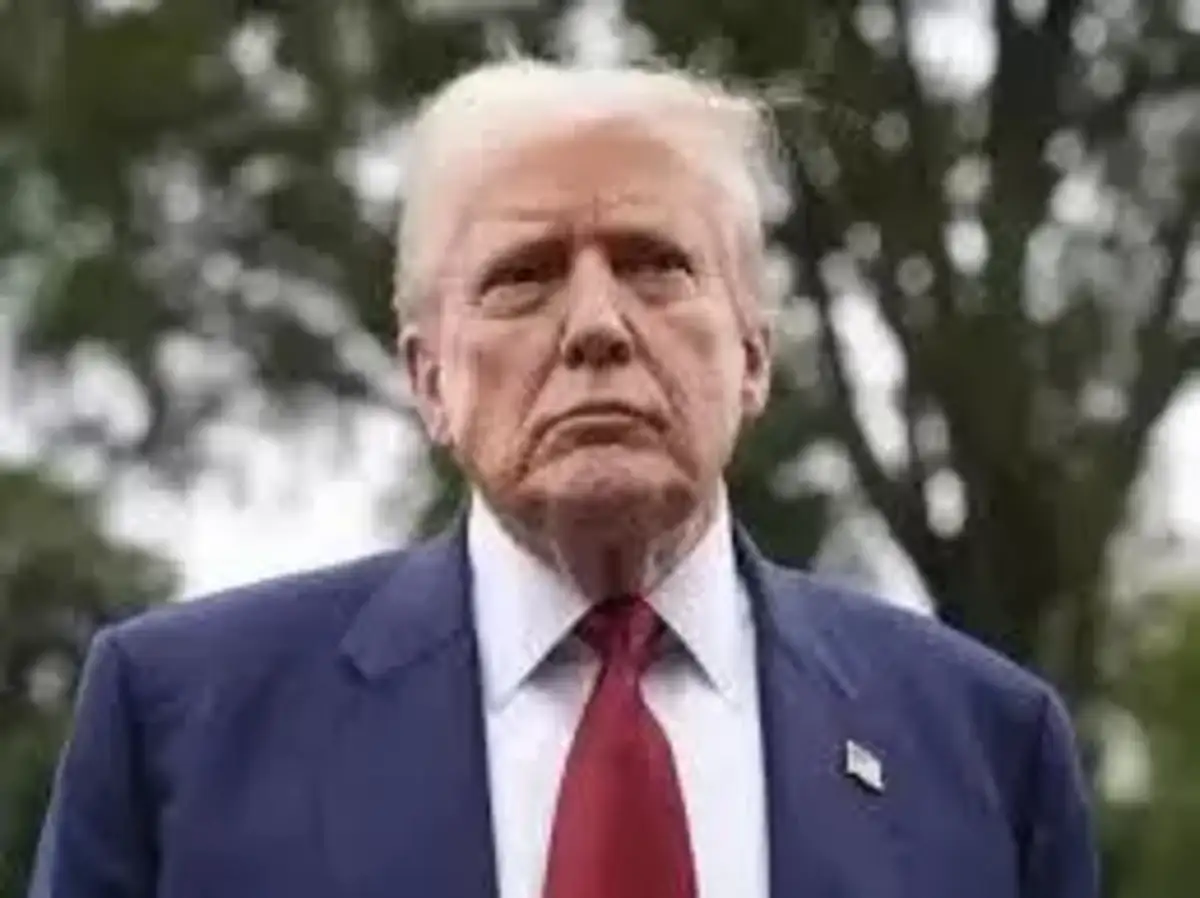

Hyper-realistic AI-generated avatars, posing as fervent Trump supporters, have flooded social media platforms with partisan political messaging and disinformation ahead of the US midterm elections. This use of AI manipulates public opinion and threatens the integrity of democratic processes by spreading deceptive content to influence voters.[AI generated]