The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

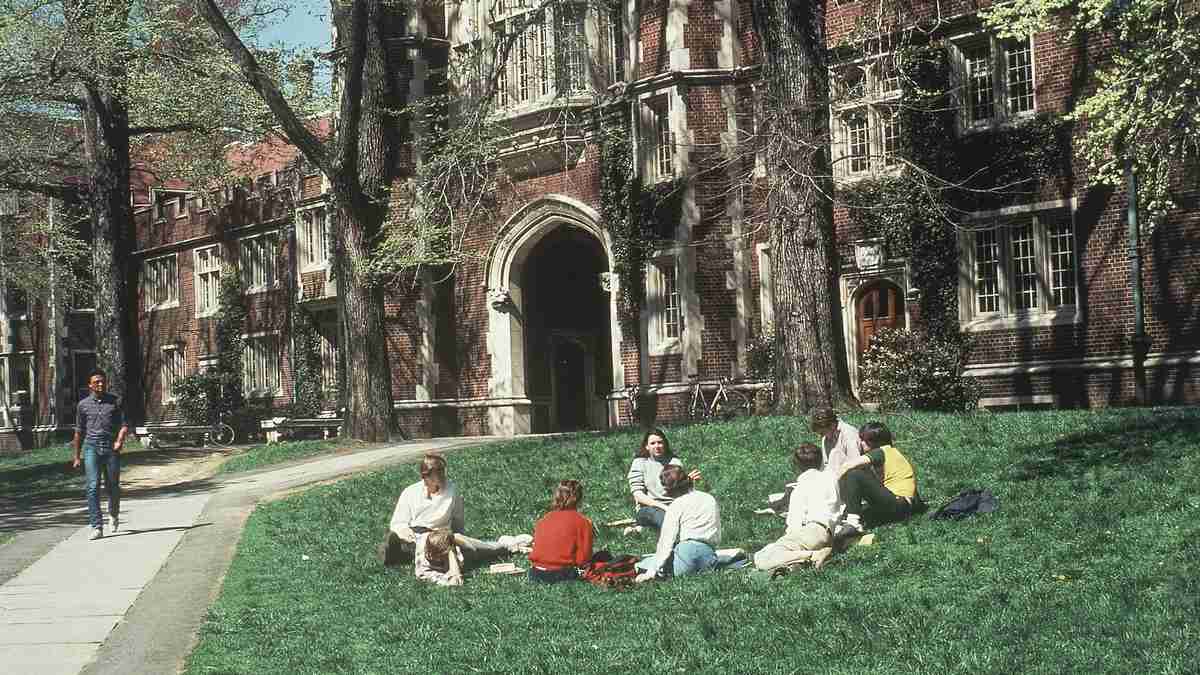

Princeton University has ended its 132-year tradition of unproctored exams due to widespread student cheating facilitated by generative AI tools like ChatGPT. With nearly 30% of students admitting to cheating, the university will now require proctored exams and implement detection software to restore academic integrity. [AI generated]