The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

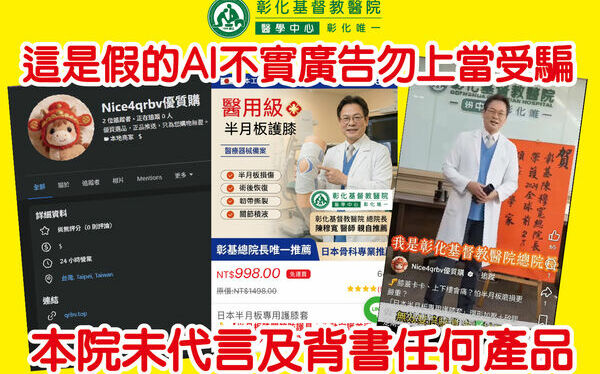

Fraudsters used AI deepfake technology to create convincing fake videos in which Changhua Christian Hospital director Chen Mu-Kuan appeared to endorse medical products. The deepfakes misled both staff and the public, exposing them to financial and health risks. The hospital is pursuing legal action to protect its reputation and public health.[AI generated]