The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

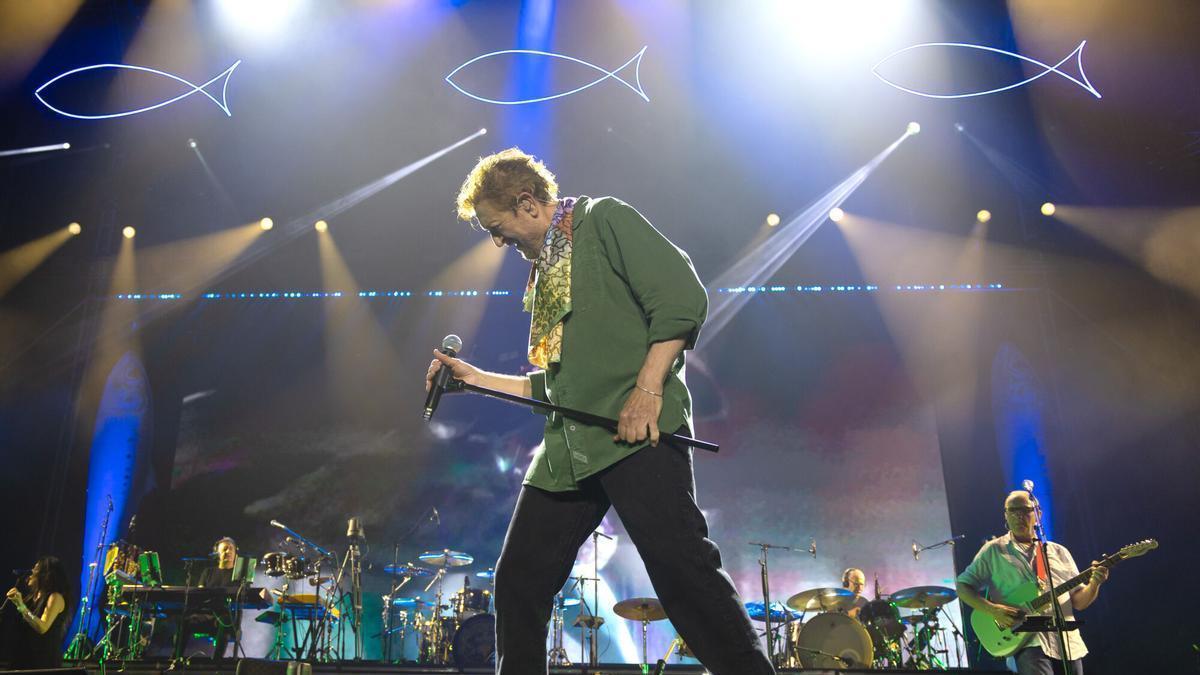

After Manolo García crowd-surfed at a Barcelona concert, AI-manipulated images falsely depicting him as injured circulated online, causing public concern and reputational harm. The artist condemned the unauthorized use of his image and the spread of misinformation, highlighting the social impact of AI-generated fake content. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions the use of AI to create manipulated images that falsely show the artist injured, which caused real emotional harm and public alarm. The AI system's outputs directly led to misinformation and distress, fulfilling the criteria for harm to communities and individuals. Since the harm has already occurred and is directly linked to the AI-generated content, this is an AI Incident rather than a hazard or complementary information. [AI generated]