The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

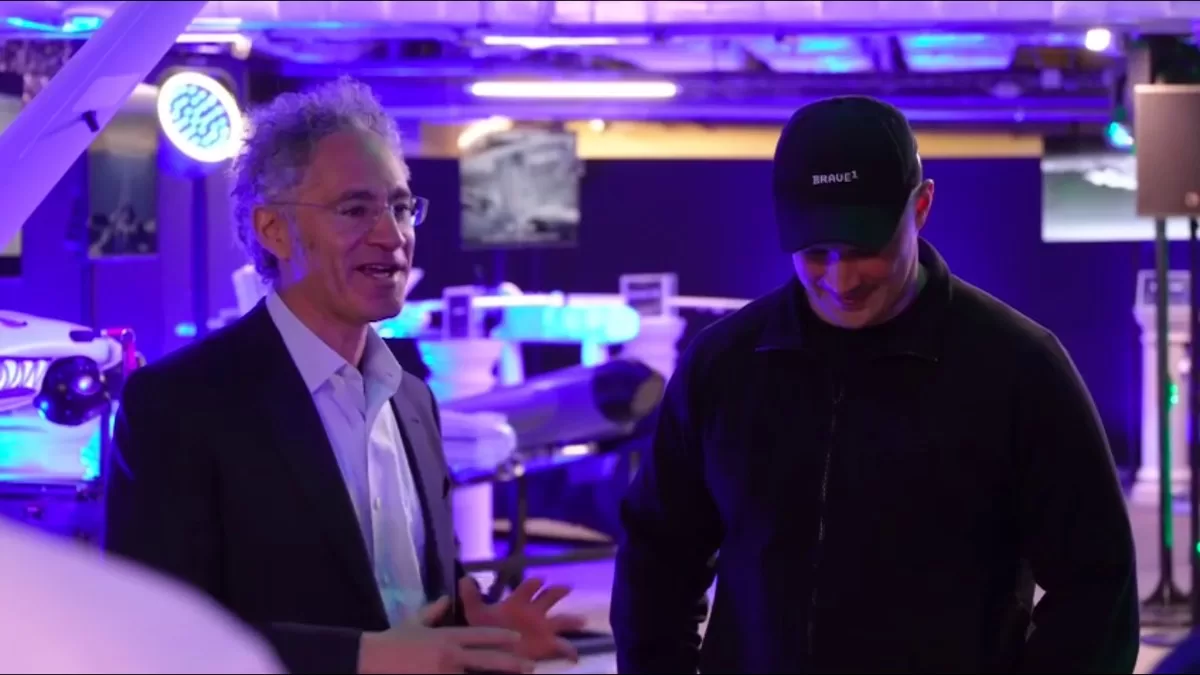

Ukrainian President Zelenskyy and Defense Minister Fedorov met with Palantir CEO Alex Karp in Kyiv to strengthen AI-driven military cooperation. The partnership includes projects such as Brave1 Dataroom, which draw on battlefield data to develop AI for intercepting drones and analyzing attacks. No AI-related harm or incidents were reported. [AI generated]