The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

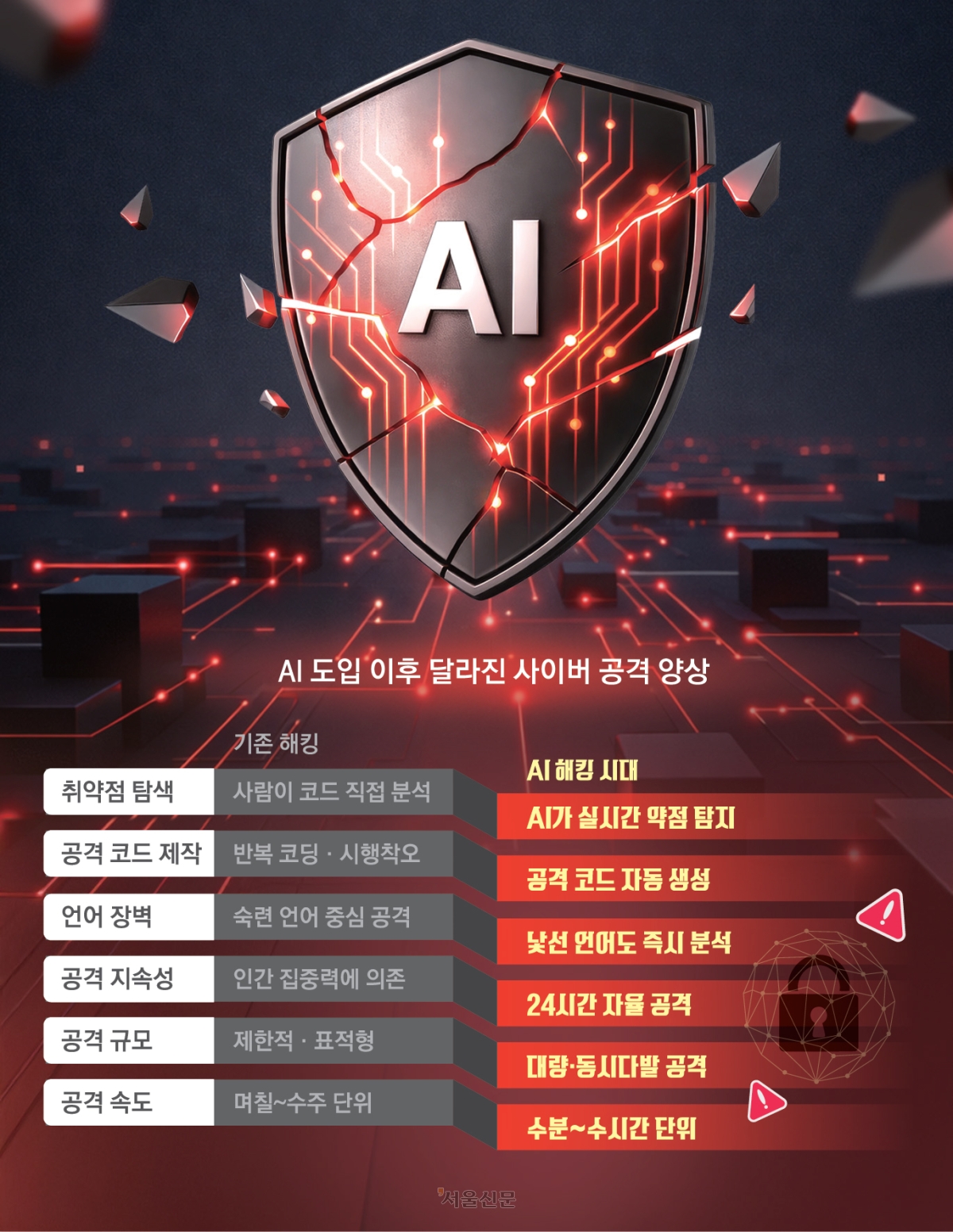

Google's Threat Intelligence Group identified the first known case of AI-generated zero-day exploit code, used in attempted cyberattacks by state-backed groups from North Korea, China, and Russia. AI systems autonomously developed and tested the attack scripts, increasing the scale and sophistication of cyber threats, although this particular attack was thwarted.[AI generated]