The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

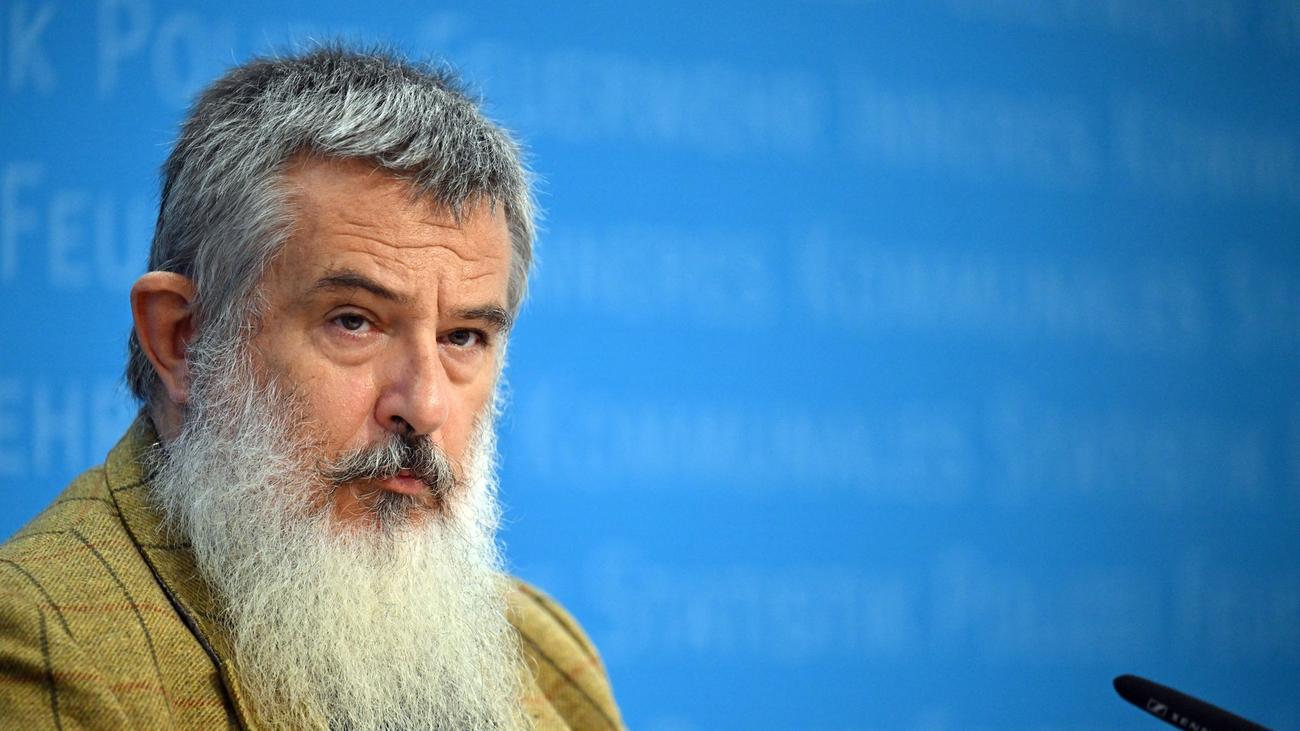

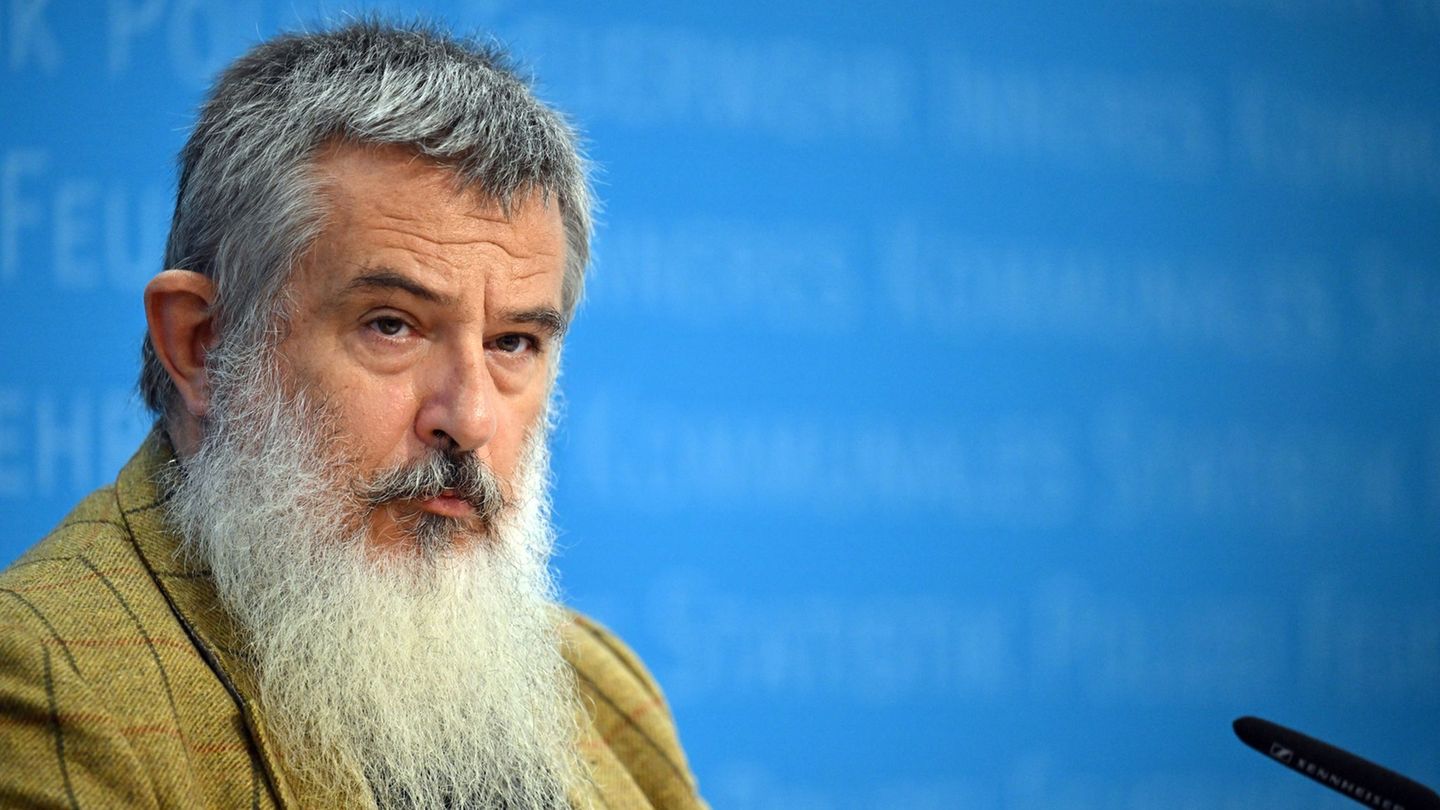

Security experts, including Stephan Kramer, President of the Thuringian Office for the Protection of the Constitution, warn that Anthropic's AI model 'Mythos' can autonomously identify and exploit software vulnerabilities, lowering the barrier to carrying out cyberattacks. Their concerns center on potential misuse by criminals or state actors, especially against critical infrastructure and financial institutions in Europe. [AI generated]