The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

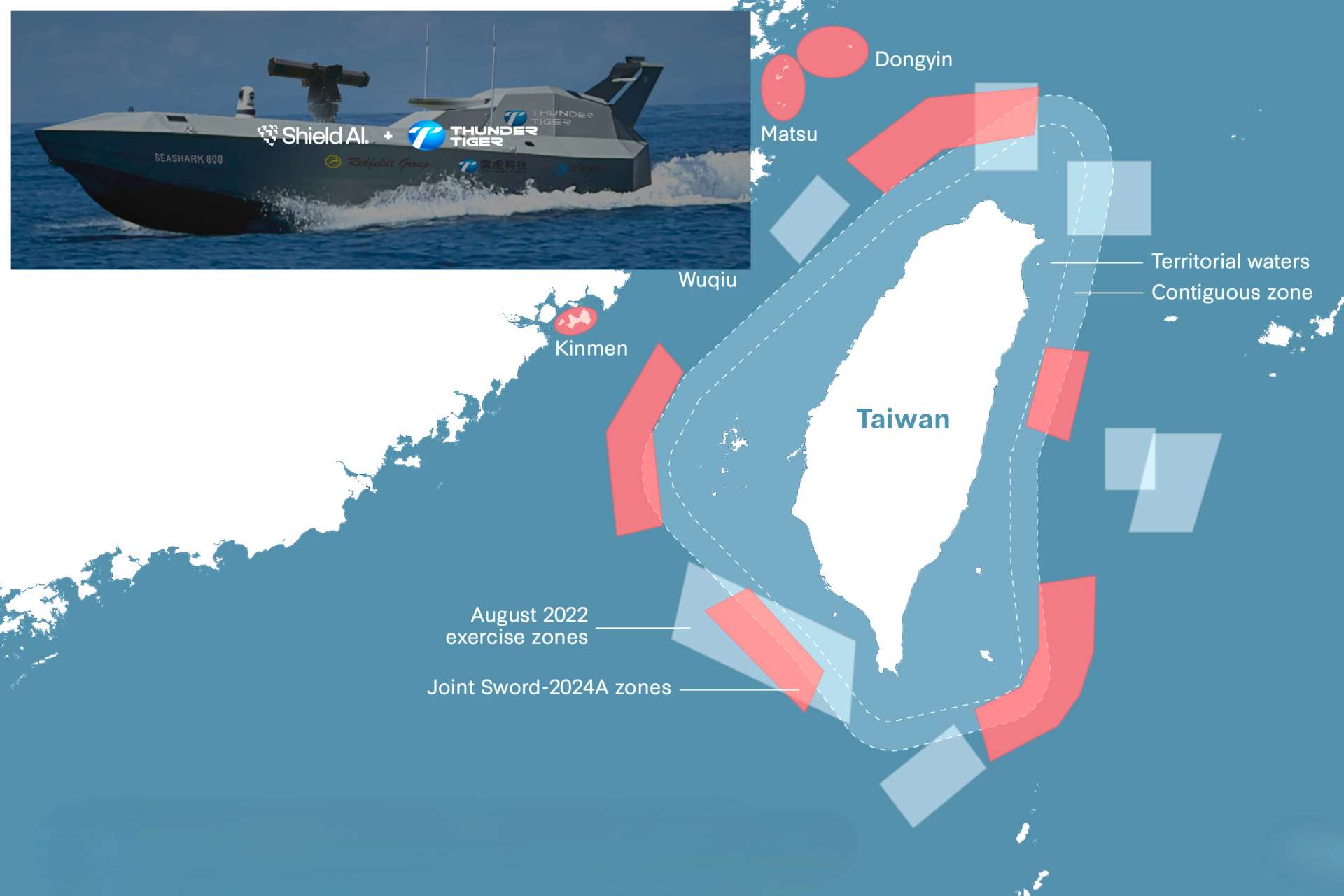

Shield AI and Taiwan's Thunder Tiger have signed an agreement to integrate Shield AI's Hivemind autonomous AI software into Thunder Tiger's unmanned maritime platforms. The collaboration aims to strengthen Taiwan's defense with autonomous, AI-driven systems capable of independent and coordinated military operations. It also raises potential future risks associated with the deployment of autonomous military AI. [AI generated]