The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

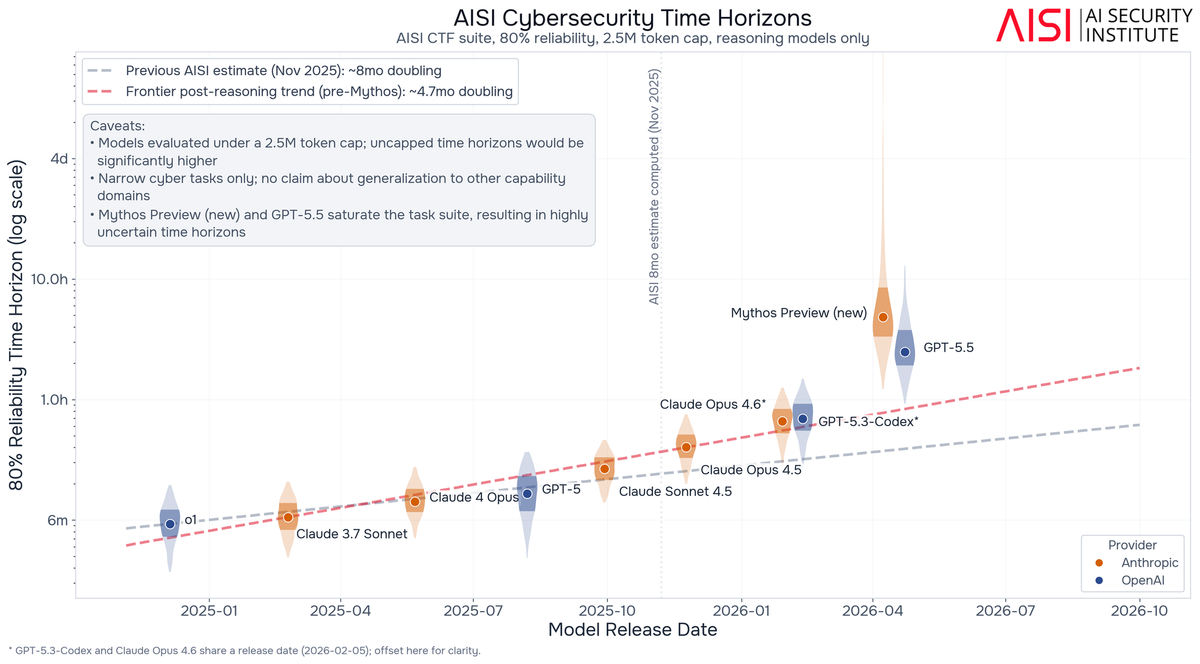

AI models such as Microsoft's MDASH, Anthropic's Mythos, and OpenAI's GPT-5.5 are rapidly improving at autonomously finding and exploiting software vulnerabilities. This has led to the discovery of new security flaws and has increased the risk of AI-enabled cyberattacks. Authorities and experts warn of urgent threats to critical infrastructure, especially in Europe. [AI generated]