The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

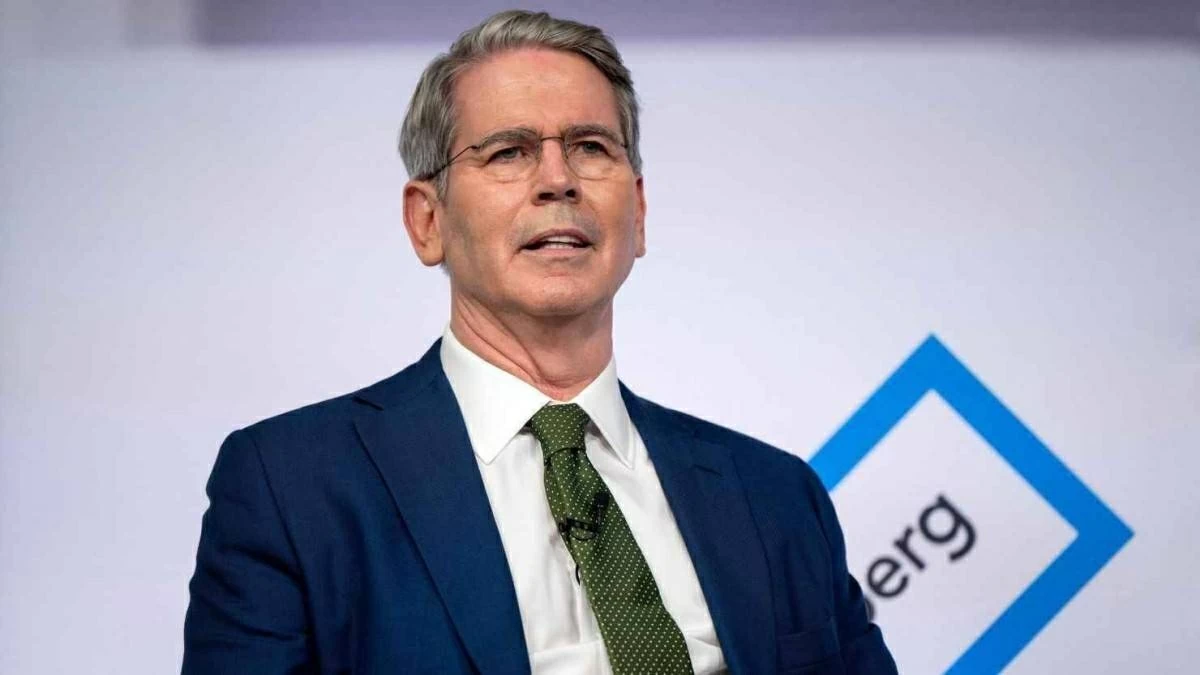

US Treasury Secretary Scott Bessent announced that the US and China are negotiating protocols to regulate AI use, with the aim of preventing its misuse in cyberattacks. Both countries share concerns about non-governmental actors gaining access to advanced AI models, but they stress that any rules should not stifle innovation. The talks took place during President Trump's visit to China. [AI generated]