The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

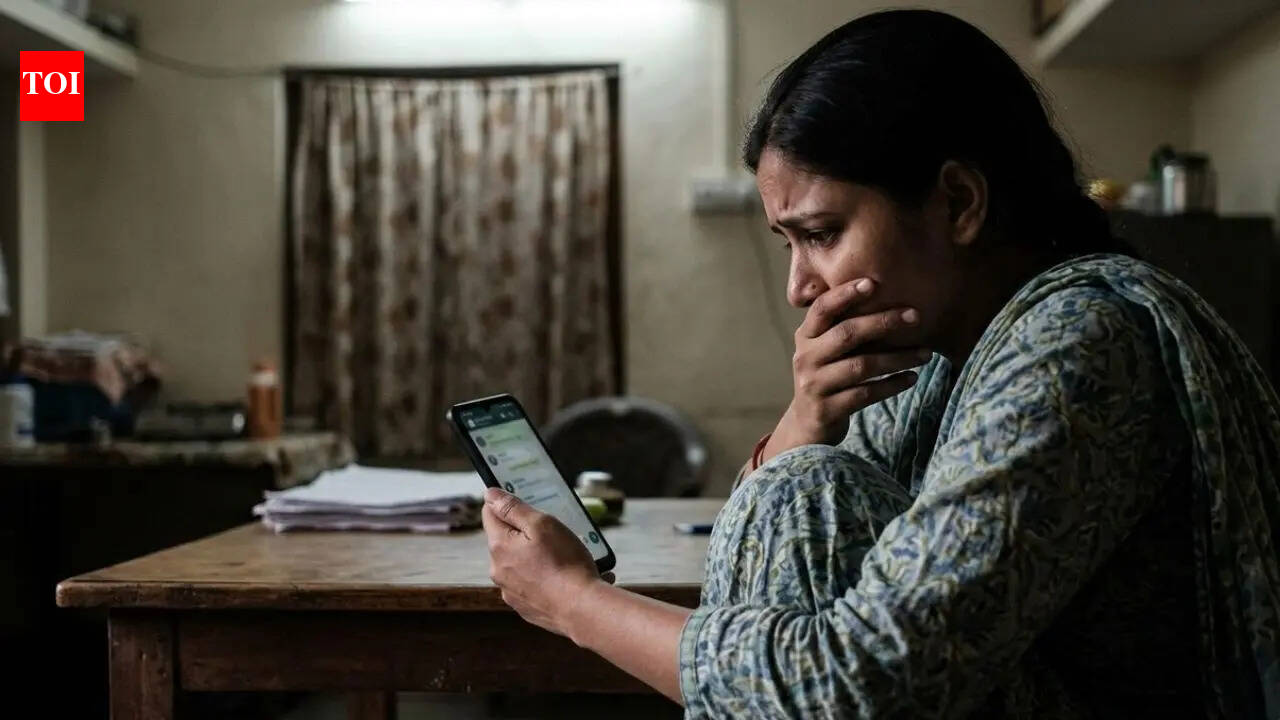

In Bhadohi, Uttar Pradesh, a cyber cafe operator and his brother used AI to create obscene images of a woman from her social media photos, then blackmailed her for money. The accused extorted Rs 50,000 and threatened further exposure. Police are investigating whether there are additional victims. [AI generated]