The 2021 AI Index: AI Industrialization and Future Challenges

Despite the COVID-19 pandemic, the AI industry saw major growth in 2020: more AI investment flowed to companies applying AI to cancer treatments and drug discovery; a new class of large-scale AI models emerged in the research community; computer vision systems continued to get cheaper and easier to deploy; universities offered more AI classes than ever before; and more than a quarter of the world's countries had published or announced plans to develop national AI strategies.

These are just some of the findings of the 2021 AI Index report, published by an interdisciplinary team at the Stanford Institute for Human-Centered Artificial Intelligence (HAI) and now available in the OECD's AI Policy Observatory.

The AI Index partners with organizations from industry, academia, and government to provide a thorough analysis of AI’s technical progress and its impact on national economies, education, government policies, the state of diversity among AI professionals, and more. The OECD and the AI Index are working together to ensure that data from the Index is uploaded to the AI Policy Observatory (OECD.AI), and to find ways to use the OECD’s expertise to develop better AI Index tools, such as the AI Vibrancy Index.

Report highlights: shaking up academia, deep fakes, trust, diversity and more

Here are some of the highlights from the 2021 report:

- China surpassed the United States in AI journal citations. Papers by Chinese-affiliated scholars received more citations in peer-reviewed journals than those by scholars from any other country, suggesting that China's AI research has grown in quality as well as quantity. However, over the last decade the United States has had consistently (and significantly) more cited AI conference papers than China.

- Synthetic media, colloquially known as deep fakes, are on the rise, with breakthroughs in the generation of synthetic text, imagery, and video demonstrating the progress of AI, but also highlighting the potential for unethical or dangerous use.

- Ethical challenges of AI applications have become a bigger focus for the AI community, with a significant increase in papers mentioning ethics and related keywords between 2015 and 2020.

- Diversity in AI remains low: in 2019, 45% of new AI PhD graduates who were U.S. residents were white and 22.4% were Asian, while 2.5% were African American and 3.2% were Hispanic. AI researchers are forming more affinity groups to improve diversity in the field, and these groups are growing significantly in membership and impact. Black in AI members had twice as many papers accepted at NeurIPS in 2019 as in 2017, and participation in workshops held by the Women in Machine Learning Group grew from under 200 participants in 2014 to more than 900 in 2020.

- Since Canada published a national AI strategy in 2017, other nations have followed, with more than 30 countries committing to national AI strategies through the end of 2020.

- More new AI PhDs are taking jobs in private industry than in academia, and professors continue to leave higher education for roles in corporations.

- Corporations have come to dominate the tools that AI researchers use, with corporate-backed software libraries (Google’s TensorFlow and Keras, and Facebook’s PyTorch) becoming the most popular frameworks on GitHub.

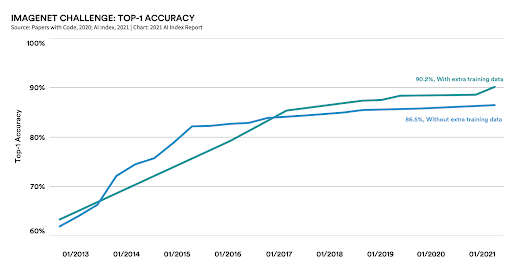

One of the most noticeable trends in the 2021 report is the industrialization of AI, which is most visible in computer vision. Here, years of research have led to a staggering rise in the capabilities of computer vision systems: as the graph above shows, computers have gone from correctly labeling the contents of an image 63.3% of the time in 2012 to 90.2% of the time in 2021.

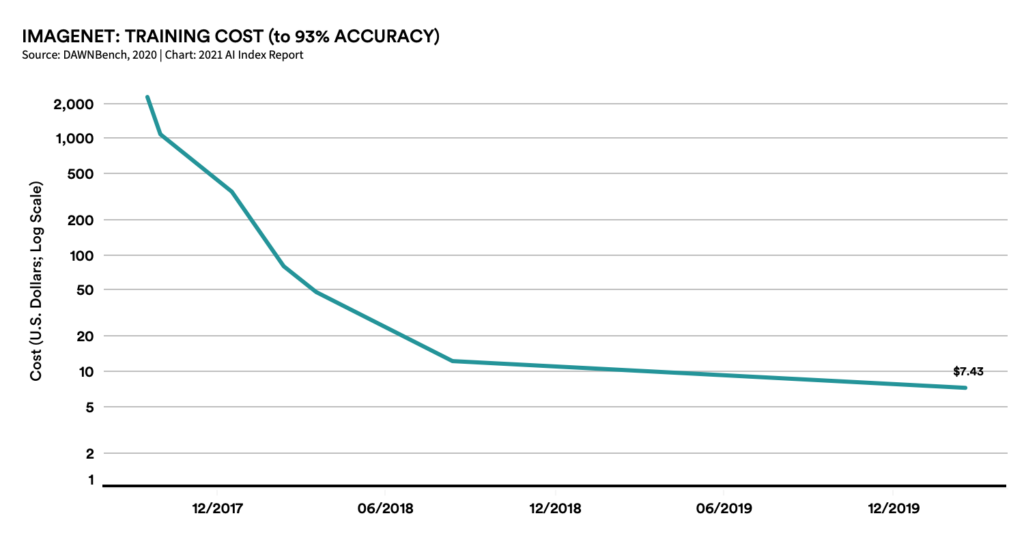

The second graph shows that these research improvements have been coupled with a dramatic fall in the cost of training computer vision systems. Together, these trends help explain why AI increasingly influences the world around us: many AI systems are becoming more capable and less expensive to train and deploy.

Open questions

The AI community faces serious open questions. We have formulated these questions by identifying the gaps between what we can and cannot measure. For example, computer vision systems have become better and cheaper, but they can still display biases. Our ability to test these systems for bias is far less developed than our ability to assess their technical performance against standard benchmarks or their cost of deployment. As AI becomes an increasingly influential part of the economy, we will need better ways to test and assess systems for their salient societal impacts.

Many more open questions relate to measuring AI system development and impact. How can we substantially increase the amount of work done to test and assess AI systems for societal impacts, such as their potential to magnify or minimize various harmful biases? How can we develop metrics that capture the security and robustness of systems, and build more test suites for evaluating them? Now that AI is deployed widely, we need a broad set of metrics that characterize these computational systems according to policy concerns, not just raw technical performance.

The AI Index is committed to working on this important problem, in partnership with a growing range of organizations, including the OECD.