AI legal cases are increasing: how can we prepare?

Artificial intelligence (AI) has enormous potential to boost the global economy by more than 15 trillion USD. Additionally, it can advance social good by helping to combat climate change and biodiversity loss, reduce human trafficking, improve healthcare, and support achievement of the UN Sustainable Development Goals by 2030. AI can also strengthen the national security of the United States and other countries.

With this tremendous promise, however, comes the responsibility to mitigate AI’s potential harms and risks. One risk is the spread of violent and other troublesome content created by foundation or generative AI. This risk is highlighted by the expansive capabilities of ChatGPT and by Stable Diffusion’s open-source project, which has sparked Congressional and other concerns. While these AI applications have beneficial uses, if not managed properly, they can displace many workers and upend intellectual property protection, in addition to producing discriminatory and other harmful content.

Foundation or generative AI is just one example of the risks and responsibilities that AI technology presents. Responsibilities extend broadly to preventing over-surveillance, discrimination, and infringement upon fundamental rights. The Blueprint for an AI Bill of Rights in the United States, Canadian and European Union legal proposals, the Council of Europe, UNESCO, and many others all spotlight these responsibilities.

Policy makers worldwide are considering a range of approaches to operationalize the OECD AI Principles, designed to help unlock AI’s potential while mitigating harms and risks. For example, the European Union is working on comprehensive AI regulations with its draft EU AI Act. The United States is looking to manage risks with an AI Risk Management Framework (AI RMF) and by applying longstanding civil rights and consumer protection laws, along with other laws and policies.

AI enforcement challenges and solutions

While the OECD’s democratically aligned jurisdictions may choose different AI regulatory pathways, they converge on the need for appropriate enforcement. This is reflected in the OECD AI Principles that underscore the need for accountability. This makes sense: countries will never achieve their trustworthy AI goals if those harmed by AI applications do not have appropriate redress mechanisms.

Any means for enforcement, however, should be consistent with other OECD AI Principles, such as promoting inclusive growth, sustainable development, and well-being. And this will require investment in AI research and development.

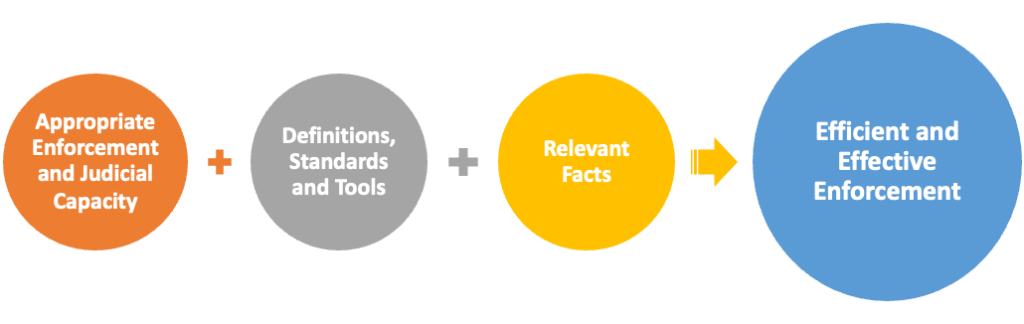

A major challenge lies in making these OECD AI Principles work together: how to foster AI enforcement so it appropriately redresses harms but does not unnecessarily hinder responsible innovation. To help respond to this challenge, AI laws and policies must factor in all OECD AI Principles. Furthermore, it is equally important to (1) build enforcement and judicial capacity for AI cases, (2) develop common AI definitions, standards, and related tools, and (3) ensure that individual AI enforcement cases appropriately consider the relevant facts.

Enforcement and judicial capacity

The mounting number of cases involving AI underscores the urgency of developing appropriate enforcement and judicial capacity. Adding to this imperative, the rise in AI cases is likely to accelerate.

In the United States, government agencies are expanding enforcement and seeking to build upon the AI Bill of Rights. In the European Union, the proposed AI Act, along with the draft AI Liability Directive and the revised Product Liability Directive, should make it easier for aggrieved parties to bring cases and obtain remedies.

Support for enforcement

As enforcement expands, the question becomes how best to build more capacity. The current AI enforcement landscape sheds important light on the answers. It reveals that a variety of complex cases already exist. These cases span several sectors and applications, such as facial recognition technology, fair housing, lending, employment, healthcare, surveillance, and criminal justice. Remedies have included fines and injunctive-style relief. In some cases, such as with Clearview AI, similar actions have been brought in different jurisdictions.

Collectively, the cases have presented many challenging issues involving consumer protection, privacy, discrimination, civil rights, intellectual property, due process, equal protection, freedom of speech, transparency, product liability, and other legal matters. In one novel case, the US Supreme Court will decide whether Section 230 of the Communications Act immunizes Google from liability for harms caused by third-party ISIS videos displayed by its algorithms.

This expanding landscape demonstrates a need for AI enforcement capacity with sufficient resources and broad expertise to make well-informed decisions about which cases to bring and to pursue such cases efficiently and effectively. This also includes seeking remedies tailored to redress the relevant harms.

Education is key for AI in the judiciary

The judiciary must have the necessary training and support to manage AI cases and the increasing use of AI in the court system. The Massive Open Online Course (MOOC) on AI and the Rule of Law is just one example of judicial training. This widely available course, produced by several stakeholders, explains the risks and opportunities presented by AI applications, including their impact on the justice system. Academia and others have created educational programmes for the judiciary too.

To be effective, enforcement needs common AI definitions and tools

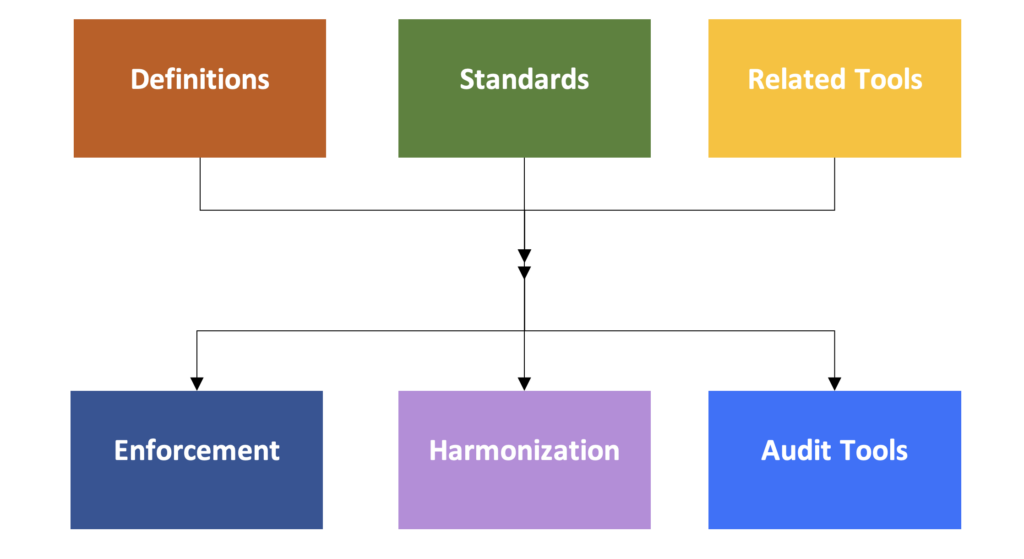

Whether cases involve applying well-established laws to AI, such as the United States’ longstanding anti-discrimination laws, or interpreting new laws, such as the EU AI Act, once enacted, enforcement will be more effective if there are common AI definitions, standards, and related tools. Importantly, this will better position stakeholders to assess compliance more swiftly, accurately, and transparently. Equally critical, these tools should improve alignment between AI laws and policies on the one hand and engineering and science on the other.

Context matters for tools

The draft EU AI Act and other existing and proposed laws illustrate this need. For example, the draft EU AI Act would impose data governance requirements on “high-risk” AI applications. It also emphasizes that these AI applications require high-quality data sets that are “sufficiently relevant, representative and free of errors and complete in view of the intended purpose of the system.” Common AI definitions, standards, and other tools can help enable fair and effective enforcement of this type of requirement by giving the legal language more meaning.

Turning to the standards and tools, the AI Bill of Rights emphasizes that they should be crafted so that data quality is assessed factoring in the relevant use case. In other words, the challenge is determining how to assess whether the data is fit or suitable for the AI application’s intended purpose. The draft AI RMF also recognizes the importance of considering risk management in the relevant context.
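
To make the idea of use-case-dependent data quality more concrete, the following minimal Python sketch shows how the same data set could pass or fail depending on the thresholds set for its intended purpose. It is an illustration under stated assumptions, not a method drawn from the AI Bill of Rights, the draft EU AI Act, or the AI RMF: the function names, the two metrics (the share of incomplete records, and the gap between group shares in the data and in a reference population), and all threshold values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class QualityThresholds:
    """Hypothetical per-use-case limits; values are illustrative only."""
    max_missing_rate: float  # allowed share of records missing a required field
    max_share_gap: float     # allowed gap between data and reference group shares


def missing_rate(records, required_fields):
    """Fraction of records with at least one required field absent or None."""
    if not records:
        return 1.0
    incomplete = sum(
        1 for r in records if any(r.get(f) is None for f in required_fields)
    )
    return incomplete / len(records)


def largest_share_gap(records, group_field, reference_shares):
    """Largest absolute gap between a group's share in the data and its
    share in a reference population (both expressed as fractions)."""
    if not records:
        return 1.0
    counts = {}
    for r in records:
        group = r.get(group_field)
        counts[group] = counts.get(group, 0) + 1
    total = len(records)
    return max(
        abs(counts.get(group, 0) / total - share)
        for group, share in reference_shares.items()
    )


def assess(records, required_fields, group_field, reference_shares, thresholds):
    """Report the measured values and whether they meet the given thresholds."""
    miss = missing_rate(records, required_fields)
    gap = largest_share_gap(records, group_field, reference_shares)
    return {
        "missing_rate": miss,
        "largest_share_gap": gap,
        "complete_enough": miss <= thresholds.max_missing_rate,
        "representative_enough": gap <= thresholds.max_share_gap,
    }
```

Calling `assess` on the same records with a strict `QualityThresholds` (for example, for a lending decision) and a looser one (for example, for exploratory research) can yield different outcomes, which is the point of assessing data quality in context. Real assessments would need metrics and limits agreed through the standards processes discussed here.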

Similarly, data governance tools are needed to help measure compliance with emerging regulations and to aid with assessing liability and enforcement more broadly. These tools should enable tracking and reporting of relevant data characteristics in a way that complies with privacy and other laws. They should also foster greater data interoperability and voluntary data sharing. Recognizing this need, the US Congress directed the National Institute of Standards and Technology to develop data governance tools.

As with data quality, data governance tools should be adaptable for different contexts. For instance, data formats for healthcare may not be well-suited for environmental data. Data governance tools should also factor in privacy-enhancing technologies and provide for reporting that is transparent and understandable while maintaining privacy and intellectual property protections.

Harmonization and audit tools are also benefits

As mentioned, AI regulatory approaches vary among jurisdictions. However, developing common AI definitions, technical standards, and related tools can help foster much-needed cross-border harmonization through other mechanisms. The EU-US Trade and Technology Council is a good example of an effort to build international convergence on these types of tools even when regulatory pathways diverge. Common definitions, standards, and related tools can also provide a good foundation for appropriate AI audits, which are emerging in legal requirements and proposals.

Facts matter

Even with the necessary enforcement and judicial resources reinforced by AI definitions and measurement tools, sharp focus must be maintained on the underlying facts of each case. As reflected in the OECD Framework for the Classification of AI Systems, the AI RMF, and other publications, there are myriad AI use cases that present varying risks. A “one-size-fits-all” approach that overlooks the relevant and unique facts of individual cases will not lead to optimal enforcement. Among other things, this approach could deny aggrieved parties adequate remedies.

Liability through the AI supply or value chain

As a further illustration of their importance, the underlying facts are critical for determining who should be liable for causing AI harm. This is paramount, as many organisations utilise third-party AI tools or data sets or engage in collaborative development. In addition, several jurisdictions have laws and policies under which more than one entity in the AI supply or value chain can potentially be liable for harm caused by AI applications. For instance, this is contemplated by the draft EU AI Act and the liability proposals discussed above. In the United States, the Department of Justice and the Equal Employment Opportunity Commission have confirmed that deployers can be liable for harm from using third-party AI tools.

Emerging case law considers the facts

The pivotal role of the facts is further highlighted by judicial cases addressing whether an AI developer should be liable for harm caused by a customer’s use of its product. For example, in one US case, the developer of an AI tool used when making bail decisions was not held liable for a murder committed by a defendant released while awaiting trial. Importantly, the court examined the facts and cited factors besides the developer’s AI tool that informed the bail decision.

In contrast, in a federal case involving discrimination against rental candidates brought in Connecticut, the court held that the vendor of an AI tool used to screen rental candidates could be liable for its customer’s discriminatory conduct. This decision highlighted the facts pertaining to how the AI vendor designed and marketed its product.

Expect the unexpected

There is a pressing need for efficient and effective AI enforcement across industry sectors and jurisdictions. Cases already raise many complicated legal issues, and the need for enforcement will continue to grow.

As AI applications evolve, enforcement questions could arise that we presently cannot anticipate. This is particularly true as we advance toward General Purpose AI (GPAI).

By building enforcement capacity, developing common AI definitions and measurement tools, and maintaining a steadfast focus on the facts, society should be positioned to manage current and future AI cases consistent with the OECD AI Principles. This will require support for judiciaries, which need appropriate resources to fulfil their critical role.