To be truly participative, stakeholder involvement should follow an AI system’s entire lifecycle

Participatory AI initiatives are meant to bring together diverse stakeholders to design and oversee AI systems that are fair and trustworthy. However, a recent review of 80 participatory AI initiatives revealed that, rather than providing participants with genuine decision-making power, the vast majority consult specific communities on narrow implementation details.

Meanwhile, every major AI lab now conducts some form of public consultation, the EU AI Act requires stakeholder involvement, and the OECD AI Principles regard stakeholder engagement as a fundamental element of trustworthy AI. Participation in AI governance has become more popular than ever. But an uncomfortable pattern is emerging: the more we discuss participation, the less we discuss meaningful engagement, real influence and power.

Many participatory approaches involve communities primarily during the early stages of the AI system lifecycle (design, data collection, and model development), while later stages, such as deployment, monitoring, and system evolution, attract far less attention. In my fieldwork across Kenya, Malawi, and the Philippines, a troubling question keeps surfacing: once the participatory design phase concludes, who truly governs the AI system? Even highly participatory processes often dissolve once the system is launched. Governance then shifts back to those who built or commissioned the system. Communities involved in shaping the design often have little influence over how systems evolve to meet ongoing community needs or how they expand beyond their initial scope.

The old lesson we keep relearning

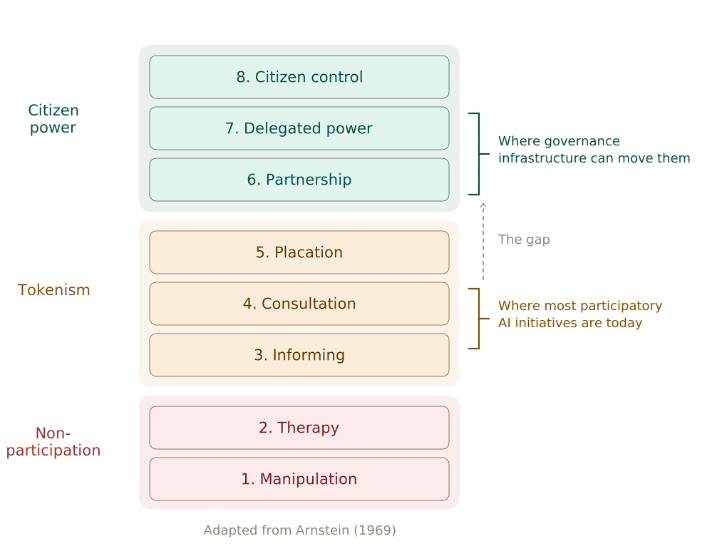

Back in 1969, urban planner Sherry Arnstein published a deceptively simple insight: not all participation redistributes power. Her “ladder of citizen participation” described eight levels, from manipulation at the bottom to citizen control at the top. She called the middle rungs “tokenism”: processes that perform inclusion without actually transferring authority. Arnstein was writing about urban planning in American cities, but her framework resonates powerfully in today’s AI landscape.

Over fifty years later, AI researchers are revisiting this lesson. Recent studies have introduced the concept of “participation washing”: the act of claiming inclusion without the necessary redistribution of power. Other researchers have documented how participatory rhetoric often conceals the ongoing centralisation of decision-making authority.

These findings paint a consistent picture. Participatory AI, despite methodological progress, largely remains at Arnstein’s “consultation” and “informing” levels rather than reaching the “partnership” or “delegated power” levels. The reasons for this are understandable. Genuine participation is costly, slow, and fosters accountability relationships that complicate rapid development and deployment cycles. Organisations optimising for scale naturally minimise governance complexity. However, this means participatory AI often reproduces the very power imbalances it seeks to address.

The real divide: Methods versus infrastructure

Despite all this, the field has made genuine progress in developing methods to meaningfully involve people in AI design. The repository of tools and metrics on the OECD.AI Policy Observatory showcases participatory frameworks that now guide humanitarian and public sector AI initiatives globally. Methods have become increasingly sophisticated, moving beyond superficial consultation towards authentic co-design. What remains underdeveloped is the infrastructure for ongoing governance after deployment.

Think of it this way. Methods answer: “How do we involve people in design?” Infrastructure addresses: “How do people exercise authority after deployment?”

This distinction matters because AI systems, as the OECD definition emphasises, are adaptive. They are never “finished.” They evolve continuously through new training data, model updates, and deployment expansions. Each evolution requires governance decisions.

AI systems also rely on collective data. They are not tools that individuals choose to use; rather, they are infrastructure that processes communal information and makes decisions that affect entire populations. Without proper governance, participation during the design phase can inadvertently legitimise systems that centralise power. “We consulted the community” becomes a justification for deployment, even if communities no longer hold any authority over the system’s future.

What commons governance looks like in practice

If the challenge is institutional, what institutional forms could address it? One promising approach draws on principles from natural resource commons. Political scientist Elinor Ostrom received the 2009 Nobel Memorial Prize in Economic Sciences for demonstrating that communities can effectively manage shared resources such as fisheries, forests and irrigation systems. The application of commons governance to knowledge and digital resources has been extensively developed in later work, from Hess and Ostrom’s study of knowledge commons to comprehensive frameworks for analysing knowledge commons governance across various institutional contexts.

Commons governance models have several features that matter for AI: collective ownership, where communities hold rights to the resource; participatory decision-making, where rules for usage and development are established through community processes; value sharing, where benefits are returned to the community; and continuous stewardship, where governance continues as long as the system operates.

The work I contribute to explores whether these principles can guide AI system development in resource-limited settings. In Malawi, our research team at NYU and our local partners are creating a voice-based crisis reporting system designed so that community governance councils oversee data practices, model updates, and deployment decisions. In the Philippines, we are collaborating with Kalinga State University on an Indigenous Knowledge Data Collaborative that allows communities to control how their traditional knowledge is digitised and used. The CARE Principles for Indigenous Data Governance, emphasising Collective benefit, Authority to control, Responsibility, and Ethics, offer a powerful framework here. These principles emerged from broader movements around Indigenous data sovereignty and represent a significant way to reshape data governance around community authority rather than external oversight.

While the OECD AI Principles mention data trusts as a mechanism for ethical data sharing, commons models take this logic further. Data trusts typically delegate authority to trustees acting on a community’s behalf. Commons models place community decision-making at the centre. The community does not transfer control to a benevolent intermediary; it retains authority itself.

Initiatives like Mozilla Common Voice and various Indigenous data-sovereignty movements are exploring this path worldwide, yet critical questions persist. Can commons governance operate effectively in resource-limited humanitarian settings? What occurs when commons governance moves too slowly for operational needs? Our fieldwork aims to address these exact questions.

What we are learning and what presents challenges

I want to be frank about the limitations of this work. These are early-stage experiments, not established models. However, they reveal important tensions that the wider field needs to address. If participatory AI governance is to go beyond words, we must be honest about what makes it challenging.

Power redistribution is costly. Governance meetings involve travel expenses, translation services, and participant compensation. In our work in Malawi, these costs are significant and surpass typical AI development budgets. However, this should be viewed not just as an expense but as an investment in risk mitigation. Without this investment, systems in complex environments risk rejection, non-adoption and even obsolescence.

Democracy is slow, and crises are urgent. Emergency responses demand quick decisions. Community governance takes time. The challenge is to differentiate between decisions that truly require rapid centralised action and those that only seem urgent to technical implementers.

“Community” is not uniform. Gender, age, and ethnicity influence who takes part and whose voice carries weight, and commons governance does not automatically resolve representation issues. However, it does make them visible and demands specific mechanisms to address them.

Sustainability remains uncertain. Meaningful participation requires ongoing resources, so governance cannot be an afterthought funded by short-term grants. It must be incorporated into budgets from the outset and treated as operational expenditure. International development finance models will need to adapt to support this. A system that works brilliantly for two years and then collapses because governance funding runs out is not a success.

What this means for AI policy

These experiments point to four ways to strengthen international frameworks for stakeholder engagement.

First, we need clearer distinctions between engagement levels. Consultative engagement involves gathering input to inform others’ decisions. Governance authority means stakeholders exercise binding power over system operation. Frameworks often conflate these two very different concepts. When a government or company says it has “engaged stakeholders,” does that mean it sought feedback or that it shared power? Making this distinction explicit helps implementers understand what they are truly committing to, and helps communities know what to expect.

Second, resource models need to change. Governance infrastructure, including ongoing meetings, capacity building, and conflict resolution, should be seen as a way to protect assets rather than as overhead. When an AI pilot fails, current assessments rarely distinguish technical causes from governance causes, which makes it harder to allocate resources effectively. Funding structures must recognise that governance is the mechanism that maintains an AI system’s viability and trust over time.

Third, we need governance metrics. The OECD effectively monitors AI policy implementation. But we also need ways to measure the quality of power distribution. Who makes binding decisions about how a system evolves? Just as “technical debt” builds up when code is rushed, “governance debt” accumulates when engagement is overlooked. Eventually, the interest is paid in the form of lost trust or system failure. Recent work on AI accountability frameworks suggests promising directions for creating such assessments.

Finally, community data ownership has an unclear legal status in most jurisdictions. Cross-jurisdictional research on legal frameworks that support collective governance would be very valuable. Experiments such as New Zealand’s Māori data sovereignty frameworks and Barcelona’s data cooperatives offer promising models to learn from. The goal is not to impose universal frameworks but to document what works, what does not, and where the legal gaps are most severe.

Community governance authority as infrastructure

The participatory turn in AI governance represents genuine progress. However, involving people during design without engaging them in governance risks becoming a sophisticated form of consultation that maintains existing power structures. This is exactly what Arnstein warned against more than fifty years ago.

Moving from participation to power involves treating community governance authority as infrastructure: something that requires investment, upkeep, and institutional backing comparable to the technical systems it oversees. It means funding that supports governance alongside technical development. It means metrics that evaluate power distribution, not just engagement processes. And it means honest recognition that meaningful participation is slower and more costly than consultation, but also more likely to produce systems that communities genuinely trust and use over the long term.

Eighty participatory AI initiatives were reviewed, and most of them never moved beyond consultation. That finding should concern anyone who values participation. If we are serious about closing the gap between engagement and authority, we must start building the governance infrastructure to do so. AI remains in its early stages, and there is much we still need to understand. But one thing is becoming clear: if AI is considered infrastructure, it requires governance infrastructure. Otherwise, there is no trustworthy AI.

The international community has made significant progress in defining what responsible AI looks like. The next step is investing in the unglamorous, difficult, and necessary work of establishing governance structures to uphold these standards. This involves supporting communities not only as participants in design but also as equal partners in decision-making.