Why AI Sandboxes matter for responsible innovation and public trust

Among the various tools available to policymakers, regulatory sandboxes have gained considerable prominence in the AI governance landscape because they enable supervised innovation testing under controlled conditions and within limited timeframes. This can help to identify risks early, foster regulatory learning and refine regulatory requirements before they are applied at scale.

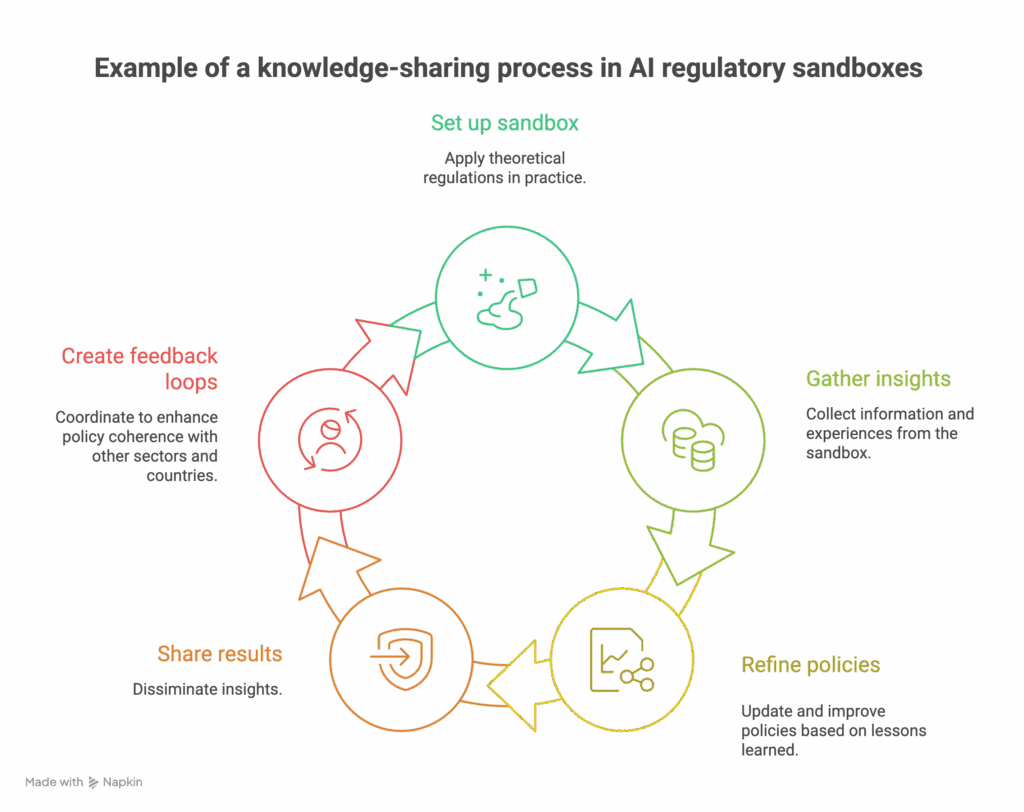

As AI regulatory sandboxes expand across jurisdictions and sectors, common design principles, recurring challenges and opportunities for greater effectiveness and policy coherence are emerging. As this happens, institutional co-operation and knowledge sharing are becoming increasingly important for ensuring coherent and effective regulatory experimentation both nationally and across borders.

In November 2025, the OECD webinar “AI Sandboxes: Sharing knowledge for success” brought together government officials, regulators and policy experts from seven countries to discuss the design and implementation of AI regulatory sandboxes. Here are the event’s key takeaways.

What is an ‘AI regulatory sandbox’?

Although there is no universally accepted definition, a regulatory sandbox generally offers temporary regulatory flexibility or waivers, allowing innovative products, services, or business models to be tested under controlled conditions and regulatory oversight. This approach promotes responsible experimentation and innovation while protecting the public interest.

To cite a few examples, Singapore’s AI healthcare sandbox offers guidelines for synthetic data to minimise privacy risks while allowing realistic testing. In the UK and other countries, AI-powered innovations in financial services are being tested under supervision that helps to prevent consumer harms arising from biased scoring and automated decision-making.

In AI, this approach aligns with the OECD AI Principles – specifically Principle 2.3, which encourages governments to promote experimentation to enable the safe testing and scaling of AI systems. Similarly, the Recommendation of the Council for Agile Regulatory Governance to Harness Innovation urges governments to facilitate greater experimentation, testing, and trialling to stimulate innovation under regulatory supervision.

AI regulatory sandboxes are valuable for testing new technologies and rules in a safe, controlled way before full rollout. They offer less benefit if risks are low or if rules are already well established, but can be useful for compliance and learning in more complex regulatory environments. Decisions to utilise sandboxes should follow clear criteria to ensure efforts are appropriately targeted. Generally, initiatives with high innovation potential, substantial risks, and opportunities for regulatory discovery and improvement (including by removing barriers to beneficial innovation) should be prioritised. Key regulators, industry actors and other relevant stakeholders should be involved in this process.

In July 2023, the OECD published the policy paper Regulatory Sandboxes in artificial intelligence. Building on lessons from fintech, the report highlights the benefits of AI sandboxes, including accelerating market entry, improving regulatory understanding, and stimulating investment. It also explains why adapting the traditional sandbox model to AI presents unique technical and governance challenges. As a cross-sectoral technology, AI covers multiple legal, ethical, and technical domains, requiring strong coordination among several regulatory authorities.

Since the paper’s release, the use of AI sandboxes has accelerated. By February 2025, the Datasphere Initiative identified over 60 sandboxes worldwide related to AI, data, and technology. Furthermore, key regulatory and policy frameworks, including the European Union’s AI Act and America’s AI Action Plan, view regulatory sandboxes as essential tools for fostering AI innovation and ensuring the safe development and adoption of AI.

Insights shared during the webinar by experts from Spain, Thailand, Luxembourg, Brazil, Korea, Israel and Singapore offer valuable lessons on how different jurisdictions design and operate AI sandboxes, highlighting what works, where challenges arise, and how approaches vary across contexts. For example, Spain provides appropriate, tailored guidance to ensure effectiveness and facilitate regulatory compliance further down the line. Brazil’s sequenced approach includes capacity-building to enable participants to contribute to effective experimentation and evaluation.

Six insights about AI regulatory sandboxes from around the globe

1. AI sandboxes are not uniform

According to the Datasphere Initiative, three primary types of sandboxes are emerging worldwide, especially within the context of AI:

- Regulatory sandboxes: Collaborative processes where regulators work with innovators to test innovations under regulatory supervision.

- Operational sandboxes: Testing environments and infrastructure where data can be hosted and accessed in controlled conditions.

- Hybrid models: Combining regulatory oversight with operational capabilities, sometimes offering infrastructure and operational spaces for testing and experimentation (e.g., “supercharged sandbox” in the UK).

These models intervene at different phases of the policy and regulatory lifecycle. Some are employed before formal regulation to identify gaps and suggest necessary updates. Others operate during the development process, supporting iterative regulatory design. Some focus on helping understand legal obligations and ensure regulatory compliance, such as under the EU AI Act. Sector-specific sandboxes are also common, with countries adopting different approaches depending on regulatory priorities and institutional settings. Across these models, regulatory waivers are frequently used to enable experimentation under regulatory supervision.

Several experimentation-related initiatives, such as regulatory testbeds, living labs, or policy prototyping, share certain features and objectives with regulatory sandboxes. What truly distinguishes sandboxes is that they are the most institutionalised form of regulatory experimentation, usually led by regulators and integrated with regulatory supervision.

2. Coordination is essential

AI does not always fit neatly within existing sectoral, jurisdictional, or administrative boundaries. Its development and deployment span multiple regulatory domains, making effective coordination crucial. Luxembourg’s approach demonstrates this well, showing that AI sandboxes are more than testing spaces—they are platforms for regulatory collaboration and coordination. Luxembourg’s model brings together 11 authorities and innovation actors to align priorities and prevent fragmentation, emphasising the need for skilled project management alongside legal and technical expertise.

Stakeholders within the AI ecosystem pursue different objectives: data protection authorities concentrate on privacy, cybersecurity authorities on resilience, and innovators on efficiency and speed. They also offer different kinds of expertise. Sandboxes can offer a neutral space to reconcile these priorities, fostering trust and mutual understanding. To achieve this, managing the expectations of involved parties and clearly defining the objectives of a sandbox are particularly important steps.

In Thailand, a multi-faceted approach to AI regulatory sandboxing shows how balancing safety and flexibility depends on agile cooperation between sectoral regulators and industry. This approach integrates three complementary pathways: in the short term, fostering AI deployment where existing rules already allow it; in the medium term, establishing sector-specific sandboxes to manage domain-specific risks and opportunities; and eventually, developing system-wide sandboxes, including for government use, to test cross-cutting applications. Together, these mechanisms help promote AI-driven innovation within current legal frameworks while leveraging testing and experimentation to better understand the implications of emerging AI applications. Effective coordination is essential to prevent duplication of effort, regulatory gaps or conflicting rules.

Sandboxes can also play a valuable role in involving expert and academic communities in the development of AI regulation, with countries such as Spain, Luxembourg, and Brazil benefiting from such expertise at multiple stages of sandbox design and operation.

3. From policy to practice, and back again

AI sandboxes are increasingly used to bridge the gap between regulatory frameworks and real-world implementation. For example, Spain’s regulatory sandbox pilot translates the EU AI Act’s requirements for high-risk AI applications into practical compliance steps, enabling early identification of gaps and clarifying obligations for deployers. In December 2025, the Spanish AI Supervision Agency (AESIA) published a series of introductory and technical resources, developed from insights gathered during the regulatory sandbox pilot, that demonstrate how sandboxes can support evidence-based compliance guidance. Luxembourg’s AI sandbox, in turn, acts as a coordination platform to ensure lessons learned on overlapping obligations feed into the domestic operationalisation of the EU AI Act and related future guidance.

In July 2025, Singapore launched its Global AI Assurance Sandbox, building on insights from a previous pilot phase, to create a testing environment where creators or deployers of GenAI applications can have their applications evaluated by expert technical testers. Key risk aspects examined during testing include hallucination, undesirable content, data leakage, and vulnerability to adversarial prompts, with the findings informing policy guidance. Brazil’s Regulatory Sandbox on AI and Data Protection also exemplifies this trend. It aims to promote transparency, privacy by design and responsible innovation in AI systems that handle personal data, using structured experimentation to help innovators achieve regulatory compliance and assist regulators in understanding how rules work in practice and where adjustments may be necessary.

Simultaneously, AI sandboxes continue to shape future regulatory frameworks. In Thailand, sector-specific sandboxes for digital payments, digital assets, banking and insurance are expected to help regulators understand real-world AI applications and prepare for system-wide governance. As sandboxes move regulation from theory to practice and back to policy, they create an iterative loop that can strengthen trust and adaptability in AI governance. To achieve this, sandboxes should generate insights to inform better regulation. This, in turn, requires consistent reporting, sharing of results, and the establishment of feedback loops across sectors and countries to boost compliance and policy development.

Nevertheless, translating sandbox results into regulatory improvements remains challenging, even in countries like Korea, which has considerable experience conducting regulatory experiments across sectors.

4. Incentives matter

Participation in AI sandboxes is not automatic. Clear and well-designed incentives are essential for both innovators and regulators. Israel, for instance, has introduced a government fund that provides financial support, legal counselling and mentorship for regulators launching AI sandboxes, while also offering grants to participating firms. Similarly, Singapore reduces testing-related barriers to GenAI adoption through practical guidance and access to specialised testing partners.

These models recognise a fundamental challenge: AI experimentation is resource-intensive and needs to focus on areas where it matters most. Without targeted support, regulators may struggle to operate sandboxes, and companies might be hesitant to participate. Furthermore, when offering incentives, authorities should encourage a diverse mix of participants—small firms, big players, different sectors, and different AI applications—to ensure that sandbox insights are both representative and robust. The complex nature of regulatory sandboxes themselves may also pose challenges for some applicants or even participants. In this context, Brazil outlined a three-stage execution framework, starting with capacity building for selected participants undertaken by a partner university, before advancing to the experimentation and evaluation phases.

5. The growing need for interoperability and cross-border collaboration

As AI systems operate across borders, AI sandboxes increasingly need to extend beyond national boundaries. Without international coordination, firms may engage in ‘jurisdiction hopping’, seeking the most permissive regulatory environments. Interoperability between sandboxes is thus becoming a governance necessity. Cross-border collaboration is also crucial for international regulatory cooperation. In Brazil’s case, preparatory work to develop the sandbox included international consultations on the experimental methodology. This approach allows the project to benefit from international practices and standards and facilitates the sharing of experiences in later stages.

Cross-border sandboxes have already proven their worth. Singapore’s Global AI Assurance Pilot, for example, involved 17 AI deployers from nine countries collaborating with 16 specialised testers from the US, UK and Europe. Use cases ranged from summarisation and chatbots to AI applications in healthcare, finance and human resource management. These cross-border tests allowed regulators and companies to understand how AI performs in different legal, cultural and technical environments. For example, a chatbot that safely managed English queries inadvertently leaked confidential information when prompted in Mandarin, demonstrating the importance of multilingual testing.

6. AI sandboxes come with challenges of their own

AI sandboxes face several challenges. Regulators often encounter capacity limitations and lack the technical expertise or project management skills necessary to supervise complex AI systems. Fragmentation and coordination issues also arise, as AI spans multiple sectors and necessitates collaboration among numerous authorities, including across borders.

Designing suitable requirements and safeguards for sandbox frameworks can be challenging, especially when multiple regulatory regimes are involved, as demonstrated by Brazil. Deciding the appropriate level of transparency, human oversight, and data governance can be particularly difficult when firms seek waivers to speed up testing.

At the same time, Korea’s experience demonstrates that although safety and consumer protection rules are vital, excessively strict requirements may deter participation, especially among SMEs, and hinder experimentation. Safeguards should therefore be proportionate to and aligned with the risks posed by the technology, as overly complex procedures can undermine the agility required in regulatory sandboxes in a rapidly evolving AI landscape.

Measuring the impact of AI sandboxes is also difficult. Without clear metrics, sandboxes risk becoming isolated experiments rather than influential policy tools. Ideally, impact should be monitored across various areas, such as faster time-to-market for compliant AI systems, increased regulatory clarity and coherence (including through less fragmentation), and tangible updates to laws and standards shaped by sandbox insights.

Furthermore, as highlighted in a 2024 OECD policy paper, there are potential limitations concerning legality, feasibility, resources, and equity. Regulatory experiments should adhere to constitutional norms, including those concerning equal treatment.

A vital element for responsible innovation and public trust?

As a relatively new regulatory tool designed to address a rapidly evolving general-purpose technology, AI sandboxes raise significant questions about their role. Questions raised during the webinar include:

- What role might civil society play in AI sandboxing?

- How can public institutions build the expertise required to supervise regulatory sandboxes involving frontier-level AI systems?

- How can sandboxes balance flexibility with protecting long-term societal values (e.g., what types of safeguards should be in place regarding regulatory exemptions)?

- What measures, such as reporting and documentation requirements, talent management, and capacity building, are necessary to ensure transparency and build trust in AI regulatory sandboxes?

As AI governance develops and these questions are addressed, AI sandboxes hold the potential to become key tools for promoting responsible innovation, enhancing governance and building public trust in AI systems across the globe.

The OECD is well placed to advance these objectives by facilitating the systematic exchange of knowledge and expertise, and by developing standardised, comparable frameworks for measuring outcomes. It can also use its convening role to support alignment on guidance for the targeting, design and implementation of AI regulatory sandboxes. By grounding this work in empirical evidence and practical experience, the OECD can help strengthen the overall evidence base and inform more effective policy approaches.

The authors would like to thank Natalie Cohen, Lucia Russo, Guillermo Hernandez, Xavier Pearson, Viktor Samek and John Leo Tarver for their contributions to this piece.