AIM: AI Incidents and Hazards Monitor

Automated monitor of incidents and hazards from public sources (Beta).

AI-related legislation is gaining traction, and effective policymaking needs evidence, foresight and international cooperation. The OECD AI Incidents and Hazards Monitor (AIM) documents AI incidents and hazards to help policymakers, AI practitioners, and all stakeholders worldwide gain valuable insights into the risks and harms of AI systems. Over time, AIM will help to show risk patterns and establish a collective understanding of AI incidents and hazards and their multifaceted nature, serving as an important tool for trustworthy AI. AI incidents seem to be getting more media attention lately, but they've actually gone down as a share of all AI news (see chart below!).

The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

AI-Generated Fake News Distorts South Korea's 5·18 Democratic Movement

AI-generated fake newspaper articles and images falsely depicting the 5·18 Democratic Movement as a North Korean-led riot have spread widely online in South Korea. Authorities are investigating and considering legal action, as the misinformation distorts historical truth and harms victims and public understanding.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

AI system task:

Why's our monitor labelling this an incident or hazard?

An AI system was used to generate manipulated images and false content that distort historical facts, which has directly led to harm to communities by spreading misinformation and causing social disruption. The AI's role in fabricating and disseminating these false narratives is pivotal to the harm occurring. Therefore, this qualifies as an AI Incident under the framework, as the AI-generated content has directly caused harm to communities and violated rights related to truthful information and respect for victims.[AI generated]

AI Reconstructs and Leaks Protected Cockpit Audio from UPS Crash, Forcing NTSB to Restrict Access

AI tools were used to reconstruct and publicly share cockpit voice recordings from spectrogram images released in the NTSB's UPS Flight 2976 crash investigation. This violated federal privacy laws, prompting the NTSB to suspend public access to its investigation dockets to prevent further unauthorized disclosures.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

An AI system or AI-related technology was used to reconstruct audio from a spectrogram image, which is a form of computational image recognition and signal processing. This reconstruction led to the release of sensitive cockpit voice recordings that are legally protected and intended to remain confidential. The harm involves violation of privacy rights and potential disruption to the integrity of the investigation, which are harms to individuals and legal rights. Since the harm has already occurred (the audio was posted online), this qualifies as an AI Incident. The NTSB's response to restrict access is a mitigation measure but does not change the classification of the event as an incident.[AI generated]

Waymo Suspends Driverless Car Services in Atlanta and Texas Due to Flooding Risks

Waymo suspended its autonomous vehicle services in Atlanta and Texas after heavy rains stranded at least one driverless car in floodwaters. The precautionary pause was prompted by the AI system's inability to safely navigate hazardous weather, highlighting operational risks but resulting in no injuries or property damage.[AI generated]

AI principles:

Industries:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event involves an AI system (Waymo's autonomous vehicles) that malfunctioned in the sense that a vehicle was stranded due to flooding, but no injury or damage was reported. The company paused service proactively to prevent possible harm. Since no actual harm occurred and the pause is a precaution, this constitutes an AI Hazard, as the AI system's use in severe weather conditions could plausibly lead to harm if continued.[AI generated]

Turkey to Deploy AI-Powered Surveillance for School and Exam Security

Turkey's Ministry of Education announced plans to implement AI-supported image processing camera systems and card-based entry for enhanced security in schools and during the national high school entrance exam (LGS). While no harm has occurred yet, the AI surveillance raises potential privacy and misuse concerns.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article mentions the intended deployment of AI-supported image processing for security in schools, which involves AI systems. Since these systems are planned but not yet in use, and no harm or incident has occurred, this constitutes a plausible future risk scenario where AI could lead to incidents such as privacy violations or other harms. Therefore, it fits the definition of an AI Hazard rather than an Incident or Complementary Information.[AI generated]
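
The rationales on this page repeatedly apply the same distinction: realized harm linked to an AI system is labelled an AI Incident, plausible but unrealized harm an AI Hazard, and updates on past events Complementary Information. The Python sketch below illustrates that decision order only. The `Event` fields, the `classify` function, and the fallback to "Unrelated" are hypothetical names and assumptions made for illustration; they are not the monitor's actual classifier or the OECD's formal definitions.

```python
from dataclasses import dataclass
from enum import Enum


class Label(Enum):
    INCIDENT = "AI Incident"
    HAZARD = "AI Hazard"
    COMPLEMENTARY = "Complementary Information"
    UNRELATED = "Unrelated"


@dataclass
class Event:
    # Hypothetical fields; a real pipeline would derive these from the article text.
    ai_involved: bool
    harm_realized: bool
    harm_plausible: bool
    follows_up_past_event: bool = False


def classify(event: Event) -> Label:
    """Apply the decision order suggested by the rationales (an assumption)."""
    if not event.ai_involved:
        return Label.UNRELATED
    if event.harm_realized:
        # Harm has already occurred and is linked to the AI system.
        return Label.INCIDENT
    if event.harm_plausible:
        # No harm yet, but the AI system's use could plausibly lead to it.
        return Label.HAZARD
    if event.follows_up_past_event:
        # An update on, or response to, an earlier incident or hazard.
        return Label.COMPLEMENTARY
    # Assumed fallback when none of the conditions above apply.
    return Label.UNRELATED


# The planned school surveillance deployment: AI involved, no harm yet, harm plausible.
planned_surveillance = Event(ai_involved=True, harm_realized=False, harm_plausible=True)
print(classify(planned_surveillance).value)  # prints "AI Hazard"
```

Checking realized harm before plausible harm mirrors how the rationales treat an event where both apply: it is labelled an incident, and any mitigation that follows does not change the label.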

AI Moderation Failures on Major Platforms Enable Widespread Financial Scams in Europe

Consumer groups across Europe have filed complaints against Google, Meta, and TikTok, alleging their AI-driven content moderation systems failed to effectively detect and remove fraudulent financial ads. This failure has allowed scams to proliferate, exposing millions of users to financial harm despite regulatory requirements under the EU Digital Services Act.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

AI system task:

Why's our monitor labelling this an incident or hazard?

The platforms explicitly use AI to detect and remove fraudulent ads, which are part of their content moderation systems. The complaints highlight that these AI systems are not fully effective, allowing fraudulent ads to reach users and cause financial harm. This is a direct link between AI system use and harm to consumers, fitting the definition of an AI Incident. The event is not merely a potential risk or a governance update but reports ongoing harm due to AI system shortcomings.[AI generated]

Meta Settles Lawsuit Over AI-Driven Social Media Harm to Students

Meta settled a lawsuit with Kentucky's Breathitt County School District, which alleged that AI-driven engagement features on social media platforms contributed to student mental health issues. The case, a bellwether among 1,200 similar lawsuits, highlights the role of AI in causing harm to youth and school communities.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event involves AI systems in the form of social media platforms that use AI algorithms to maximize user engagement, which has been alleged to cause mental health harms to students. The lawsuit claims that the platforms were deliberately designed to be addictive, which is a direct consequence of AI-driven recommendation and content delivery systems. The harms include anxiety, depression, and self-harm among students, which are injuries to health. The settlement and ongoing litigation confirm that these harms are recognized and linked to the AI systems' use. Hence, this is an AI Incident as per the definitions provided, since the AI system's use has directly or indirectly led to harm to groups of people.[AI generated]

Malaysia Demands TikTok Address AI-Generated Offensive Content Targeting King

Malaysian authorities have ordered TikTok to explain its failure to promptly moderate and remove AI-generated offensive and defamatory content, including fake videos and manipulated images targeting the king. The inadequate response of TikTok's AI moderation system has raised concerns about public order and national harmony in Malaysia.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions AI-generated videos and manipulated images as part of the offensive content targeting the king, indicating the involvement of AI systems in creating harmful content. The harm is realized as the content is described as grossly offensive, false, and menacing, which threatens public order and respect for constitutional institutions, fitting the harm to communities category. The regulator's legal notice to TikTok for failure to moderate such content promptly further supports that the AI system's outputs have directly led to harm. Hence, this is an AI Incident rather than a hazard or complementary information.[AI generated]

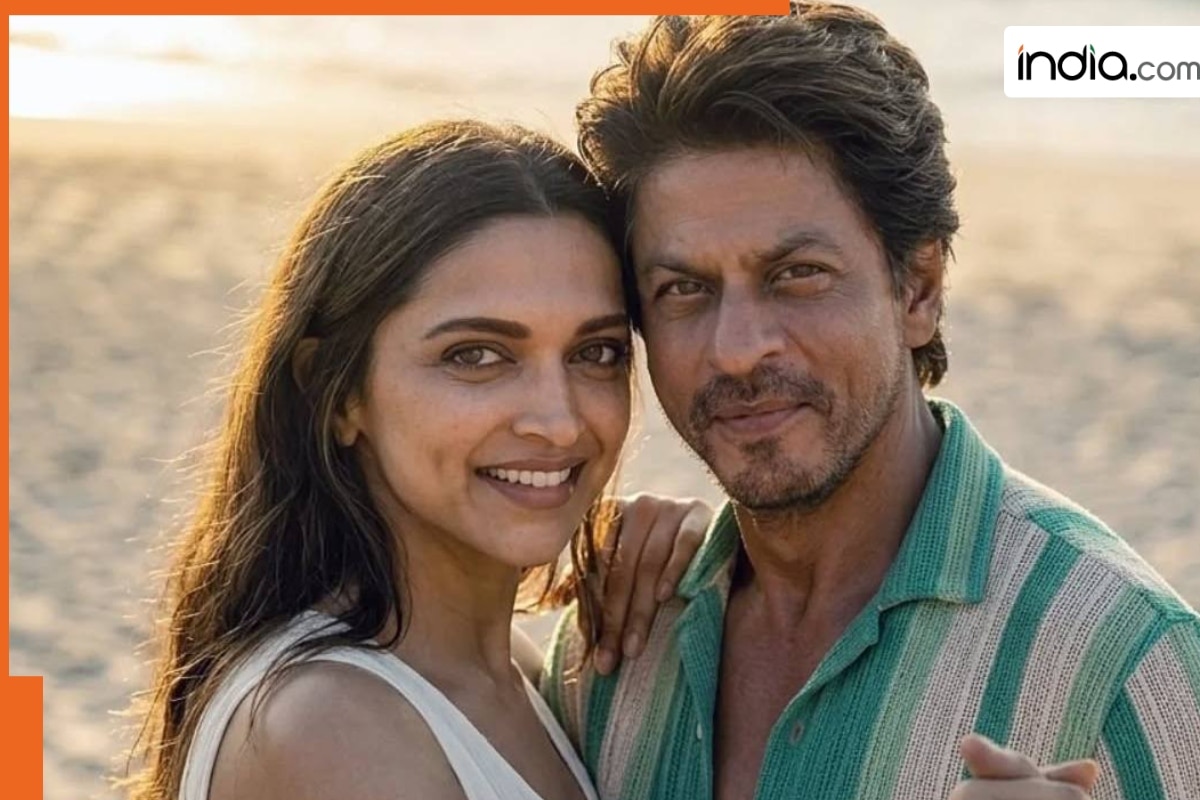

AI-Generated Fan Edit of Shah Rukh Khan's 'King' Sparks Security Crackdown After Leaks

An AI-generated fan video using leaked and paparazzi footage from Shah Rukh Khan's upcoming film 'King' went viral online, violating intellectual property rights and prompting the production team to tighten security on set in Mumbai. The incident led to mass reporting and removal of the unauthorized content from social media platforms.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions an AI-edited video that uses leaked visuals to create a fake narrative of the film, which has been circulated online. This unauthorized use of AI-generated content infringes on intellectual property rights and disrupts the film's intended release and creative control. The harm is realized, not just potential, as the fake video has already shocked the public and caused concern. The AI system's role is pivotal in generating this misleading content. Hence, this event meets the criteria for an AI Incident involving violation of intellectual property rights and harm to the community.[AI generated]

New York City Officials Warn of Potential Economic Disruption from AI

New York City Comptroller Mark Levine released a report warning that artificial intelligence could eliminate thousands of jobs and trigger major economic shocks. The report outlines multiple scenarios, urging the city to prepare for possible disruptions to employment, wages, and tax revenue due to AI-driven automation.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Why's our monitor labelling this an incident or hazard?

The event involves AI as a factor influencing economic forecasts and budget planning, but no realized harm or incident has occurred yet. The potential for AI to cause economic downturn is speculative and presented as a plausible future risk. Therefore, this qualifies as an AI Hazard because it concerns a credible risk of future harm stemming from AI's economic impact, not an AI Incident or Complementary Information.[AI generated]

Spanish Ombudsman Investigates Harmful AI-Driven Social Media Content Affecting Minors

The Spanish Ombudsman launched an investigation after a minor suffered self-harm and suicidal tendencies due to harmful content recommended by social media algorithms (AI systems) on X. Authorities are assessing measures to protect minors, following complaints about the platform's failure to remove dangerous content.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions that social media algorithms detect user behavior and automatically serve harmful content, which clearly involves AI systems. The harm is realized: a minor with a history of self-harm and a suicide attempt was exposed to harmful content via these algorithms. The investigation and regulatory debate further confirm the seriousness of the harm caused. Hence, this is an AI Incident due to the AI system's role in causing harm to a vulnerable individual.[AI generated]

AI-Powered Monitoring System Reduces Motorcycle Accidents in Brazil

Mobility platform 99 deployed an AI-driven monitoring system using sensors and algorithms to track and alert partner motorcyclists about risky driving behaviors. The initiative, piloted in Rio de Janeiro, led to a significant reduction in accidents, with up to 82% of riders correcting unsafe practices after receiving app alerts.[AI generated]

Industries:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The described system uses AI algorithms to monitor and analyze driving behavior, issuing alerts and enforcing restrictions to improve safety. The use of this AI system has directly led to a reduction in accidents, which constitutes harm reduction to people, fitting the definition of an AI Incident. The event involves the use of an AI system and its outputs have directly led to a positive impact on health and safety, which is a form of harm mitigation. Therefore, this qualifies as an AI Incident rather than a hazard or complementary information.[AI generated]

Japan's Internal Affairs Ministry Urges Stronger AI Cybersecurity Measures

Japan's Ministry of Internal Affairs convened industry and local government leaders to address rising threats from advanced AI-driven cyberattacks, including concerns over Anthropic's Claude Mutos. Officials called for increased budgets, personnel, and public-private cooperation to strengthen defenses and prevent potential disruptions or data leaks. No actual AI incident has occurred yet.[AI generated]

AI principles:

Industries:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions a high-performance AI system (Anthropic's Claude Mutos) and concerns about its malicious use in cyberattacks. The Ministry's meeting and warnings indicate a credible risk that such AI could be exploited to harm critical infrastructure sectors. No actual harm or incident is reported, only preventive measures and alerts. Hence, the event is best classified as an AI Hazard, reflecting plausible future harm from AI misuse in cybersecurity contexts.[AI generated]

LaLiga to Implement AI for Referee Evaluation and Assignment

LaLiga, led by president Javier Tebas, announced plans to implement AI systems next season to objectively score and assign referees in Spanish football. The AI aims to reduce subjectivity in referee evaluations, but no incidents or harm have been reported as the system is still under development.[AI generated]

Industries:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article clearly describes an AI system being developed and planned for use in referee evaluation and assignment in LaLiga. However, it does not report any harm, malfunction, or violation resulting from this AI use. The AI's role is advisory and supportive, with final decisions made by humans. Since no harm has occurred and the AI system's use is prospective, this fits the definition of an AI Hazard, as the AI system's deployment could plausibly lead to incidents or benefits in the future but has not yet caused harm.[AI generated]

AI-Driven Fraud Causes Significant Financial Losses in Ukraine

In Ukraine, criminals are increasingly using AI technologies, including deepfake audio and AI-generated phishing messages, to scale and personalize financial fraud. These sophisticated attacks have led to a surge in losses, reaching 1.4 billion UAH in 2025, with most incidents involving social engineering and online deception.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly states that AI is used to generate fake calls, messages, and websites that are difficult to distinguish from genuine ones, enabling fraudsters to successfully deceive victims and cause financial harm. This constitutes direct harm to people (financial injury and psychological harm) caused by the use of AI systems. Therefore, this qualifies as an AI Incident because the AI system's use has directly led to realized harm through sophisticated fraud schemes.[AI generated]

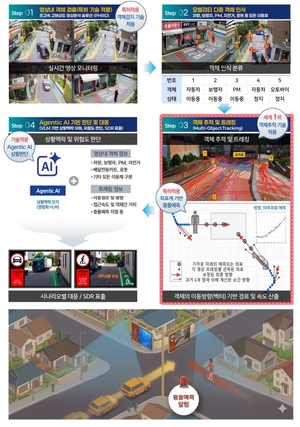

Yangju City Deploys AI-Based Traffic Safety System to Prevent Accidents

Yangju City, South Korea, has been selected for the Ministry of Land, Infrastructure and Transport's 2026 AI City Innovation Project. The city will implement an agentic AI system to predict and warn of collision risks in real time on local roads, aiming to prevent traffic accidents and enhance public safety.[AI generated]

Industries:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event involves the development and planned use of an AI system for traffic safety, which could plausibly lead to harm prevention but does not describe any realized harm or malfunction. Therefore, it qualifies as an AI Hazard because the AI system's deployment could plausibly lead to preventing or causing incidents in the future, but no incident has occurred yet. It is not Complementary Information because it is not an update or response to a past incident, nor is it unrelated as it clearly involves AI technology with potential safety implications.[AI generated]

AI Data Center Power Surge Threatens US Grid Stability and Consumer Costs

Wood Mackenzie warns that the rapid expansion of AI-driven data centers in the US is straining electricity grids, causing near grid failures and threatening project viability, market stability, and consumer costs. Technical and regulatory challenges, along with unprecedented power demands, have already led to harmful power fluctuations and increased risks for infrastructure and ratepayers.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article involves AI systems indirectly through the power demands of AI data centers, which rely on AI workloads. The challenges and risks described relate to the infrastructure and regulatory environment needed to support these AI systems. No direct or indirect harm has yet occurred as a result of AI system malfunction or use; rather, the article outlines potential future risks and technical/regulatory hurdles that could plausibly lead to harm if unaddressed. Therefore, this qualifies as an AI Hazard because it concerns plausible future harm related to AI system deployment and infrastructure stress, but no incident has yet materialized.[AI generated]

UK Launches Pilot Scheme for Self-Driving Taxi and Bus Services

The UK government has opened applications for operators to run self-driving taxi and bus services, allowing firms like Wayve to pilot autonomous vehicles carrying passengers on public roads later this year. The scheme aims to improve accessibility and safety, but introduces potential risks associated with AI-driven transport.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event involves the use of AI systems (self-driving vehicles) and their deployment on public roads, which could plausibly lead to harm if safety issues arise. However, the article does not report any realized harm or incidents caused by these AI systems. Instead, it discusses regulatory and safety measures, pilot programs, and anticipated benefits. Therefore, this event represents a credible potential risk scenario (AI Hazard) rather than an incident or complementary information about a past event.[AI generated]

AI-Induced Job Anxiety and Backlash Among US Graduates

In the US, widespread deployment of AI systems like ChatGPT and Gemini has led to job losses, heightened anxiety, and public backlash among young graduates. Incidents include booing of tech leaders at graduation ceremonies, layoffs, and even violent threats against AI-related figures, reflecting deep societal concerns over AI-driven workforce disruption.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly links AI development and deployment to real-world harms, including violent threats and attacks on individuals associated with AI companies or AI-related infrastructure. The harms are direct or indirect consequences of AI's societal impact, such as job displacement fears and public backlash. The presence of AI systems is clear (ChatGPT, Claude, Gemini), and the harms include injury or threats to persons and social disruption. Hence, this qualifies as an AI Incident rather than a hazard or complementary information.[AI generated]

AI-Generated Code Accelerates Cybersecurity Risks as Firms Ship Vulnerable Software

Research by Checkmarx reveals that 75% of organizations knowingly deploy vulnerable code, a risk exacerbated by AI-generated applications. AI tools have drastically reduced the time for threat actors to exploit vulnerabilities—from 840 days in 2018 to less than two days in 2026—leading to increased data breaches and cyberattacks.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions AI tools generating code that is shipped with known vulnerabilities, leading to exposure of sensitive data and increased cybersecurity risks. The AI system's use (code generation) is directly linked to the harm (data exposure and security flaws). The harm is realized, not just potential, as thousands of vulnerable apps are already live. This fits the definition of an AI Incident because the AI system's use has directly led to harm to property and communities through data breaches and increased cyberattack risks.[AI generated]

US School District Settles With Social Media Giants Over AI-Driven Mental Health Harms

Meta, Snap, TikTok, and YouTube reached confidential settlements with a Kentucky school district, avoiding a landmark trial. The district accused the platforms' AI-driven algorithms of causing student mental health crises through addictive design. Over 1,200 similar lawsuits are ongoing across the US, highlighting AI's role in youth harm.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The social media platforms operated by Meta use AI-driven algorithms to capture and retain user attention, which is central to the allegations of causing mental health harm to adolescents. The lawsuit and settlement directly relate to harms caused by the use of these AI systems. Although the article does not detail specific AI malfunctions, the design and use of AI to maximize engagement have indirectly led to psychological harm, fitting the definition of an AI Incident. The event is not merely a potential risk or a general update but concerns realized harm and legal consequences, thus not a hazard or complementary information.[AI generated]