Designing transparency for government AI: Insights from the UK’s Algorithmic Transparency Recording Standard initiative

In countries around the world, the public sector must ensure the trustworthiness of any algorithmic tools it wants to deploy by verifying that they function as intended, ensuring fair and acceptable use, and guaranteeing explainability of outputs.

Still, numerous high-profile incidents have emerged in which a failure to consider one or more of these factors led to harmful outcomes in areas such as educational qualifications, social security, and debt. The widespread use of AI is still new, and much remains to be done to standardise approaches to safe deployment.

Nobody notices infrastructure until it fails

This is a common saying in the public sector, and it applies to AI governance too. Much of the important work consists of routine day-to-day processes, guidance documentation, and activities within organisations that deliver the safety and responsible use on which trustworthy AI depends.

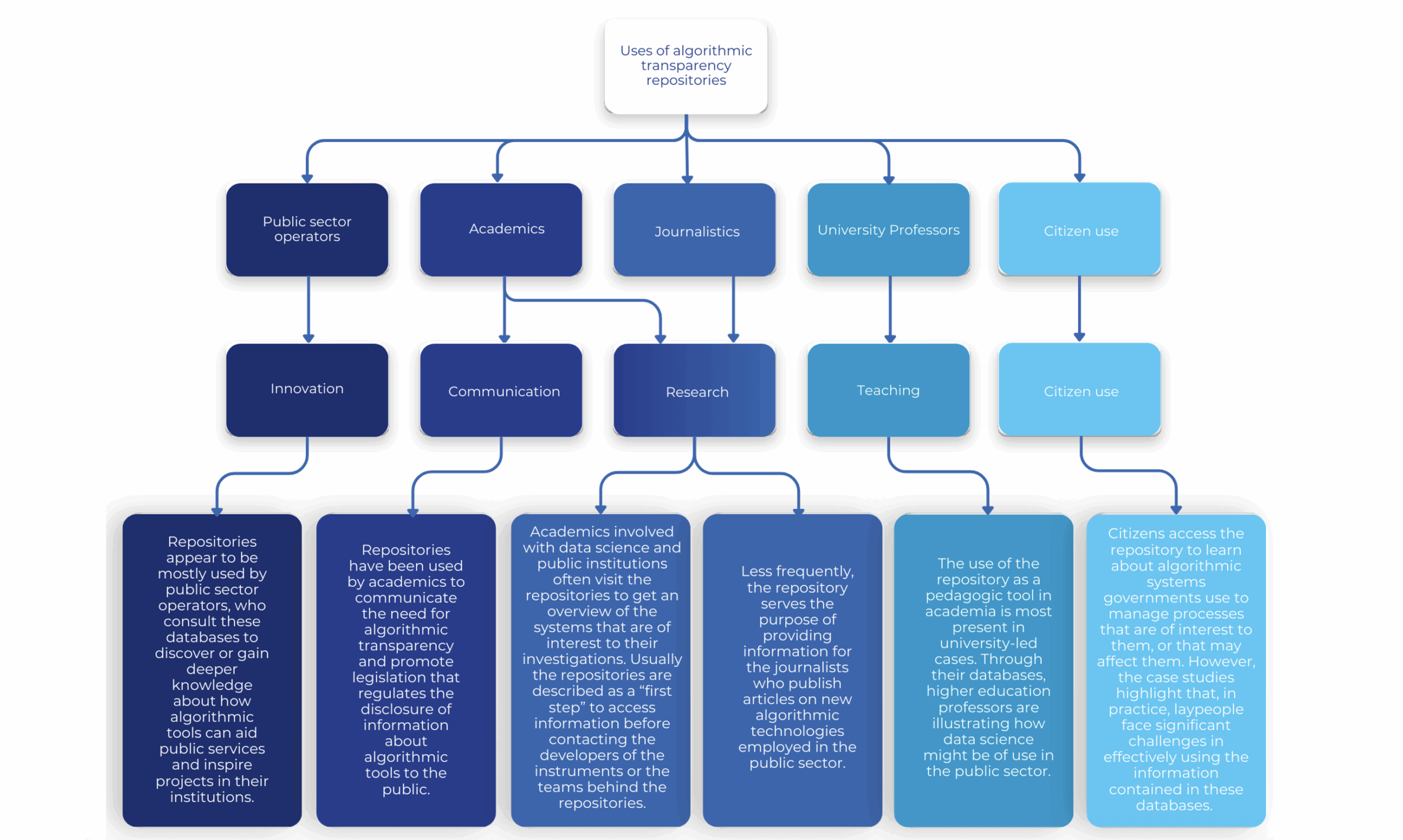

The Algorithmic Transparency Recording Standard (ATRS) fits this description. It is a UK government initiative that establishes a standardised way for public sector organisations to publish information about how and why they use algorithmic tools.

In 2024, GPAI ran a project on Algorithmic transparency in the public sector, led by Juan David Gutierrez from Universidad de los Andes in Colombia and supported by CEIMIA (Centre d’Expertise International de Montréal en Intelligence Artificielle) – one of the three Centres of the GPAI Expert Community. The study reviewed global best practices and featured three case studies from Chile, the European Union and the UK. At its core, it explored why countries pursue such initiatives and how championing transparency can help avoid controversies and improve public trust. Before diving into the details of the standard, it is worth looking at a few cases that illustrate why such a standard is necessary.

A ‘mutant algorithm’, or just opaque?

In the UK, one of the most high-profile controversies occurred in 2020, when a grading algorithm was used to estimate results for students who could not sit formal exams because of the COVID-19 pandemic. The algorithm was subsequently found to unfairly benefit private school students while holding down the grades of students at publicly funded schools. There are also several examples of opaque uses of algorithms within benefits systems. Australia’s ‘Robodebt’ assessment scheme came under scrutiny for generating false debts, with significant impacts on the individuals affected. In Denmark, algorithms used by the country’s welfare agency have been the subject of reports of potential mass surveillance, discrimination and social scoring.

Beyond governments, equally high-profile cases have involved algorithms used in hiring systems or credit scoring that discriminated against people based on their gender, race or ethnicity.

Many of these controversies were exacerbated by the opacity of the algorithms used: certain impacts could have been reduced by proactively sharing information about tools and by working with the public and civil society during testing, development and implementation to identify risks ahead of deployment. The tools’ developers would have had the opportunity to engage with comprehensive information in the public domain, rather than relying on incorrect or incomplete information. This is all essential to ensure our emerging ‘algorithmic infrastructure’ stays ‘routine’, behind the scenes, and working as intended.

To address this, the UK government published the ATRS in November 2021. In a nutshell, the ATRS provides a structured template and a public repository for documenting how algorithmic tools work, what their purpose is, and how they affect decisions about citizens, with the aim of improving transparency, accountability and public trust. In 2025, reporting the use of algorithms via the ATRS became mandatory for central government departments and arm’s-length bodies (a specific classification of public bodies in the UK).

To build on the momentum, the government committed in the Roadmap for Modern Digital Government to compile and publish, by the end of 2025, records of all in-scope algorithmic tools identified as of March 2025 in government departments (excluding their associated public bodies). This was achieved, and at the time of writing, 125 ATRS records have been published, with more in progress.

International engagement and CEIMIA initiatives to improve ATRS

The Standard received international attention, with the OECD identifying it as a world-leading initiative and featuring it on the Observatory of Public Sector Innovation. In Europe, the Estonian government translated the Standard and piloted it as part of the UK-Estonia Tech Partnership, providing insights into how the Standard can be implemented across different jurisdictions.

Following the 2024 GPAI project on algorithmic transparency, the UK government’s Department for Science, Innovation and Technology (DSIT) entered into a partnership with CEIMIA, under the brand of the Centres of the GPAI Expert Community, to review the existing UK transparency standard and obtain rapid feedback as the standard continues to develop. The ATRS also benefits from input from a group of international experts, many of whom participated in the initial 2024 GPAI project. The results of the review informed a set of recommendations, which the UK government is currently considering for a future update.

Testing the Standard through international partnerships, such as the one with Estonia and the Centres of the GPAI Expert Community, is a way for the UK to share best practices, a cornerstone of driving responsible data and AI practices globally.

Transparency and security must work together

Responsibility in a public technology context is often about striking a balance, which can require difficult trade-offs. Transparency matters, but so does security, especially in today’s geopolitically unstable environment.

How algorithms interact with and shape public life remains a major focus worldwide. One of the key themes at the India AI Impact Summit 2026 was Safe and Trusted AI, under which transparency was a specific concern, and the G7 could also take it up as a critical issue.

Transparency is essential for governments adopting algorithmic tools to enhance productivity and growth. Ensuring that it is a priority for public-sector organisations is an ongoing learning process. As the ATRS gains wider recognition and adoption in the UK and beyond, DSIT continues to explore ways to improve it. Part of this involves researching how public-sector teams interact with the ATRS process and how to balance transparency with security and safety considerations, including those related to cyber threats.

In the end, all of this helps DSIT strike a healthy balance between maximising transparency, which protects citizens, and ensuring that digital government services remain safe and secure.

Get in touch!

Governments can contact the Centres of the GPAI Expert Community directly for help with algorithmic transparency at info@ceimia.org.