AIM: AI Incidents and Hazards Monitor

Automated monitor of incidents and hazards from public sources (Beta).

AI-related legislation is gaining traction, and effective policymaking needs evidence, foresight and international cooperation. The OECD AI Incidents and Hazards Monitor (AIM) documents AI incidents and hazards to help policymakers, AI practitioners, and all stakeholders worldwide gain valuable insights into the risks and harms of AI systems. Over time, AIM will help to show risk patterns and establish a collective understanding of AI incidents and hazards and their multifaceted nature, serving as an important tool for trustworthy AI. AI incidents seem to be getting more media attention lately, but they have actually declined as a share of all AI news (see chart below).

The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

[Chart: AI incidents and hazards as a percentage of total AI events]

AI System ChatCPR Outperforms 911 Dispatchers in CPR Guidance

Researchers from UC San Diego, University of Pittsburgh, and Johns Hopkins developed ChatCPR, an AI-powered CPR coaching agent. In studies, ChatCPR provided more accurate and guideline-compliant CPR instructions than human 911 dispatchers, demonstrating potential to improve emergency cardiac arrest outcomes. The system is open-source and has been tested on real emergency call data.[AI generated]

Industries:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event involves an AI system (ChatCPR) used in a critical health-emergency context to guide CPR, directly affecting the health and survival of people experiencing cardiac arrest. Its use has been shown to improve the quality of CPR instructions compared with human dispatchers, which relates directly to reducing injury or harm to health. Although the system is still in testing and not yet widely deployed, the study shows realized benefits in simulated and retrospective real-call scenarios, indicating that the AI is directly involved in improving health outcomes. Therefore, this qualifies as an AI Incident because the AI system's use has directly led to a positive health impact, addressing harm in emergency medical response.[AI generated]

AI and Drones Heighten Global Nuclear Terrorism Threat

UN officials and experts warn that the proliferation of AI and militarized drones has raised the risk of nuclear terrorism to unprecedented levels. While no nuclear terrorist attack has occurred, the potential misuse of AI-enabled technologies by terrorist groups poses a credible, high-impact global threat, prompting urgent calls for enhanced governance.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article describes a credible and significant potential threat involving AI-enabled technologies (e.g., drones potentially used to deliver dirty bombs) that could lead to catastrophic harm if realized. Since no actual nuclear terrorist attack involving AI has occurred, the event represents a plausible future risk rather than a realized harm. Therefore, it fits the definition of an AI Hazard, as the development and use of AI in this context could plausibly lead to an AI Incident involving severe harm to people, communities, and the environment.[AI generated]

Xpeng Begins Mass Production of AI-Powered Robotaxis in China

Chinese EV maker Xpeng has started mass production of its first AI-driven robotaxi at its Guangzhou headquarters, aiming for fully driverless operations by 2027. Pilot operations will begin later this year, marking a significant step toward large-scale deployment of autonomous vehicles in China.[AI generated]

Industries:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event involves the use of AI systems in autonomous vehicles (robotaxis) that will operate without drivers, which clearly fits the definition of an AI system. The mass production and planned deployment of these robotaxis could plausibly lead to incidents involving injury, disruption, or other harms if the AI systems fail or are misused. Since no actual harm has been reported yet, but the potential for harm is credible and foreseeable, this event is best classified as an AI Hazard rather than an AI Incident or Complementary Information.[AI generated]

Disneyland Sued Over Undisclosed Use of Facial Recognition AI

Disneyland faces a $5 million class action lawsuit alleging it failed to properly disclose the use of facial recognition AI at park entrances, violating privacy and consumer protection laws. The suit claims biometric data, including that of children, was collected without adequate notice or explicit consent from visitors.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

AI system task:

Why's our monitor labelling this an incident or hazard?

Facial recognition technology is an AI system that processes biometric data to identify individuals. The lawsuit claims that Disney's deployment of this AI system at park entrances has led to violations of privacy and consumer protection laws, constituting harm under the framework's category of violations of human rights or legal obligations. The harm is treated as realized because a lawsuit has been filed alleging these violations, indicating direct or indirect harm caused by the AI system's use. Hence, this qualifies as an AI Incident rather than a hazard or complementary information.[AI generated]

Hyderabad Police Deploy AI Tool SOCEYE for Harmful Content Detection

Hyderabad Police launched SOCEYE, an AI-based platform for real-time social media surveillance. The system identifies and enables removal of harmful content, such as hateful and communally sensitive posts, supporting law enforcement and public safety by mitigating potential disruptions to public order.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

AI system task:

Why's our monitor labelling this an incident or hazard?

SOCEYE is an AI system explicitly described as performing intelligent social media surveillance, real-time monitoring, and automated alerts to identify harmful content. Its use has directly led to the identification and removal of objectionable posts, which helps prevent harm to communities by maintaining peace and public order. Since the AI system's use has directly contributed to harm mitigation and public safety, this qualifies as an AI Incident under the framework, as it involves harm to communities and the AI system's role is pivotal in addressing that harm.[AI generated]

Draganfly Acquires AI-Enabled Autonomous Drone Technology for Defense Applications

Draganfly Inc. has agreed to acquire Skip Dynamix's ultra-low-cost, mass-producible fixed-wing drone technology, which features AI-enabled autonomy for surveillance, electronic warfare, and one-way missions. The acquisition expands Draganfly's defense portfolio, raising concerns about future risks from deploying autonomous AI systems in military operations.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event explicitly involves AI systems: autonomous drones with AI-enabled autonomy and mission systems. The article details the acquisition and integration of technology designed for military and defense use, including autonomous ISR, electronic warfare, and one-way missions, which inherently carry risks of harm if deployed. However, the article does not describe any realized harm or incidents resulting from these AI systems. The focus is on the acquisition and strategic positioning, implying potential future risks rather than current harm. Thus, it fits the definition of an AI Hazard, as the development and intended use of these AI systems could plausibly lead to AI Incidents in the future, but no direct or indirect harm has yet occurred.[AI generated]

AI-Generated Deepfake Intimate Image Abuse Spurs Regulatory Action in UK

Generative AI systems have enabled the creation and spread of non-consensual intimate images and sexualized deepfakes, causing significant harm to women and girls in the UK. Ofcom has issued new guidelines urging tech firms to adopt detection measures such as hash-matching technology, but survivors say current measures remain insufficient.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article clearly involves AI systems, specifically generative AI used to create deepfake intimate images, which have caused harm to women and girls by spreading non-consensual sexualized content. This constitutes a violation of rights and harm to communities. The regulatory update aims to address these harms by mandating detection and blocking technologies. Since the harm is ongoing and the AI system's use has directly led to violations and distress, this qualifies as an AI Incident. The article reports on both the incident and the regulatory response, but the harm has materialized and is central to the report, so it is not merely a complementary update.[AI generated]

Ondas Acquires Israeli AI Defense Firm Omnisys for Battlefield Orchestration

Ondas Holdings announced the $200 million acquisition of Omnisys, an Israeli developer of AI-powered Battle Resource Optimization (BRO) software for autonomous defense planning and real-time battlefield decision-making. The BRO platform, operational for 25 years, poses credible risks of harm in military contexts, but no incident has occurred.[AI generated]

AI principles:

Industries:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions an AI system (Omnisys' BRO platform) used for autonomous defense decision-making, which fits the definition of an AI system. The event involves the development and use of this AI system in military contexts. Although no direct or indirect harm has been reported, the nature of the AI system—autonomous battlefield decision-making and counter-drone operations—implies a credible risk of future harm, including injury, disruption, or violations of rights in conflict scenarios. Therefore, the event qualifies as an AI Hazard due to the plausible future harm from the AI system's deployment in defense applications. It is not an AI Incident because no harm has materialized, nor is it Complementary Information or Unrelated as the focus is on the AI system's acquisition and potential impact.[AI generated]

Tesla Plans Nationwide Expansion of Fully Autonomous Cars Amid Safety Concerns

Elon Musk announced Tesla's plan to expand fully self-driving cars without human safety monitors across the US this year, following initial deployment in Texas. Despite operational issues and recent safety-related recalls, Tesla aims to increase the use of AI-driven vehicles, raising concerns about potential future harms from autonomous system malfunctions.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions AI systems in the form of fully self-driving cars operating without human safety monitors, which is a clear AI system. While recalls due to safety issues are noted, no actual accidents or injuries are reported, so no realized harm is described. The planned expansion of these systems nationwide without human monitors plausibly increases the risk of future incidents or harms, fitting the definition of an AI Hazard. Other AI-related developments mentioned do not describe harm or risk directly. Hence, the event is an AI Hazard due to the credible potential for harm from the deployment of these autonomous vehicles without human oversight.[AI generated]

Greece Deploys AI System to Monitor Train Communications for Safety

The Greek Ministry of Transport, led by Deputy Minister Konstantinos Kyranakis, announced the deployment of an AI system to monitor real-time communications between train stationmasters and drivers. The AI transcribes and analyzes conversations to detect protocol deviations, aiming to enhance railway safety and enable timely intervention, though no incidents have yet occurred.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The AI system is explicitly described as transcribing and analyzing communications to ensure compliance with safety protocols in railway operations. Its use relates directly to managing human safety in a critical infrastructure context (railway transport). Although no harm has yet occurred, the system's role is to prevent injury or harm to people by detecting and addressing unsafe communication practices in real time. Because the system operates in critical infrastructure, a malfunction or misuse could itself plausibly lead to harm, which qualifies the event as an AI Hazard. Since no actual harm or incident is reported, it is not an AI Incident; the focus is on the system's deployment and potential impact on safety.[AI generated]

AI-Generated Deepfake Ads Fuel Massive International Dietary Supplement Scam

A criminal network based in Sofia used AI to create deepfake videos and manipulated images of well-known personalities for online ads promoting fake dietary supplements. The scheme, active since 2019, generated over €240 million, deceived millions across Europe, and posed health risks. Authorities dismantled the operation in a coordinated international effort.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly states that AI was used to create aggressive online advertisements with fake endorsements by public figures, which misled consumers into buying products with no proven health benefits, falsely marketed as medicines. This directly led to harm by deceiving vulnerable populations, including elderly people, about health effects, which is a violation of consumer rights and potentially harmful to health. The criminal scheme generated significant financial damage and exploited AI-generated content for fraudulent purposes. Therefore, the event meets the criteria for an AI Incident due to the direct harm caused by the AI system's use in the fraudulent advertising scheme.[AI generated]

Trump Allies Urge Mandatory Government Testing for Advanced AI Systems

A coalition of over 60 conservative allies, including Steve Bannon and the group Humans First, urged President Trump to require mandatory government testing and approval of advanced AI systems before public release, citing risks to national security, jobs, and critical infrastructure. No actual AI incident has occurred yet.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Why's our monitor labelling this an incident or hazard?

The article focuses on a political advocacy effort urging stricter AI regulation due to plausible future risks posed by powerful AI systems. There is no indication that any AI system has caused harm or malfunctioned yet. The event is about potential risks and policy responses, not about realized harm or incidents. Therefore, it fits the definition of an AI Hazard, as it highlights credible concerns that AI systems could plausibly lead to significant harms if unregulated.[AI generated]

AI Deepfakes Fuel Misinformation in Wall Street Sexual Harassment Case

Following a Wall Street sexual harassment lawsuit filed in New York, AI-generated deepfakes and memes have spread false narratives and salacious fabrications online. This malicious use of AI has caused reputational harm to those involved and misled the public, highlighting the dangers of AI-driven misinformation.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly describes AI-generated deepfakes and memes that fabricate scenes and narratives about the lawsuit, which are spreading widely on social media. These AI systems are used maliciously to create false content that harms the reputations of the individuals involved and misleads the public, constituting harm to communities and individuals. Since the harm is occurring due to the AI-generated content, this qualifies as an AI Incident under the framework, specifically harm to communities and individuals through misinformation and reputational damage.[AI generated]

AI-Powered Dolunay Project Rapidly Tackles Cyber Fraud in Turkey

Turkey's Interior Ministry launched the AI-supported Dolunay project to combat cyber fraud. The system analyzes citizen reports and digital evidence, enabling rapid intervention and operational case creation within 18 minutes. Multiple agencies collaborate via a unified platform, aiming to reduce financial harm and streamline law enforcement response.[AI generated]

Industries:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly states that an AI-supported analysis infrastructure processes data and generates actionable outputs to combat fraud. The AI system's use directly aims to prevent and mitigate harm caused by fraud, a recognized harm to persons and communities. Since the system is actively deployed against real, ongoing fraud cases, this qualifies as an AI Incident because of the AI system's involvement in harm prevention and operational response. Although the article focuses on the system's deployment and intended benefits, its operational use against actual fraud cases means it is not merely a hazard or complementary information but an AI Incident.[AI generated]

xAI Delays Payment for Employee Tax Data Used in Grok AI Training

Elon Musk's xAI asked employees to submit their personal tax returns as training data for its Grok AI chatbot, promising $420 per submission. Two months later, the payments remain unpaid, raising concerns about data governance and employee trust, though no direct harm or data misuse has been reported.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

AI system task:

Why's our monitor labelling this an incident or hazard?

An AI system (Grok chatbot) is involved, as it is being trained using personal tax return data. The event stems from the use and development of the AI system, specifically the collection of sensitive data for training. Although no direct harm has been reported yet, the failure to pay employees and the collection of sensitive financial data without clear resolution could plausibly lead to violations of privacy rights or other harms. Since no actual harm has been documented, but plausible future harm exists, this event fits the definition of an AI Hazard rather than an AI Incident. It is not merely complementary information because the main focus is on the problematic data collection and payment failure, which pose a credible risk of harm.[AI generated]

AI Tools Uncover Critical Linux and OpenClaw Vulnerabilities; AI-Generated Reports Disrupt Bug Bounty Programs

AI auditing tools, including V12, have uncovered multiple critical security vulnerabilities in the Linux kernel and in AI agent platforms such as OpenClaw, enabling privilege escalation and backdoor installation. Simultaneously, AI-generated invalid reports are overwhelming bug bounty programs, disrupting cybersecurity operations. These incidents highlight both the benefits and risks of AI in cybersecurity.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event explicitly involves an AI system, OpenClaw, which is an AI agent integration platform. The vulnerabilities allow attackers to execute arbitrary code, modify configurations, and implant backdoors, which directly harms system integrity and security. This constitutes harm to property and potentially to communities depending on the system's reliability. The exploitation of these vulnerabilities has already occurred or is highly plausible given the unpatched systems, fulfilling the criteria for an AI Incident. The event is not merely a warning or potential risk (AI Hazard), nor is it a general update or response (Complementary Information).[AI generated]

AI Toll System Faces Challenges from Tampered Plates and Exempt Vehicle Management in India

India's AI-enabled Multi-Lane Free Flow (MLFF) tolling system faces issues as tampered number plates hinder accurate toll collection, causing revenue loss. The government is also planning special FASTags for toll-exempt vehicles to prevent wrongful charges, highlighting operational challenges in AI-based toll enforcement.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The AI system (automatic number plate recognition integrated with AI-enabled cameras) is explicitly mentioned and is central to the toll collection process. The tampering of number plates interferes with the AI system's function, causing revenue leakage and enforcement difficulties. While this is a harm related to government revenue and system integrity, it does not constitute direct injury, rights violation, or physical harm. The event describes a current problem that could plausibly lead to more significant harms if unaddressed, such as widespread toll evasion or system failure. Hence, it fits the definition of an AI Hazard rather than an AI Incident or Complementary Information.[AI generated]

AI-Guided Drones Cause Mass Destruction in Ukraine-Russia Conflict

Ukraine's deployment of AI-assisted intercept drones has resulted in the destruction of over 33,000 Russian drones in March 2026, significantly altering the dynamics of the Russia-Ukraine war. These AI-enabled drones, capable of autonomous targeting and interception, have directly caused casualties and large-scale equipment losses.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Autonomy level:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event involves AI systems in the form of attack drones, which are typically AI-enabled for autonomous functions. The article focuses on the exhibition and interest in these systems, not on any realized harm or malfunction. Therefore, it represents a plausible future risk of harm due to the proliferation and potential use of AI-enabled attack drones, qualifying it as an AI Hazard rather than an AI Incident. There is no indication of complementary information or unrelated content.[AI generated]

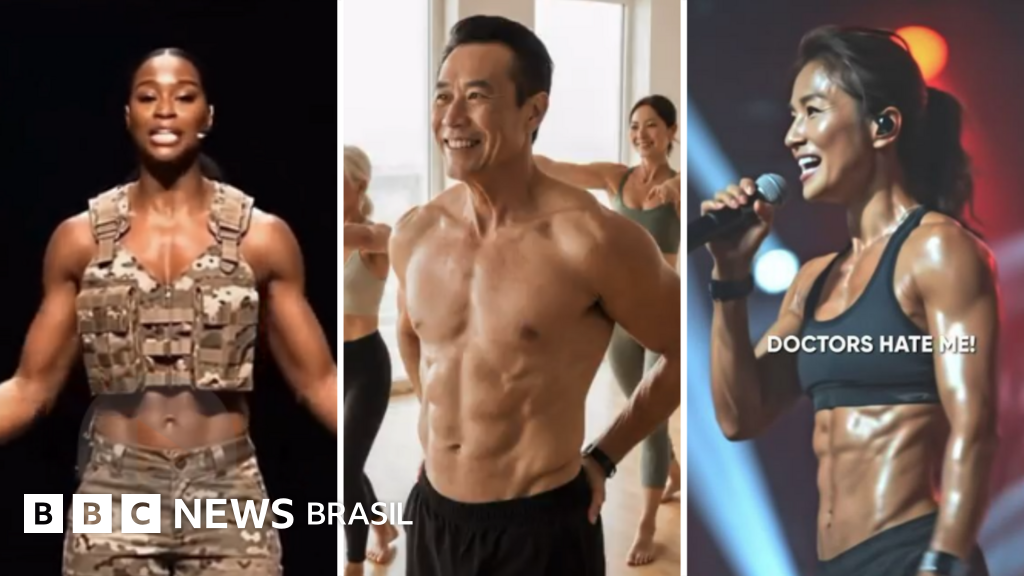

AI-Generated Fitness Instructors Mislead Consumers with Unrealistic Claims

Investigations revealed that AI-generated fitness instructors and content are being used in social media ads to promote scientifically implausible results, misleading consumers and violating UK advertising standards. The deceptive use of AI has led to complaints and regulatory action due to potential harm to mental and physical health.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

Business function:

AI system task:

Why's our monitor labelling this an incident or hazard?

The event involves AI systems generating synthetic fitness instructor personas and content that mislead consumers with false claims about fitness results. This use of AI has directly contributed to harm by fostering unrealistic expectations and potential mental health issues, which qualifies as harm to communities and individuals. The regulatory response and complaints indicate that harm has materialized. Therefore, this qualifies as an AI Incident because the AI system's use has directly or indirectly led to harm through deceptive advertising and misinformation.[AI generated]

Experts Warn of AI-Driven Fake News Risks in Brazilian Elections

Brazilian electoral authorities and experts warn that AI could intensify the spread of fake news during upcoming elections, especially amid political polarization and low digital literacy. The Tribunal Superior Eleitoral is preparing to address these risks, but no AI-driven incidents have yet occurred.[AI generated]

AI principles:

Industries:

Affected stakeholders:

Harm types:

Severity:

AI system task:

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions the use of AI in election campaigns and the potential for it to exacerbate the circulation of false information, which could harm communities by undermining democratic processes. However, it does not describe any realized harm or specific AI system malfunction or misuse that has already caused damage. Instead, it highlights the plausible risk and the need for vigilance and capacity building by electoral authorities. Therefore, this event fits the definition of an AI Hazard, as it plausibly could lead to an AI Incident involving harm to communities through misinformation but has not yet done so.[AI generated]