AIM: AI Incidents and Hazards Monitor

Automated monitor of incidents and hazards from public sources (Beta).

AI-related legislation is gaining traction, and effective policymaking needs evidence, foresight and international cooperation. The OECD AI Incidents and Hazards Monitor (AIM) documents AI incidents and hazards to help policymakers, AI practitioners, and all stakeholders worldwide gain valuable insights into the risks and harms of AI systems. Over time, AIM will help to show risk patterns and establish a collective understanding of AI incidents and hazards and their multifaceted nature, serving as an important tool for trustworthy AI. Although AI incidents appear to be receiving more media attention, their share of all AI news has declined.

The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

US Probes Illegal Smuggling of Nvidia AI Chips to China via Thailand

US authorities are investigating allegations that Thailand-based OBON Corp helped smuggle billions of dollars' worth of Super Micro servers containing Nvidia AI chips into China, potentially violating US export controls. Alibaba is named as a possible end customer. The case highlights risks in AI hardware supply chains and export law compliance.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves AI system hardware (Nvidia AI chips) whose use and transfer are under investigation for potentially breaching legal export restrictions. This constitutes a plausible risk of violation of legal obligations and possibly other harms if the restricted technology is used in unauthorized ways. Since no actual harm or incident is reported yet, but there is a credible risk of legal violations and regulatory circumvention, this qualifies as an AI Hazard rather than an AI Incident. The article focuses on the potential and ongoing investigation rather than a confirmed incident causing harm.[AI generated]

Vietnam Uses AI for Online Propaganda and Censorship

Vietnam's Communist Party is implementing a strategy to use AI-powered moderation tools and social media influencers to control online narratives and suppress dissent. The plan involves recruiting thousands of AI experts to remove content and guide discussions, leading to ongoing violations of freedom of expression and information.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves the use of AI systems explicitly for content moderation and propaganda dissemination, which directly impacts human rights by censoring dissent and controlling information. The article details concrete plans and ongoing actions, indicating realized harm rather than just potential risk. Hence, this qualifies as an AI Incident due to the direct role of AI in violating rights and harming communities through ideological control and censorship.[AI generated]

NHTSA Investigates Avride-Uber Robotaxi Crashes in Texas

The US National Highway Traffic Safety Administration is investigating Avride, Uber's autonomous vehicle partner, after 16 crashes in Dallas and Austin that involved property damage and one minor injury. The incidents, linked to failures in Avride's AI driving system, raise concerns about the safety and competence of self-driving robotaxis.[AI generated]

Why's our monitor labelling this an incident or hazard?

The autonomous vehicles operated by Avride are AI systems as they perform complex real-time decision-making for navigation and control. The crashes caused property damage and a minor injury, which are harms directly linked to the AI system's malfunction or insufficient capability. The investigation by NHTSA confirms the AI system's involvement in these harms. Therefore, this event qualifies as an AI Incident due to realized harm caused by the AI system's use and malfunction.[AI generated]

First Case of AI Addiction Treated in Venice

In Venice, Italy, a 20-year-old woman has been treated by the local addiction service (Serd) for behavioral addiction to an AI conversational system. The AI's adaptive responses reinforced her dependency, leading to social isolation and mental health harm. This is the first such case reported in Italy.[AI generated]

Why's our monitor labelling this an incident or hazard?

An AI system is explicitly involved as the source of the behavioral addiction, with the AI's adaptive responses contributing to the harm experienced by the patient. The harm is to the health of a person, fitting the definition of an AI Incident. The article describes an actual case of harm, not just a potential risk or general information, so it qualifies as an AI Incident.[AI generated]

JR East to Trial Level 4 Autonomous Buses on Kesennuma Line BRT

JR East will conduct Level 4 autonomous driving trials on a 15.5 km section of the Kesennuma Line BRT in Miyagi, Japan. The AI system will handle all driving and emergency stops under specific conditions, with staff onboard for safety. No harm has occurred, but future risks remain plausible.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions Level 4 autonomous driving, which involves AI systems for vehicle control. The event is about testing and development, with no reported injury, disruption, or rights violation. Since no harm has occurred, but the system could plausibly lead to harm if malfunctioning in the future, this qualifies as an AI Hazard. It is not an incident because no harm has materialized, nor is it complementary information or unrelated news.[AI generated]

Meta's AI-Driven Account Purge Causes Mass Suspensions and Follower Losses

Meta's recent deployment of advanced AI systems to enforce age restrictions and remove fake or inactive accounts on Instagram and Facebook led to widespread account suspensions, including for legitimate users and celebrities like Lee Yufen and Sunny Wang. The AI's misjudgments caused significant follower losses and user distress, especially in Taiwan.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions the use of advanced AI systems by Meta to analyze user profiles and images to enforce age restrictions and remove fake or inactive accounts. The AI system's use has directly caused the removal of large numbers of followers and account suspensions, which constitutes harm to communities (disruption of social media communities and user reputations) and potential violations of user rights (account suspensions without clear due process). The harm is realized and ongoing, not merely potential. Hence, this is an AI Incident rather than a hazard or complementary information.[AI generated]

Samsung Galaxy Watch Uses AI to Predict Fainting and Prevent Injuries

Samsung, in collaboration with Chung-Ang University Gwangmyeong Hospital in South Korea, has developed an AI-powered feature for the Galaxy Watch 6 that predicts vasovagal syncope (fainting) episodes. By analyzing biosignals, the AI system can warn users before fainting, potentially reducing injuries from sudden falls.[AI generated]

Why's our monitor labelling this an incident or hazard?

An AI system (the algorithm analyzing bio-signals from the smartwatch) is explicitly involved in predicting a medical condition that can lead to physical harm (injuries from falls). The AI's use directly contributes to harm prevention by providing early alerts, thus addressing potential injury risks. Since the AI system's use is linked to preventing injury and improving health outcomes, and the event reports successful prediction and clinical validation, this constitutes an AI Incident involving harm to health (a).[AI generated]

AI-Powered Cyberattacks Threaten Global Financial Stability

The IMF and cybersecurity experts warn that advanced AI models, such as Anthropic's Mythos, can autonomously discover and exploit software vulnerabilities, enabling high-frequency, complex cyberattacks on financial systems. These AI-driven threats have already heightened risks to critical infrastructure, prompting regulatory responses and industry concern over potential systemic financial instability.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves AI systems (frontier AI models like Claude Mythos) that could be misused by hackers to launch cyberattacks, which could disrupt critical infrastructure and cause harm. However, the article does not report any realized harm or incident caused by AI misuse; it mainly describes the plausible future threat and the government's response to it. Therefore, this qualifies as an AI Hazard, as the AI system's misuse could plausibly lead to an AI Incident, but no incident has yet occurred.[AI generated]

Chinese Courts Rule Against AI Platforms for Defamation and Copyright Infringement

In China, Baidu's AI system falsely claimed a lawyer was convicted, causing reputational harm and resulting in a court-ordered apology. Separately, an AI search platform displayed pirated TV links, leading to a copyright lawsuit. Courts found Baidu liable for defamation, while the search platform was exempted due to prompt remedial action and lack of intent.[AI generated]

Why's our monitor labelling this an incident or hazard?

The AI system (Baidu's AI smart answer feature) generated false information about a lawyer, falsely stating he was sentenced to prison, which damaged his social reputation. This is a direct harm caused by the AI system's output, meeting the definition of an AI Incident due to violation of rights (reputational harm). The court ruling and legal consequences confirm the harm has materialized and is attributable to the AI system's use. Hence, the event is classified as an AI Incident.[AI generated]

US Judge Rules Use of ChatGPT to Cut Humanities Grants Unconstitutional

The US Department of Government Efficiency (DOGE) used ChatGPT to identify and terminate over $100 million in National Endowment for the Humanities grants, targeting projects linked to DEI, Holocaust education, and Black history. A federal judge ruled this AI-driven process unconstitutional, citing viewpoint discrimination and violation of rights.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly states that ChatGPT was used by DOGE employees to flag grants for cancellation based on keywords, which led to the termination of grants and caused irreparable injury to the plaintiffs, including disruption of expression and research. The harms are directly linked to the AI system's use in decision-making that violated constitutional protections and caused widespread disruption. This meets the criteria for an AI Incident because the AI system's use directly led to realized harm involving violations of rights and harm to communities.[AI generated]

AI-Generated Fake Social Media Accounts Spread Political Misinformation in South Korea

AI-generated images of young women were used to create fake social media accounts in South Korea, spreading political messages and deceiving users. The accounts, operated by men, used deepfake technology to manipulate public perception, leading to misinformation, violation of individual rights, and undermining social trust.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly states that the videos were created using AI technology to fabricate a person's appearance and speech, which were then widely shared on social media, deceiving many people. This manipulation of public perception through AI-generated fake content is a direct cause of harm to communities by spreading misinformation and undermining democratic discourse. Therefore, this event qualifies as an AI Incident due to realized harm caused by AI misuse.[AI generated]

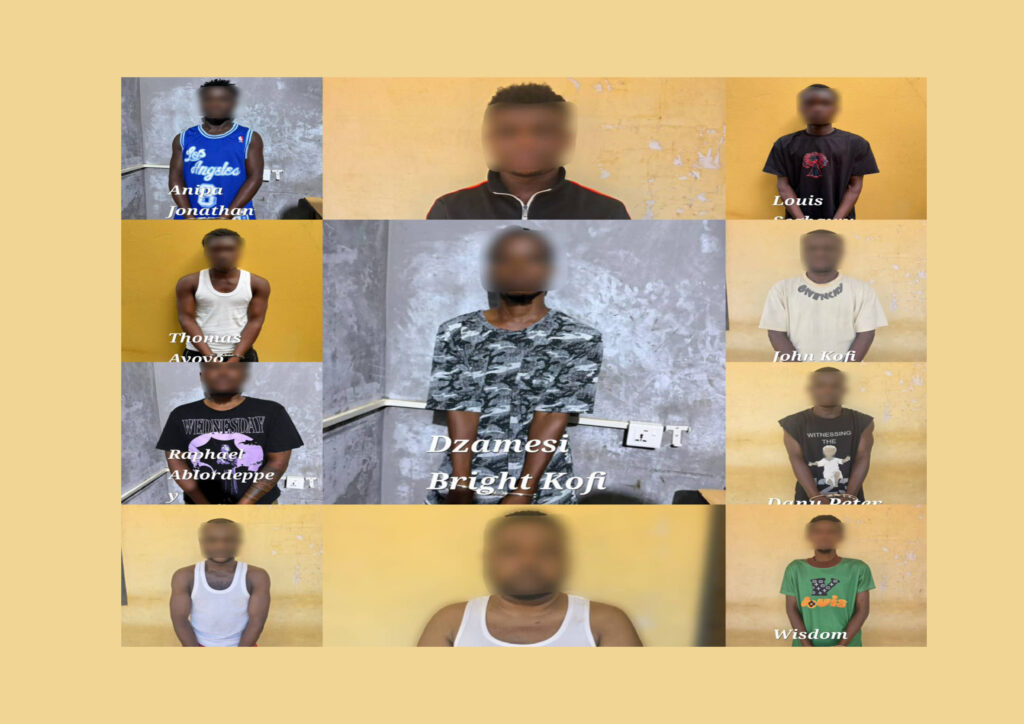

11 Arrested for Deepfake AI Scam Impersonating Ghana's Former President

Ghanaian police arrested 11 suspects, including Nigerian nationals, for using AI-generated deepfake videos to impersonate former President John Dramani Mahama. The suspects allegedly used the videos in online scams to solicit money and sensitive information, causing harm through deception and fraud. Operations occurred in the Volta Region in May 2026.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly mentions the use of AI-generated deepfake videos, which are AI systems generating synthetic media. The fraudulent use of these videos to impersonate a public figure and solicit money and personal information constitutes a violation of rights and harm to communities. Since the harm has already occurred and arrests have been made, this qualifies as an AI Incident rather than a hazard or complementary information.[AI generated]

AI-Assisted Cyberattack Targets Mexican Water Utility

Hackers used commercial AI tools, including Anthropic's Claude and OpenAI's GPT models, to plan and execute a cyberattack on Monterrey's municipal water and drainage utility in Mexico. The AI systems enabled rapid reconnaissance, credential harvesting, and malicious scripting, leading to IT system compromise and attempted OT system breach between December 2025 and February 2026.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly states that the hackers used an AI system to generate exploitation frameworks and guide their attacks, leading to successful data theft from government IT systems. This constitutes direct involvement of an AI system in causing harm (data theft, a violation of property and community harm). The failure to breach OT systems does not negate the realized harm caused by the AI-driven attack on IT systems. Therefore, this event meets the criteria for an AI Incident due to the direct role of AI in enabling a harmful cyberattack with realized consequences.[AI generated]

AI-Coded Apps Leak Sensitive Data Due to Poor Security

Researchers at RedAccess found over 5,000 web apps built with AI coding tools like Lovable, Replit, Base44, and Netlify exposed sensitive corporate and personal data due to inadequate security. These apps, often created by non-experts, were publicly accessible, leading to privacy violations and potential regulatory breaches.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves AI coding tools that have been used to create applications exposing sensitive data, including personal and corporate information. The exposure of this data is a direct harm to privacy and security, which is a violation of rights and a significant harm. The AI systems' default settings and ease of use without proper security controls have directly led to this harm. Although some companies argue that public apps are expected behavior, the scale and nature of the data exposed indicate a failure in the AI systems' deployment and use, leading to real harm. Hence, this qualifies as an AI Incident rather than a hazard or complementary information.[AI generated]

Hacker Exploits Security Flaws in Yarbo Robot Lawnmowers, Demonstrates Physical and Privacy Risks

Security researcher Andreas Makris remotely hacked Yarbo robot lawnmowers, demonstrating their severe vulnerabilities. He controlled the robots from Germany, nearly running over a Verge editor in the US, and accessed sensitive data. The incident highlights risks of physical harm and privacy breaches due to poor AI security design.[AI generated]

Why's our monitor labelling this an incident or hazard?

The robot lawnmowers are AI systems as they autonomously navigate and perform complex tasks like mowing, using onboard computing and sensors. The event involves the use and malfunction (security vulnerabilities) of these AI systems, which have directly led to harms: physical danger to a person (the Verge editor in the mower's path), privacy violations (unauthorized access to cameras, GPS, Wi-Fi credentials), and potential broader harms (botnet formation, network intrusion). The article documents actual exploitation and demonstration of these harms, not just potential risks. Hence, it meets the criteria for an AI Incident rather than a hazard or complementary information.[AI generated]

US-China Consider Formal AI Talks to Prevent Military and Economic Crises

The US and China are considering formal, regular dialogues to address risks from AI competition, including potential crises from autonomous military systems, loss of AI control, and misuse by non-state actors. The talks aim to establish safeguards and prevent AI-driven military or economic crises amid escalating technological rivalry.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves AI systems as it concerns AI models and autonomous military systems, and the discussion is about preventing risks and crises related to AI. Since no actual harm or incident has occurred yet, but there is a credible risk of future harm (e.g., AI-driven military escalation or attacks), this qualifies as an AI Hazard. The article's main focus is on the plausible future risks and the planned official dialogue to manage these risks, not on a realized AI incident or complementary information about responses to past incidents.[AI generated]

Hyundai Rotem and Anduril Collaborate on AI-Driven Military Command Systems

Hyundai Rotem and U.S. defense tech firm Anduril have signed an agreement in Seoul to jointly develop AI-based command and control systems for military vehicles, drones, and robots. The collaboration aims to integrate Anduril's Lattice AI OS into unmanned platforms, enabling autonomous operations and swarm control, raising future risks of AI-enabled autonomous weapon systems.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves the development and planned use of an AI system (LatticeOS) for autonomous and semi-autonomous military operations, including swarm control and counter-drone activities. Although no harm has yet occurred, the deployment of AI in lethal or military command systems carries credible risks of injury, violation of rights, or disruption, making this a plausible future hazard. Therefore, this qualifies as an AI Hazard rather than an Incident or Complementary Information, as the article focuses on the system's development and intended operational use without reporting actual harm or incident.[AI generated]

Unauthorized Use of AI-Generated Celebrity Likeness in Livestream Sales Leads to Detention in China

In Datong, China, a netizen named Xing illegally used AI tools to create a digital likeness of KMT chairperson Cheng Liwen for livestream sales without authorization. This misuse of AI for commercial gain infringed on personal rights, disrupted online order, and resulted in administrative detention by local police.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves the use of an AI tool to generate a digital human likeness (AI digital person) of a real individual without authorization, which was then used in live-streaming commerce. This unauthorized use infringes on the person's rights and caused social harm by disturbing network order and misleading the public. The legal action taken confirms the harm and violation of laws. The AI system's misuse directly led to these harms, fitting the definition of an AI Incident involving violations of rights and harm to communities.[AI generated]

AI-Powered DeepLoad Malware Targets Nigerian Institutions

Nigeria's National Information Technology Development Agency (NITDA) has warned of an active AI-powered malware, DeepLoad, targeting government agencies, banks, businesses, and individuals. The malware uses social engineering to infiltrate systems, steal sensitive data, evade antivirus detection, and enable financial fraud and operational disruptions across Nigeria.[AI generated]

Why's our monitor labelling this an incident or hazard?

The DeepLoad malware explicitly incorporates AI-generated code to evade antivirus detection and maintain persistence, qualifying it as an AI system. The malware's active infections have caused direct harms including credential theft, financial fraud, system compromise, and risks to national security, fulfilling the criteria for an AI Incident. The advisory details realized harms and ongoing attacks, not just potential risks, confirming this classification.[AI generated]

IT Contractor Creates Deepfake Videos from Stolen School Staff Photos in Busan

A male IT contractor in Busan, South Korea, illegally accessed 194 female school staff members' PCs, stealing over 220,000 personal files and using AI deepfake technology to create manipulated sexual videos. The incident, uncovered after a USB was found, highlights privacy violations and misuse of AI for harmful content creation.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves the use of AI technology (deepfake generation) to create harmful synthetic sexual videos without consent, which is a violation of human rights and privacy. The AI system's use directly led to harm through the creation and possession of illicit content. The incident is not merely a potential risk but a realized harm, as the deepfake videos were produced and stored. Hence, it meets the criteria for an AI Incident rather than a hazard or complementary information.[AI generated]